Vernacular Video Automation: How Enterprises Launch Conversational Shopping in 175 Languages for the Bharat Market

Key Takeaways

- Vernacular video automation turns static catalogs into conversational shopping across 175+ languages and dialects.

- Indian AI avatars and voice cloning raise trust by mirroring local prosody, code-mixing, and cultural nuance.

- Personalized, intent-triggered videos drive higher watch-through and add-to-cart in Tier-2/3 markets.

- Integrating Hello! UPI with smart speakers enables voice-confirmed checkouts in regional languages.

- A robust governance framework with consent, moderation, and compliance safeguards brand trust.

The digital landscape of India is undergoing a seismic shift as the “Next Billion Users” transition from passive content consumers to active digital transactors. For enterprises aiming to capture the burgeoning Tier-2 and Tier-3 segments, vernacular video automation has emerged as the definitive playbook for scaling trust and engagement. By 2026, the reliance on English-first interfaces has proven insufficient for the 650 million regional language users who demand intuitive, voice-first, and visually rich shopping experiences.

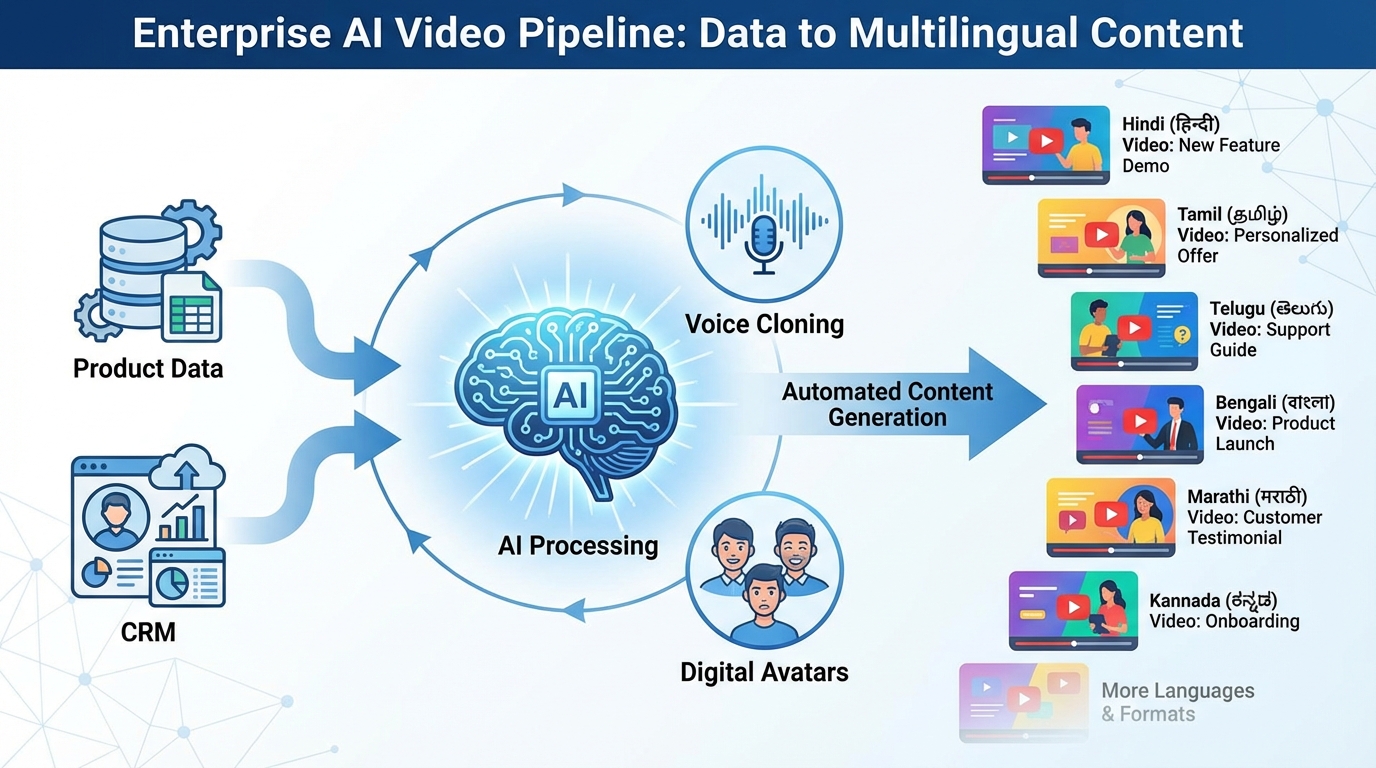

Vernacular video automation refers to the automated generation of personalized, regionally localized, and dialect-aware videos featuring Indian AI avatars and cloned voices. These assets are rendered via high-speed APIs from product feeds or CRM data and distributed across voice assistants, WhatsApp catalogs, and mobile apps. Platforms like TrueFan AI enable enterprises to bridge the linguistic divide, transforming static product catalogs into dynamic, conversational shopping journeys that resonate with the cultural nuances of Bharat.

The problem for modern enterprises is clear: traditional marketing funnels stall when they meet the linguistic diversity of the Bharat market. Users in smaller towns often find text-heavy, English-centric apps intimidating. The solution lies in natural language commerce, where voice-enabled, dialect-specific shopping videos turn simple voice queries into seamless one-tap UPI checkouts. This strategy does not just improve reach; it fundamentally redefines the user experience for a population that thinks, speaks, and shops in regional tongues.

Section 1: Why Bharat Market AI Videos are the Key to Tier-2 and Tier-3 Growth

The demographic reality of India in 2026 dictates that growth is no longer a function of internet penetration, but of linguistic inclusion. Bharat market AI videos are designed for this shift, moving beyond simple translation to deep localization. These AI-generated assets are tailored to regional dialects, optimized for low-bandwidth environments, and delivered through the channels where Bharat lives: WhatsApp and voice-activated interfaces.

Consumer behavior in Tier-2 and Tier-3 cities has shifted toward code-mixed queries—often referred to as Hinglish, Tamlish, or Benglish—and short-form video consumption. A user in Indore might search for “Best mobile under 20,000 dikhao,” blending Hindi and English seamlessly. If the response is a dry text list in English, the conversion opportunity is lost. However, a vernacular video that responds in the same code-mixed dialect, featuring an Indian AI avatar, creates an immediate sense of familiarity and trust.

The ROI levers for this approach are substantial. Data from 2026 indicates that Tier-2/3 cities show a 156% higher growth rate in vernacular AI content engagement compared to English-only content. When the voice, script, subtitles, and call-to-action (CTA) match local language norms, enterprises see a significant lift in watch-through rates and add-to-cart actions. Furthermore, addressing dialect nuances—such as distinguishing between Bhojpuri and Awadhi within the broader Hindi belt—allows brands to achieve a level of hyper-localization that was previously impossible at scale.

Sources:

- Cloud9Digital: AI Marketing Stats 2026

- Reverie Inc: Speech-to-Text Trends and Dialect Diversity

- Fortune India: Technology Shifts Defining India’s Digital Future

Section 2: Building a Multilingual AI Video Generator Stack for Enterprise Scale

To compete in the 2026 economy, enterprises require a sophisticated multilingual AI video generator pipeline that moves beyond basic templates. This stack must integrate product feeds, CRM segmentation, and intent-based FAQs to produce thousands of unique video variants daily. The goal is to create a “virtual reshoot” capability where lines can be updated, prices localized, and offers personalized without the need for a physical production crew.
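As a rough illustration of how a feed can fan out into video variants, the sketch below expands a product feed into one render job per product, segment, and language. The `RenderJob` class, the field names, the language codes, and the script templates are all hypothetical; they are not taken from any vendor API, and a real pipeline would hand each job to whatever rendering service the enterprise uses.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class RenderJob:
    """One video variant to render (hypothetical structure)."""
    sku: str
    segment: str
    language: str
    script: str


def build_render_jobs(products, segments, languages, templates):
    """Expand a product feed into one job per (product, segment, language)."""
    jobs = []
    for item, segment, language in product(products, segments, languages):
        template = templates.get(language)
        if template is None:
            continue  # skip languages that have no reviewed script yet
        script = template.format(name=item["name"], price=item["price"])
        jobs.append(RenderJob(item["sku"], segment, language, script))
    return jobs


products = [
    {"sku": "MOB-101", "name": "Phone A", "price": 18999},
    {"sku": "MOB-102", "name": "Phone B", "price": 14999},
]
segments = ["new_user", "loyalty_gold"]
languages = ["hi", "hi-en", "ta"]  # "ta" has no template below, so it is skipped
templates = {
    "hi": "{name} ab sirf ₹{price} mein.",
    "hi-en": "{name} ka best price: ₹{price}.",
}

jobs = build_render_jobs(products, segments, languages, templates)
print(len(jobs))  # 2 products x 2 segments x 2 templated languages = 8
```

Skipping languages without a template keeps unreviewed scripts out of production, which matters once the variant count reaches the thousands.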

The blueprint for an enterprise-grade pipeline begins with the selection of an Indian AI avatar. This digital spokesperson, whether a brand ambassador or a virtual assistant, must exhibit brand-safe gestures and culturally appropriate prosody. TrueFan AI’s 175+ language support and Personalised Celebrity Videos provide the necessary infrastructure for this, ensuring accurate lip-sync and voice retention across a wide array of dialects. This level of technical precision is critical for staying out of the “uncanny valley” and preserving user trust.

The audio component of this stack relies on AI voice cloning for Indian accents. This involves generating high-fidelity speech that captures the specific lilt and rhythm of regional speakers, from the staccato of Tamil to the melodic flow of Bengali. When combined with Hinglish AI video creation, the result is a communication style that mirrors how people actually speak. Security and governance are equally paramount; the stack must include consent logs for voice cloning and adhere to ISO 27001/SOC 2 standards to protect both the brand and the consumer.
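A consent log is only useful if the rendering pipeline actually checks it. The sketch below shows one possible gate, assuming a hypothetical `VoiceConsent` record with a validity window and a set of permitted use cases; the field names and use-case labels are illustrative and not drawn from any specific platform or standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class VoiceConsent:
    """One consent-log entry for a cloned voice (hypothetical schema)."""
    speaker_id: str
    granted_on: date
    expires_on: date
    allowed_uses: frozenset


def can_render(consent, use_case, today):
    """Allow rendering only if consent is in date and covers this use case."""
    in_window = consent.granted_on <= today <= consent.expires_on
    return in_window and use_case in consent.allowed_uses


consent = VoiceConsent(
    speaker_id="ambassador-01",
    granted_on=date(2026, 1, 1),
    expires_on=date(2026, 12, 31),
    allowed_uses=frozenset({"product_promo", "order_confirmation"}),
)

print(can_render(consent, "product_promo", date(2026, 6, 1)))      # True
print(can_render(consent, "political_message", date(2026, 6, 1)))  # False
print(can_render(consent, "product_promo", date(2027, 1, 1)))      # False
```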

Sources:

- TrueFan AI: Enterprise AI Video Platform 2024/2026

- TrueFan AI: AI Voice Cloning for Indian Accents

- Royalways: Digital Marketing Trends for Indian Brands 2026

Section 3: Scaling Conversational Shopping AI Personalization Across Regional Dialects

The true power of vernacular automation is realized through AI-driven personalization of conversational shopping: the real-time assembly of video responses triggered by specific user intents. Instead of a one-size-fits-all advertisement, the system generates a video that addresses the user by name, references their city, and offers a product based on their previous browsing history or loyalty tier.

Personalization variables in the Bharat context are multifaceted. They include the user’s preferred language, their nearest physical store location, real-time inventory levels, and even festival-specific contexts. For example, a script might read: “Namaste Riya ji, Indore se? Aaj aapke liye ₹499 ka Festive Combo ready hai—UPI pe 10% cashback milega. Order karne ke liye bolo ‘Confirm’ ya WhatsApp pe tap karo.” This level of detail transforms a generic transaction into a personalized consultation.
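The script above is essentially a template with slots for CRM and offer data. A minimal sketch of that substitution, using Python's standard `string.Template`, might look like the following. The variable names (`name`, `city`, `price`, `offer`, `cashback`) and the `personalize` helper are assumptions made for illustration.

```python
from string import Template

# Hinglish script with slots for CRM and offer fields (illustrative).
SCRIPT_HI_EN = Template(
    "Namaste $name ji, $city se? Aaj aapke liye ₹$price ka $offer ready hai. "
    "UPI pe $cashback% cashback milega."
)


def personalize(template, profile, offer):
    """Fill the script; substitute() raises KeyError if any slot is missing."""
    fields = {**profile, **offer}
    return template.substitute(fields)


profile = {"name": "Riya", "city": "Indore"}
offer = {"price": 499, "offer": "Festive Combo", "cashback": 10}

print(personalize(SCRIPT_HI_EN, profile, offer))
```

Using `substitute` rather than `safe_substitute` is a deliberate choice here: a missing variable fails loudly instead of producing a video that greets the customer with an unfilled placeholder.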

Solutions like TrueFan AI demonstrate ROI through these intent-to-video flows, where the latency between a user’s voice query and the delivery of a personalized video is less than 30 seconds. These videos are then distributed across high-engagement channels like WhatsApp, RCS, and smart speakers. By integrating with the enterprise CRM, these videos can also include dynamic elements like EMI options or loyalty point balances, making the path to purchase as frictionless as possible for the regional consumer.

Sources:

- TrueFan AI: Voice Commerce Vernacular India 2026 Guide

- TrueFan AI: Vernacular Video Automation Guide

Section 4: Mastering Vernacular Voice Commerce in India with UPI and Smart Speaker Integration

The convergence of voice interfaces and digital payments is the final frontier of vernacular voice commerce in India. With the National Payments Corporation of India (NPCI) launching “Hello! UPI,” the infrastructure now exists for voice-enabled conversational payments. For enterprises, this means designing a “say-and-see” flow where a voice query leads to a vernacular video response, which then triggers a voice-confirmed checkout.

Smart speaker integration is vital for this ecosystem. Whether through Alexa or Google Assistant, the user journey must be seamless: a user asks their smart speaker about a product in their native dialect; the NLU (Natural Language Understanding) layer detects the language and intent; the personalization engine pulls the relevant offer; and a dialect-specific shopping video is sent to the user’s phone or displayed on a screened device. The transaction is then completed via a voice-prompted UPI flow, where the user confirms the amount and enters their PIN via voice or a secure mobile link.
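The journey described above can be sketched as a small pipeline: understand the query, pick an offer, then prepare the video and the confirmation prompt. Everything below is a toy stand-in. The marker-word "NLU", the catalog fields, and the returned dictionary are hypothetical, and the payment step is deliberately left out because it would go through the UPI provider's own flow rather than application code like this.

```python
from dataclasses import dataclass
from typing import Optional

# Tiny marker lexicon standing in for a real language-identification model.
HINDI_MARKERS = {"dikhao", "chahiye", "kitna", "kaise"}


@dataclass
class Intent:
    language: str
    name: str
    budget: Optional[int]


def understand(query):
    """Toy NLU: tag code-mixed Hindi by marker words and extract a budget."""
    words = query.lower().replace(",", "").split()
    language = "hi-en" if HINDI_MARKERS & set(words) else "en"
    budget = next((int(w) for w in words if w.isdigit()), None)
    return Intent(language=language, name="product_search", budget=budget)


def pick_offer(intent, catalog):
    """Choose the highest-priced item that still fits the stated budget."""
    in_budget = [
        p for p in catalog
        if intent.budget is None or p["price"] <= intent.budget
    ]
    return max(in_budget, key=lambda p: p["price"]) if in_budget else None


def handle_query(query, catalog):
    """Return the next step: a video to send plus a confirmation prompt."""
    intent = understand(query)
    offer = pick_offer(intent, catalog)
    if offer is None:
        return {"step": "no_match", "language": intent.language}
    return {
        "step": "send_video",
        "language": intent.language,
        "sku": offer["sku"],
        "confirm_prompt": f"{offer['name']}, ₹{offer['price']}. Confirm?",
    }


catalog = [
    {"sku": "MOB-101", "name": "Phone A", "price": 18999},
    {"sku": "MOB-103", "name": "Phone C", "price": 24999},
]

print(handle_query("Best mobile under 20,000 dikhao", catalog))
```

Keeping each stage as a separate function makes it possible to swap the toy `understand` step for a production NLU service without touching offer selection or prompt generation.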

Trust is the currency of Bharat. To maintain it, enterprises must implement clear disclosure patterns. Every AI-generated video should include an overlay stating it uses an AI voice and avatar. Furthermore, repeating the order details in the user’s specific dialect before the payment prompt significantly reduces cart abandonment. This “natural language commerce India” approach ensures that the technology feels like a helpful local shopkeeper rather than a faceless corporation.

Sources:

- Government Economic Times: NPCI launches voice-enabled UPI payments

- Paytm: How Hello! UPI Works

- PIB: UPI New Product Launches

Section 5: Tier-2 Voice Adoption Strategies and Governance Frameworks

Rolling out a 175-language video platform requires a structured plan to ensure technical stability and cultural resonance. A typical 90-to-180-day Tier-2 voice adoption plan begins with a pilot in Hindi and Hinglish, focusing on the top five customer FAQs. During the first 30 days, enterprises should generate 500 to 1,000 dialect-specific videos to measure baseline click-through rate (CTR) and add-to-cart metrics.
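To make the baseline measurement concrete, the sketch below aggregates per-video delivery events into CTR and add-to-cart rates by language. The event fields (`language`, `clicked`, `added_to_cart`) are hypothetical, and the sample data is invented purely to show the arithmetic; it is not pilot data.

```python
from collections import defaultdict


def baseline_by_language(events):
    """Compute CTR and add-to-cart rate per language from delivery events."""
    totals = defaultdict(lambda: {"sent": 0, "clicked": 0, "added": 0})
    for event in events:
        bucket = totals[event["language"]]
        bucket["sent"] += 1
        bucket["clicked"] += event["clicked"]        # True counts as 1
        bucket["added"] += event["added_to_cart"]
    return {
        language: {
            "ctr": bucket["clicked"] / bucket["sent"],
            "atc_rate": bucket["added"] / bucket["sent"],
        }
        for language, bucket in totals.items()
    }


# Made-up events for illustration only.
events = [
    {"language": "hi", "clicked": True, "added_to_cart": True},
    {"language": "hi", "clicked": True, "added_to_cart": False},
    {"language": "hi", "clicked": False, "added_to_cart": False},
    {"language": "hi", "clicked": False, "added_to_cart": False},
    {"language": "hi-en", "clicked": True, "added_to_cart": True},
    {"language": "hi-en", "clicked": False, "added_to_cart": False},
]

print(baseline_by_language(events))
```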

As the pilot scales into the 31-90 day window, additional regional languages such as Marathi, Tamil, and Telugu are integrated. This phase also sees the activation of UPI voice flows and the launch of store-level QR codes that trigger voice-video interactions. By the 180-day mark, the coverage should expand to include hyper-local dialects and festival-specific promotions. A rigorous QA rubric is essential throughout this process, focusing on pronunciation accuracy, the correct use of honorifics, and the clarity of price utterances in local scripts.
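The phased plan and QA rubric can be captured as plain data so that tooling can answer "which languages should be live today?" and "has this video passed review?". The sketch below assumes the third phase runs from day 91 to day 180, which the text implies but does not state outright; the phase names and rubric keys are likewise illustrative.

```python
# (start_day, end_day, phase_name, languages added in that phase)
ROLLOUT = [
    (1, 30, "pilot", ["Hindi", "Hinglish"]),
    (31, 90, "expand", ["Marathi", "Tamil", "Telugu"]),
    (91, 180, "hyper-local", ["hyper-local dialects"]),  # boundary assumed
]

QA_CHECKS = ("pronunciation", "honorifics", "price_clarity")


def phase_for_day(day):
    """Return the rollout phase name that covers the given day."""
    for start, end, name, _adds in ROLLOUT:
        if start <= day <= end:
            return name
    raise ValueError(f"day {day} is outside the 180-day plan")


def languages_live(day):
    """Return every language introduced on or before the given day."""
    live = []
    for start, _end, _name, adds in ROLLOUT:
        if day >= start:
            live.extend(adds)
    return live


def passes_qa(review):
    """A video passes only if a reviewer marked every rubric item True."""
    return all(review.get(check) is True for check in QA_CHECKS)


print(phase_for_day(45))   # expand
print(languages_live(45))  # ['Hindi', 'Hinglish', 'Marathi', 'Tamil', 'Telugu']
print(passes_qa({"pronunciation": True, "honorifics": True}))  # False
```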

Governance and brand safety cannot be an afterthought. In the Indian context, regional sensitivity is paramount. This involves native reviewer sign-offs for all dialect scripts to avoid linguistic faux pas. Furthermore, the platform must provide robust content moderation to block any offensive or politically sensitive material. Data residency and compliance with local regulations ensure that the enterprise remains a trusted entity in the eyes of both the government and the consumer.

Sources:

- Fortune India: Trust and Interoperability as Tech Pillars

- TrueFan AI: Security and Compliance for AI Video

Section 6: Optimizing Voice SEO for Regional Languages and Frequently Asked Questions

To ensure discoverability in a voice-first world, enterprises must master voice SEO for regional languages. This involves optimizing content so that voice assistants can surface “speakable” answers in regional dialects. Unlike traditional SEO, which focuses on keywords, voice SEO focuses on conversational phrases and question-led scripting. For instance, instead of targeting “UPI payment,” the focus shifts to “UPI se pay kaise karein?”

Tactics for voice SEO include the use of speakable and FAQ schema, providing concise first-sentence answers that voice assistants can easily read aloud. Generating short, dialect-specific shopping videos as rich answers linked from FAQ pages can significantly boost visibility. These videos serve as “video snippets” for voice queries, providing a visual confirmation that complements the audio response.
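As one possible shape for this markup, the sketch below builds a schema.org `FAQPage` object with a `speakable` block and serializes it as JSON-LD. The question comes from the example above; the answer text, the `hi-Latn` language tag, and the `.faq-answer` CSS selector are assumptions for illustration. Support for `speakable` varies by assistant and language, so it is worth verifying against each platform's current documentation before relying on it.

```python
import json


def faq_jsonld(question, answer, language, speakable_selectors):
    """Build a schema.org FAQPage with one question and a speakable hint."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": language,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": speakable_selectors,
        },
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }


doc = faq_jsonld(
    question="UPI se pay kaise karein?",
    # Illustrative answer; keep the first sentence short enough to read aloud.
    answer="UPI app kholein, amount check karein, aur UPI PIN daal kar confirm karein.",
    language="hi-Latn",  # romanized Hindi; an assumed tag for Hinglish copy
    speakable_selectors=[".faq-answer"],
)

# ensure_ascii=False keeps regional-script text readable in the output.
print(json.dumps(doc, ensure_ascii=False, indent=2))
```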

The Frequently Asked Questions section at the end of this article shows how enterprises can structure content to address common user queries while maintaining high SEO value.

Conclusion: The Future of Natural Language Commerce in India

The transition to vernacular video automation is not merely a trend; it is a fundamental requirement for any enterprise serious about the Bharat market. By combining the power of a multilingual AI video generator with the convenience of voice commerce and UPI, brands can finally speak the language of their customers—literally and figuratively.

As we look toward the remainder of 2026, the winners in the Indian digital economy will be those who move beyond translation to true conversational immersion. By leveraging Indian AI avatars, AI voice cloning for Indian accents, and Hinglish AI video creation, enterprises can build a shopping experience that is as natural as a conversation with a friend. The roadmap is clear: prioritize vernacular, automate personalization, and integrate voice-to-checkout flows to capture the heart of Bharat.

Final Strategic Checklist for Enterprises:

- Audit current Tier-2/3 funnels for English-language friction points.

- Implement voice SEO in regional languages to capture rising voice search volume.

- Partner with a 175-language video platform to scale personalized video production.

- Integrate “Hello! UPI” to enable frictionless, voice-confirmed transactions.

- Maintain strict governance and disclosure standards to build long-term consumer trust.

Recommended Internal Links

- Vernacular Video Automation India

- Vernacular Video Automation India: Scale Multilingual Reach

- Vernacular Video Automation India: Enterprise Playbook

- Vernacular Video Automation India: AI Avatars and Voices

- Vernacular Video Automation India: Scale Localized AI Ads

- Hinglish AI Video Creation for Bharat: Tips and Tools

- Conversational shopping AI Hindi: 2026 voice commerce India

- Master voice SEO regional languages for commerce success

- WhatsApp Catalog Video Marketing: Tactics for 2026

Frequently Asked Questions

How do UPI voice payments work in regional languages?

UPI voice payments, such as NPCI’s “Hello! UPI,” allow users to initiate and authorize transactions using voice commands. The system uses Natural Language Understanding to process the request in languages like Hindi or Tamil. For a secure experience, TrueFan AI can generate a real-time confirmation video that visually verifies the payee and amount before the user provides voice authorization.

How does Hinglish AI video creation improve conversion rates?

Hinglish AI video creation mirrors the natural, code-mixed speaking patterns of modern Indian consumers. By blending English technical terms with regional syntax, brands appear more relatable and less formal. Data shows that this authenticity leads to higher engagement and trust, particularly in Tier-2 and Tier-3 markets where pure-language scripts can sometimes feel “stiff” or disconnected from daily life.

What are the steps for smart speaker commerce integration?

Integration involves four key steps: 1) Account linking between the smart speaker and the user’s brand profile. 2) Syncing the product catalog with real-time price and stock APIs. 3) Developing intent models for regional language prompts. 4) Setting up a video rendering pipeline to send personalized product videos to the user’s companion app or screened device.

Is AI voice cloning for Indian accents legal and ethical?

Yes, provided it follows strict ethical guidelines. This includes obtaining explicit consent from the voice source, using the clone only for authorized purposes, and providing clear on-screen disclosures that the voice is AI-generated. Enterprise platforms must maintain audit trails and adhere to SOC 2 standards to ensure data security and ethical compliance.

What makes a 175 language video platform “enterprise-grade”?

An enterprise-grade platform must offer more than just translation. It requires dialect-specific prosody controls, brand-safe Indian AI avatars, sub-30-second API rendering, and deep integration with CRM and payment systems. It must also provide comprehensive analytics to track performance across different linguistic cohorts, allowing for continuous optimization of the vernacular strategy.