C2PA watermarking AI video marketing: The enterprise blueprint for provenance, disclosure, and trust

Estimated reading time: ~18 minutes

Key Takeaways

- Enterprises must adopt C2PA Content Credentials and digital watermarking to prove AI origins and maintain platform compliance.

- Disclosure-by-default is essential for YouTube, Meta, and TikTok ad approvals and to avoid penalties or de-ranking.

- Align personalization with GDPR and India’s DPDP Act using consent orchestration tied to provenance manifests.

- Implement enterprise provenance architecture: capture, edit, render, publish, and archive with immutable logs.

- Celebrity avatar policies require explicit rights, persistent on-screen labels, and revocation-ready SOPs.

C2PA watermarking has emerged as the trust foundation for enterprise-scale AI video advertising and personalization in 2026. As global platforms like YouTube, Meta, and TikTok enforce rigorous labeling rules, and India’s MeitY deepfake advisories converge with the DPDP Act’s consent-first regime, brands must prioritize audit-ready provenance. A clear Content Credentials strategy for video marketing keeps synthetic media a tool for engagement rather than a liability for brand safety or a barrier to AI search visibility.

The shift toward a “provenance-by-default” ecosystem is no longer optional for the modern CISO or CMO. With the California AI Transparency Act's operative date deferred from January 2026 to August 2026, and the EU AI Act’s transparency obligations for synthetic content applying from August 2026, the technical ability to disclose synthetic origins is now a core compliance requirement for enterprise AI video. Enterprises that fail to embed these trust signals risk ad disapprovals, platform de-ranking, and significant regulatory penalties under emerging global frameworks.

Furthermore, as search evolves into agentic discovery, trust signals in video metadata are likely to influence which assets are surfaced by answer engines such as AI Overviews. By integrating the Coalition for Content Provenance and Authenticity (C2PA) standards, organizations can provide the cryptographic proof necessary to maintain authority in an era of rampant misinformation. This blueprint outlines the operational, legal, and technical steps required to master enterprise AI video provenance workflows while maximizing the ROI of personalized generative media.

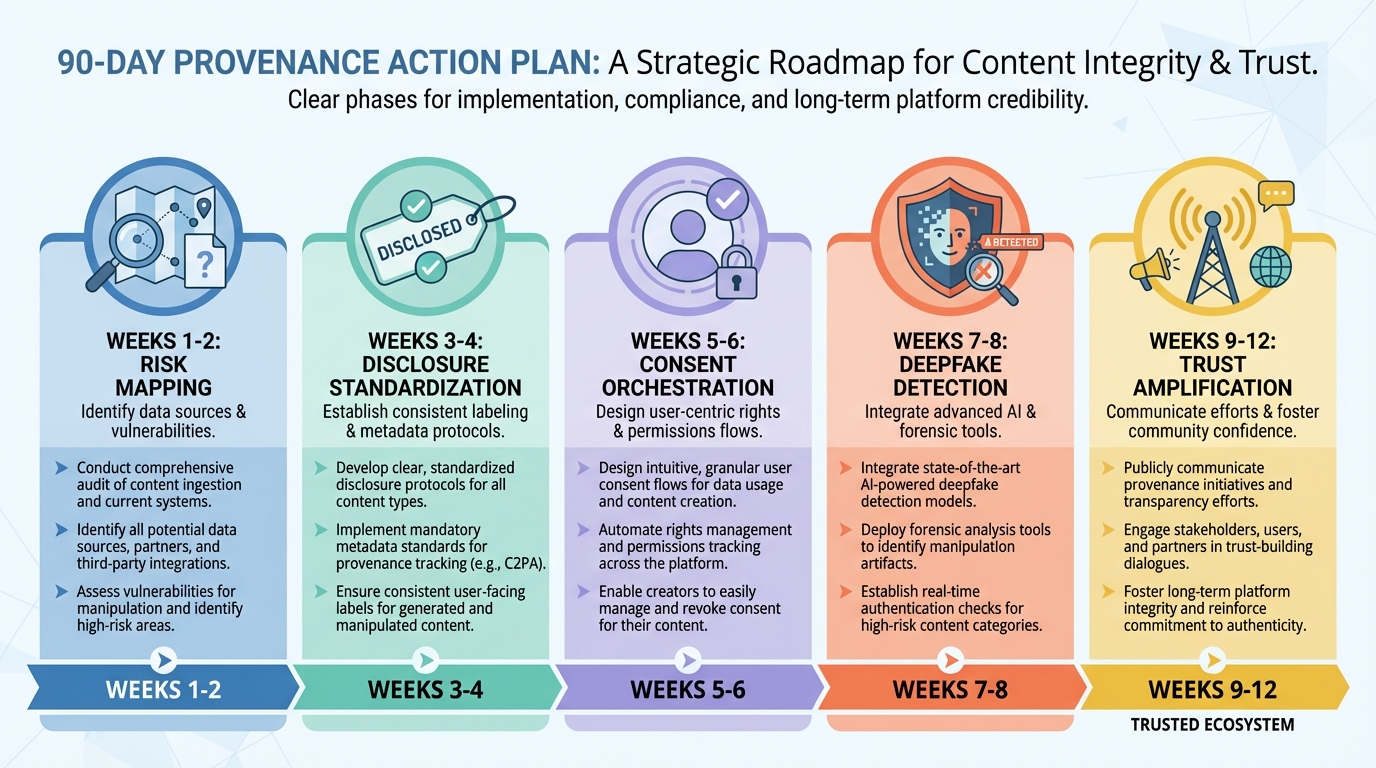

Executive Summary: The 90-Day Provenance Action Plan

For Legal, Compliance, and CISO teams, operationalizing AI transparency requires a multi-layered approach that combines cryptographic signing with human-readable disclosures. This action plan helps your organization meet high benchmarks for brand-safe AI watermarking and regulatory alignment.

- Week 1–2: Risk Mapping and Control Baseline. Adopt the C2PA 2.1 manifest specification and standardized digital watermark recovery protocols across all production pipelines, so that provenance can be recovered even when social media compression strips embedded metadata.

- Week 3–4: Disclosure Template Standardization. Institute human-visible “AI-generated” overlays aligned with India’s MeitY advisories and global platform UI requirements (YouTube/Meta/TikTok) to prevent automated content removal.

- Week 5–6: Consent Orchestration. Wire up consent-gated personalization flows that align with GDPR and the DPDP Act, ensuring every personalized render is backed by a verifiable consent timestamp.

- Week 7–8: Deepfake Detection Integration. Deploy inbound and outbound deepfake detection protocols into vendor SLAs and intake workflows to screen UGC and influencer assets before they enter the brand ecosystem.

- Week 9–12: Trust Amplification. Publish Content Credentials badges on all customer-facing assets and confirm that provenance details appear in each video's structured data, the trust signals that AI Overviews can draw on, to boost discoverability and consumer confidence.

The standards base: what C2PA watermarking AI video marketing enables

The foundation of modern digital trust rests on the C2PA standard, an open technical specification that enables the creation of cryptographically signed provenance manifests. These manifests act as a “nutrition label” for digital media, detailing what was captured, which AI tools were applied, and what edits were performed during the production cycle. By adopting C2PA watermarking for AI video marketing, enterprises can ensure their assets carry a tamper-evident history that travels with the file across the fragmented ad supply chain.
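To make the “nutrition label” idea concrete, the following is a minimal Python sketch of how a signed manifest can bind provenance assertions to an asset. It rests on heavy simplifying assumptions: real C2PA manifests are binary structures signed with certificate-backed keys, not JSON signed with an HMAC. The names `DEMO_KEY`, `build_manifest`, and `verify_manifest` are hypothetical and exist only for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical demo secret. A real signer would use a certificate-backed
# private key held in a key management system, not a shared secret.
DEMO_KEY = b"demo-signing-key"


def build_manifest(video_bytes: bytes, assertions: list[dict]) -> dict:
    """Bind assertions to the asset by hashing its bytes, then sign the claim."""
    claim = {
        "asset_sha256": hashlib.sha256(video_bytes).hexdigest(),
        "assertions": assertions,
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(video_bytes: bytes, manifest: dict) -> bool:
    """Return True only if the signature and the asset hash both still match."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    current_hash = hashlib.sha256(video_bytes).hexdigest()
    return manifest["claim"]["asset_sha256"] == current_hash


video = b"rendered video bytes"
manifest = build_manifest(
    video, [{"label": "ai_tool", "value": "lip-sync model (hypothetical)"}]
)
```

Changing either the video bytes or any assertion after signing makes `verify_manifest` return `False`, which is the sense in which the history is tamper-evident rather than impossible to alter.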

Content Credentials represent the user-facing manifestation of this technical standard, typically appearing as a small “cr” icon that viewers can click to verify an asset's origin. In 2026, this has transitioned from a niche technical feature to a primary trust signal for high-stakes marketing. Platforms like TrueFan AI enable enterprises to automate the embedding of these credentials at the point of render, so that every personalized video, whether it features a celebrity or a brand ambassador, is pre-verified for platform compliance.

The introduction of C2PA 2.1 has further strengthened this ecosystem by tightening how digital watermarks are used to recover Content Credentials. Unlike traditional metadata, which can be stripped during social media uploads or file conversions, digital watermarks are embedded directly into the pixels of the video. This “soft binding” carries an identifier that lets a verifier look up the matching C2PA manifest even after the video has been resized, compressed, or re-recorded, providing a resilient layer of brand-safe watermarking that makes unauthorized tampering easier to detect.
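The recovery path described above can be sketched as a fallback lookup. This is an illustration under stated assumptions, not the C2PA resolution protocol: watermark detection itself is out of scope, so `watermark_id` is assumed to come from a separate detector, and `MANIFEST_REPOSITORY` and `recover_manifest` are hypothetical names.

```python
from typing import Optional

# Hypothetical manifest repository: watermark payload -> stored manifest.
MANIFEST_REPOSITORY = {
    "wm-0001": {
        "asset_id": "festive-ad-0001",
        "generator": "hypothetical render service",
    },
}


def recover_manifest(
    embedded_manifest: Optional[dict], watermark_id: Optional[str]
) -> Optional[dict]:
    """Prefer the embedded manifest; fall back to a watermark lookup if stripped."""
    if embedded_manifest is not None:
        return embedded_manifest
    if watermark_id is None:
        # Neither metadata nor a readable watermark survived.
        return None
    return MANIFEST_REPOSITORY.get(watermark_id)
```

The design point is that the watermark only needs to carry a short identifier; the full manifest lives in a repository the brand or its vendor controls.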

From a marketing perspective, these standards reduce the friction of ad approvals on major social networks. TikTok and Meta now utilize automated systems to detect C2PA manifests; assets that lack proper labeling or provenance data are increasingly flagged as “manipulated media,” leading to reduced reach or outright removal. By leading with transparency, brands turn compliance into a competitive advantage, signaling to both algorithms and audiences that their content is authentic and authorized.

Research Sources:

- C2PA: Technical specifications and manifests

- Digimarc: C2PA 2.1 strengthening Content Credentials and digital watermarks

- Global Market Insights: AI watermarking market analysis (18.2% CAGR)

- Awakened Films: 2026 video marketing trends and Sora watermarking

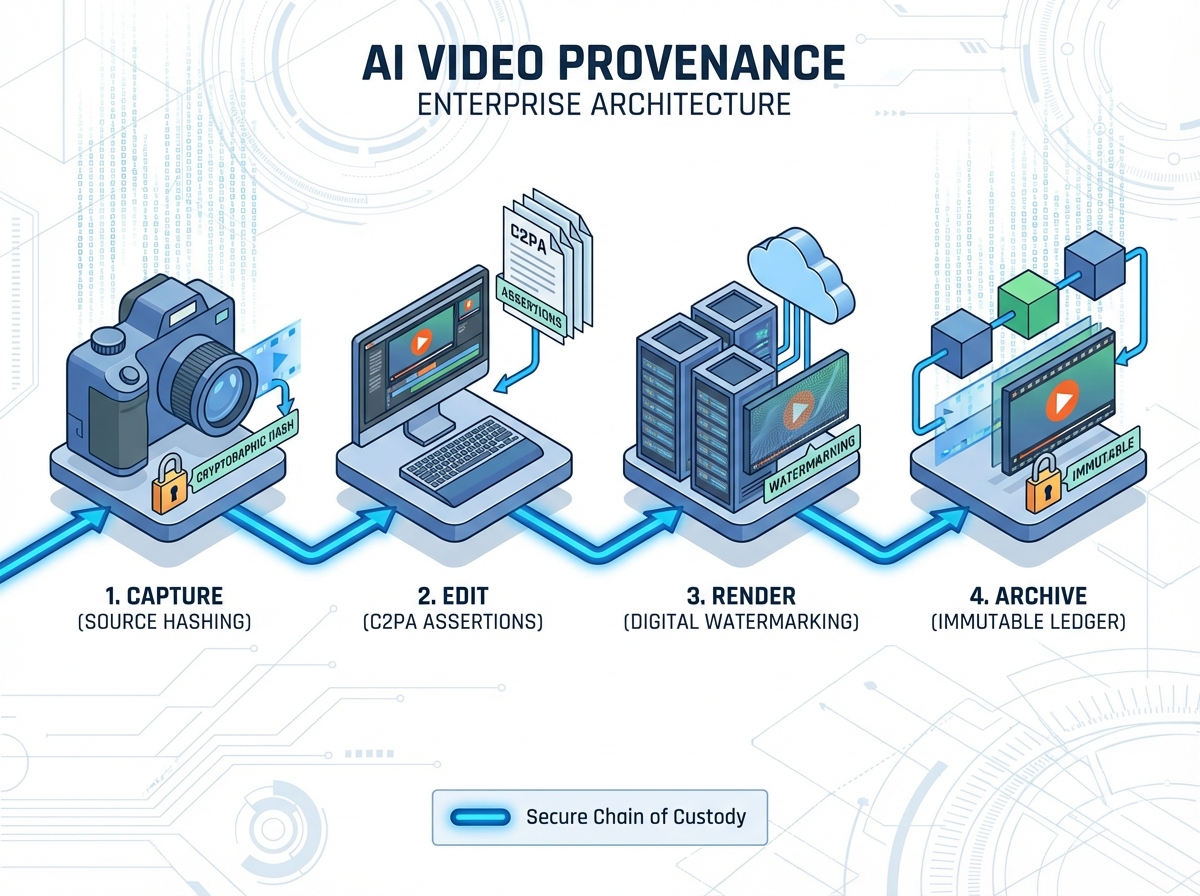

Building an AI video provenance enterprise architecture

An effective enterprise architecture for AI video provenance requires a shift from “post-production labeling” to “provenance-by-design.” This means integrating cryptographic signing at every node of the content lifecycle, from the initial capture of raw footage to the final distribution of millions of personalized variants. The goal is to create a chain of custody that is both machine-readable for platforms and human-verifiable for consumers.

The pipeline begins at the Capture stage, where source assets are hashed and registered with unique IDs. Any rights or talent consents associated with the footage are attached to these IDs as metadata assertions. During the Edit phase, non-destructive transformations—such as AI-driven lip-syncing or background replacement—are recorded as C2PA assertions. This ensures that the final manifest accurately reflects the “ingredients” of the video, distinguishing between human-captured elements and synthetic enhancements.
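A minimal sketch of the Capture and Edit stages might look like the following. The record layout is modeled only loosely on the C2PA actions assertion, and `register_source` and `record_edit` are hypothetical helper names. The IPTC digital source type URI is a published vocabulary term; whether it is the right term for a given edit is a judgment call for your compliance team.

```python
import hashlib
import uuid

# IPTC digital source type used to mark media created by a trained model.
TRAINED_ALGORITHMIC_MEDIA = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def register_source(asset_bytes: bytes, consent_ref: str) -> dict:
    """Capture stage: hash the source asset and attach a rights/consent reference."""
    return {
        "asset_id": str(uuid.uuid4()),
        "sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "consent_ref": consent_ref,
        "actions": [],
    }


def record_edit(record: dict, action: str, tool: str, synthetic: bool) -> dict:
    """Edit stage: append one transformation, flagging synthetic steps."""
    entry = {"action": action, "softwareAgent": tool}
    if synthetic:
        entry["digitalSourceType"] = TRAINED_ALGORITHMIC_MEDIA
    record["actions"].append(entry)
    return record


record = register_source(b"raw footage bytes", consent_ref="talent-consent-123")
record_edit(record, "c2pa.edited", "hypothetical lip-sync tool", synthetic=True)
record_edit(record, "c2pa.edited", "hypothetical color grading tool", synthetic=False)
```

Keeping human-made and synthetic steps in the same ordered list is what lets the final manifest distinguish captured elements from AI enhancements.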

At the Render stage, the architecture must handle the scale of hyper-personalization. For an enterprise generating 500,000 unique videos for a festive campaign, the system must embed a signed C2PA manifest and a robust invisible watermark into every single output as it is rendered. This requires a high-performance signing service and a secure key management policy to prevent the unauthorized use of the brand’s digital signature. Organizations should look to conformant enterprise video platforms that have already integrated these audit flows into their UI/UX.
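A quick capacity estimate shows what that scale implies for the signing service. The delivery window and the per-worker signing rate below are purely illustrative assumptions, and `required_signers` is a hypothetical helper.

```python
import math


def required_signers(
    total_videos: int, window_hours: float, signs_per_second_per_worker: float
) -> int:
    """Number of signing workers needed to sign every render within the window."""
    required_rate = total_videos / (window_hours * 3600)  # signatures per second
    return math.ceil(required_rate / signs_per_second_per_worker)


# 500,000 renders in a 24-hour window, assuming 2 signatures/second per worker.
workers_for_a_day = required_signers(500_000, 24, 2.0)   # -> 3
# The same volume squeezed into a 1-hour launch window.
workers_for_an_hour = required_signers(500_000, 1, 2.0)  # -> 70
```

At 24 hours the load is roughly 5.8 signatures per second, which a handful of workers can absorb; compressing the same campaign into one hour pushes it to about 139 per second, which is where key management and signer autoscaling start to matter.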

Finally, the Archive and Publish stages ensure long-term resilience. Every manifest, along with its associated verification snapshots and key custody logs, should be stored in an immutable ledger. When the video is published, the page's structured data should include schema.org VideoObject properties that describe or link to the provenance record. This architecture not only satisfies legal requirements but also prepares the brand for the future of answer engine optimization (AEO), where search engines are expected to prioritize content with verifiable origins.
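One common way to approximate an immutable ledger is a hash chain, in which each entry commits to the one before it. The sketch below is an assumption about how such an archive could work, not a description of any particular product; `append_entry` and `verify_ledger` are hypothetical names, and a production system would also need write-once storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


def _entry_hash(prev_hash: str, event: dict) -> str:
    body = json.dumps({"prev_hash": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_entry(ledger: list[dict], event: dict) -> dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = ledger[-1]["entry_hash"] if ledger else GENESIS_HASH
    entry = {
        "prev_hash": prev_hash,
        "event": event,
        "entry_hash": _entry_hash(prev_hash, event),
    }
    ledger.append(entry)
    return entry


def verify_ledger(ledger: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Strictly speaking this makes the archive tamper-evident rather than immutable: an edit is still possible, but it is detectable the next time the chain is verified.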

Research Sources:

- Vbrick: First enterprise video platform to achieve C2PA conformance

- Arc InterMedia: Strategic frameworks for content resilience in 2026

- C2PA Specification 2.1

- TBRC: AI model watermarking market report

Synthetic media disclosure India: legal and platform requirements

In India, the regulatory landscape for AI-generated content has tightened significantly following the MeitY advisories of late 2023 and March 2024. These directives call on intermediaries and content creators to appropriately label synthetic media or deepfakes to prevent the spread of misinformation. For enterprises, this means that any synthetic media disclosure strategy for India must include prominent, persistent, and clear on-screen labels, especially when the content involves public figures or sensitive topics like health and finance.

The Intermediary Guidelines, and the advisories issued under them, place the onus primarily on platforms to identify and label AI content and to report on the action they have taken. An intermediary that fails to comply can lose its “safe harbor” protection, which gives platforms a strong incentive to remove unlabeled synthetic media and leaves the brand behind it exposed to takedowns and to liability for any resulting harm. This is particularly critical in the context of India's diverse linguistic landscape, where disclosures should be localized so that they are understood by the target audience across the 22 scheduled languages.

A practical India playbook for ad platform compliance involves a three-tier disclosure system for synthetic content. First, a visible “AI-generated” or “Synthetic Media” overlay must appear within the first five seconds of the video and remain visible if the content is realistic enough to be mistaken for reality. Second, the C2PA Content Credentials must be embedded in the metadata to provide a technical audit trail. Third, brands must retain evidence of these disclosures, including manifest JSONs and upload receipts, to satisfy potential regulatory inquiries or audits.
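The three tiers above can be expressed as a simple pre-flight check. This is a sketch of the playbook as described in this article, not a statement of what any regulator requires; `DisclosureRecord` and `disclosure_gaps` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class DisclosureRecord:
    overlay_start_seconds: float      # when the visible label first appears
    overlay_persistent: bool          # label stays on screen for the whole video
    has_content_credentials: bool     # C2PA manifest embedded in the file
    evidence_files: tuple[str, ...]   # e.g. manifest JSON, upload receipt


def disclosure_gaps(record: DisclosureRecord, realistic: bool = True) -> list[str]:
    """Return human-readable gaps against the three-tier disclosure playbook."""
    gaps = []
    if record.overlay_start_seconds > 5.0:
        gaps.append("overlay appears after the first five seconds")
    if realistic and not record.overlay_persistent:
        gaps.append("realistic content needs a persistent overlay")
    if not record.has_content_credentials:
        gaps.append("missing embedded Content Credentials")
    if not record.evidence_files:
        gaps.append("no retained evidence (manifest JSON, upload receipt)")
    return gaps
```

An empty list means the asset passes all three tiers; anything else can block publishing until the gap is closed.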

Moreover, the ongoing debate within India's media policy community suggests that even stricter rules for deepfake detection and labeling are on the horizon for 2026. Enterprises should adopt a “maximum disclosure” posture, ensuring that their celebrity avatar watermarking policies align with the most conservative interpretations of the law. By proactively labeling all AI-modified scenes, brands can mitigate the risk of being caught in the crosshairs of evolving enforcement actions by MeitY or the Central Consumer Protection Authority (CCPA).

Research Sources:

- MeitY Advisory on Deepfakes and AI Labeling (2024)

- The Dialogue: Comparative analysis of MeitY AI advisory

- S&R Associates: AI-related Advisories under Intermediary Guidelines

- MediaNama: Deepfake rules context and readings (2025)

GDPR video personalization alignment + DPDP Act consent management videos

Personalized video marketing at scale introduces complex data privacy challenges, particularly under the European Union’s GDPR and India’s Digital Personal Data Protection (DPDP) Act of 2023. Aligning video personalization with GDPR requires a “privacy-by-design” approach where the lawful basis for processing, typically consent for named personalization, is built into the rendering workflow. Enterprises must ensure that the data used to personalize a video (such as a customer's name, city, or purchase history) is processed with strict minimization and retention controls.

Under the DPDP Act, whose implementing rules were notified in November 2025 with a phased commencement schedule, the consent requirements for personalized video are even more prescriptive. Consent must be free, specific, informed, unconditional, and unambiguous, and given through a clear affirmative action. This means that before a personalized video is generated, the user must be presented with a clear notice, available in multiple languages, explaining exactly how their data will be used to create the synthetic media. The system must also provide an easy mechanism for users to withdraw their consent, which should stop further processing and trigger deletion of the personalized asset unless the law requires it to be retained.

Data protection impact assessments (DPIAs) are required under GDPR when processing is likely to result in a high risk to individuals, and under the DPDP Act for Significant Data Fiduciaries. Large-scale video personalization campaigns often meet that bar because they can involve “extensive profiling” or “automated decision-making” that could significantly affect the individual. These assessments should document the risks of misidentification, the security of the biometric data (if used for face-swapping), and the measures taken to prevent the unauthorized repurposing of the personalized renders. Organizations must also maintain a Record of Processing Activities (ROPA) that includes the source of the data and the duration of its storage.

To streamline this, enterprises should implement a centralized consent orchestration layer. This layer captures consent timestamps, notice versions, and withdrawal events, linking them directly to the asset’s C2PA manifest. By doing so, the brand can provide a complete “privacy provenance” for every video, proving to regulators that the personalization was not only transparently labeled but also lawfully generated. This integrated approach reduces exposure to non-compliance penalties, which can reach EUR 20 million or 4% of global annual turnover under GDPR and up to INR 250 crore under the DPDP Act.
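A consent orchestration layer of this kind could gate each render as in the sketch below. The `ConsentRecord` shape, the `consent_assertion` helper, and the `com.example.consent` label are all hypothetical; the sketch assumes the manifest carries only an opaque consent reference, never the customer's personal data.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    consent_ref: str                 # opaque reference, not the customer's identity
    notice_version: str
    granted_at: datetime
    withdrawn_at: Optional[datetime] = None

    def is_active(self, at: datetime) -> bool:
        """Consent covers a moment only after grant and before any withdrawal."""
        if at < self.granted_at:
            return False
        return self.withdrawn_at is None or at < self.withdrawn_at


def consent_assertion(record: ConsentRecord, render_time: datetime) -> dict:
    """Refuse to render without active consent; otherwise emit a manifest assertion."""
    if not record.is_active(render_time):
        raise PermissionError("no active consent for this render")
    return {
        "label": "com.example.consent",  # hypothetical custom assertion label
        "consent_ref": record.consent_ref,
        "notice_version": record.notice_version,
        "granted_at": record.granted_at.isoformat(),
    }


granted = datetime(2026, 1, 10, 9, 0, tzinfo=timezone.utc)
consent = ConsentRecord("consent-001", "notice-v3", granted)
assertion = consent_assertion(consent, datetime(2026, 1, 11, tzinfo=timezone.utc))
```

Raising an error rather than returning an empty assertion makes the failure mode explicit: a render without consent never reaches the signing step.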

Research Sources:

- EDPB: Guidelines on video devices and GDPR

- Gov. of India: Digital Personal Data Protection (DPDP) Act 2023—full text

- PRS India: Summary of DPDP Act provisions

- IAPP: Decoding India’s draft DPDP rules (2025)

Celebrity avatar watermarking policies (rights and disclosures)

The use of celebrity likenesses in AI-driven marketing requires a sophisticated governance framework to manage rights, ethics, and disclosures. Celebrity avatar watermarking policies must be established to ensure that any synthetic reanimation of a talent’s face or voice is clearly identified as such. This is not only a legal necessity to protect the talent's “right of publicity” but also a critical brand safety measure to prevent the audience from feeling deceived by a “digital puppet.”

TrueFan AI's 175+ language support and Personalised Celebrity Videos exemplify how enterprises can navigate this complexity by using a consent-first model. Every celebrity avatar on the platform is backed by a formal contract that specifies the scope of AI usage, the permitted geographies, and the mandatory disclosure requirements. This ensures that when a star like Kareena Kapoor Khan or MS Dhoni “speaks” to a customer in their native tongue, the technology is used within ethically and legally defined boundaries.

A robust policy should mandate that any video featuring a synthetic likeness must carry a persistent on-screen disclaimer, such as “AI-generated likeness.” Additionally, the C2PA manifest should include an assertion that specifically identifies the use of a synthetic avatar and the tools used for the reanimation. This level of transparency helps in maintaining the “human-in-the-loop” oversight that regulators and talent agents increasingly demand. It also protects the brand from “deepfake backlash,” where audiences react negatively to perceived trickery.

Furthermore, enterprises must establish clear “takedown SOPs” for celebrity avatars. If a talent revokes their consent or if a contract expires, the organization must have the technical capability to revoke the digital signatures associated with those assets and remove them from distribution channels. By integrating rights management directly into the provenance architecture, brands can ensure that their use of high-value celebrity assets remains compliant, respectful, and highly effective for driving engagement.
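The first step of such a takedown SOP is knowing which live assets have lost their rights basis. The sketch below shows one way to compute that list; `TalentLicense` and `assets_to_take_down` are hypothetical names, and the actual removal from ad platforms and credential revocation would be separate, platform-specific steps.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TalentLicense:
    talent: str
    expires_on: date
    revoked: bool = False


def assets_to_take_down(
    assets: dict[str, str], licenses: dict[str, TalentLicense], today: date
) -> list[str]:
    """Return asset IDs whose talent license is missing, revoked, or expired.

    `assets` maps asset_id -> talent identifier.
    """
    flagged = []
    for asset_id, talent in assets.items():
        license_ = licenses.get(talent)
        if license_ is None or license_.revoked or license_.expires_on < today:
            flagged.append(asset_id)
    return sorted(flagged)
```

Treating a missing license the same as a revoked one is a deliberately conservative choice: an asset with no recorded rights should not stay in distribution.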

Research Sources:

- Adobe + TikTok: Advancing trust and transparency with Content Credentials

- Hiebing: Marketer’s guide to AI labels on social media

- Reuters Institute: Journalism, media & technology trends 2026

- AICerts: Why AI marketing content requires clear labels

Ad platform compliance synthetic content (YouTube, Meta, TikTok)

Navigating the specific requirements of major ad platforms is a daily operational challenge for modern marketing teams. Each platform has developed its own set of compliance rules for synthetic content, and staying ahead of these changes is vital for maintaining campaign momentum. YouTube, for instance, requires a mandatory disclosure for any “realistic” AI-generated content, with even stricter prominence rules for ads related to elections, health, or crisis response. Failure to check the “altered or synthetic” toggle can lead to the removal of the video and potential account suspension.

Meta (Facebook and Instagram) has implemented “AI info” overlays that are automatically triggered when their systems detect C2PA metadata or invisible watermarks. However, brands should not rely solely on auto-detection; they should proactively apply their own labels to ensure consistency across all placements, including Reels and Stories. Meta’s policy specifically targets “deceptive” media, so ensuring that the AI’s role is clear in the creative itself is the best way to avoid being flagged by their integrity systems.

TikTok has been a pioneer in adopting C2PA standards, auto-detecting and labeling AI content to provide transparency to its younger, tech-savvy audience. Their systems are highly sensitive to invisible watermarking, and they have been known to reduce the reach of unlabeled synthetic content that appears to be “organic.” For enterprises, this means that every asset uploaded to TikTok must have its provenance data intact. Solutions like TrueFan AI demonstrate ROI through higher ad approval rates and better engagement by ensuring that all outputs are pre-configured for these platform-specific labeling requirements.

To manage this across a multi-channel strategy, enterprises should maintain an “Evidence Pack” for every ad flight. This pack should contain screenshots of the disclosure screens, a copy of the C2PA manifest, and the platform’s receipt of the AI disclosure. This documentation is invaluable during audits or if an ad is incorrectly flagged by an automated moderation system. By treating platform compliance as a data-driven process rather than a creative afterthought, brands can scale their AI video efforts with confidence.
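An Evidence Pack is easier to defend in an audit if it records a digest of each artifact at the time of filing. The following sketch assumes the artifacts are already available as bytes; `build_evidence_pack` and the file names in the usage example are hypothetical.

```python
import hashlib
import json


def build_evidence_pack(ad_flight_id: str, artifacts: dict[str, bytes]) -> str:
    """Return a JSON index listing a SHA-256 digest for every artifact."""
    index = {
        name: hashlib.sha256(data).hexdigest()
        for name, data in sorted(artifacts.items())
    }
    pack = {"ad_flight_id": ad_flight_id, "artifacts": index}
    return json.dumps(pack, indent=2, sort_keys=True)


pack_json = build_evidence_pack(
    "flight-2026-03",
    {
        "disclosure_screenshot.png": b"<png bytes>",
        "manifest.json": b'{"claim": "..."}',
        "platform_receipt.txt": b"AI disclosure acknowledged",
    },
)
```

The index can then be stored alongside the files themselves; if an ad is later disputed, matching digests show that the evidence has not changed since the flight went live.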

Research Sources:

- YouTube: Policy on disclosing AI-generated content

- Meta: Labeling AI-generated and manipulated media

- Virvid: AI video ads complete guide (2026)

- DAM News: Provenance and industry round-up (Feb 23, 2026)

Conclusion: Turning Provenance into a Competitive Advantage

The era of “stealth AI” in marketing has ended, replaced by a new standard of cryptographic transparency and consumer-first disclosure. For the enterprise, C2PA watermarking in AI video marketing is not merely a legal hurdle but a strategic opportunity to build deeper, more resilient relationships with audiences. By adopting a robust provenance architecture, organizations can protect their brand equity, ensure seamless platform distribution, and compete strongly in the emerging landscape of AI-driven discovery.

As we move through 2026, the brands that thrive will be those that treat trust as a feature, not a footnote. Integrating C2PA manifests, mastering the nuances of the DPDP Act, and maintaining rigorous celebrity avatar policies are the hallmarks of a mature, future-ready marketing organization. The tools and standards are now in place to make generative AI both infinitely scalable and undeniably authentic.

Ready to secure your AI video pipeline? See how TrueFan AI bakes C2PA Content Credentials, invisible watermarking, and compliant disclosures into enterprise-scale, personalized video workflows—book a provenance and disclosure readiness workshop to produce your 90-day rollout plan today.

Frequently Asked Questions

What exactly is C2PA watermarking in AI video marketing?

It is the practice of using the Coalition for Content Provenance and Authenticity (C2PA) standards to embed cryptographic metadata (manifests) and digital watermarks into AI-generated videos. This ensures that the origin, editing history, and AI tools used are verifiable by platforms and consumers, fostering trust and ensuring regulatory compliance.

How does the DPDP Act affect personalized video campaigns in India?

The DPDP Act requires that any personal data used for video personalization (like a user's name) must be processed based on clear, informed, and withdrawable consent. Enterprises must provide a notice in multiple languages and maintain a verifiable audit trail of consent for every personalized render.

Will labeling my AI videos as “synthetic” hurt my engagement rates?

Not necessarily. The 2026 trend reports cited in this guide frame authenticity as “the new currency,” arguing that consumers are more likely to trust and engage with brands that are transparent about their use of AI. Furthermore, platforms like TikTok and YouTube may penalize or remove unlabeled AI content, making disclosure a prerequisite for reach.

Can C2PA manifests be removed from my videos?

While metadata can sometimes be stripped during social media processing, the C2PA 2.1 standard supports “soft binding” through invisible digital watermarks. This allows the manifest to be looked up and recovered even if the file is compressed or re-saved, providing a resilient layer of protection for your brand's assets.

How can platforms like TrueFan AI help with enterprise compliance?

TrueFan AI provides an enterprise-grade pipeline that automatically embeds C2PA manifests, invisible watermarks, and localized on-screen disclosures into every video. With ISO 27001 and SOC 2 certifications, it ensures that your hyper-personalized campaigns are both innovative and fully aligned with global privacy and transparency standards.

What are AI Overviews trust signals for video, and why do they matter?

As search engines shift to AI-generated summaries, a change usually addressed under the label of answer engine optimization (AEO), they are expected to favor content that carries strong trust signals. By including C2PA provenance data and schema.org VideoObject metadata, you make your videos more likely to be recognized as authoritative and surfaced in AI-generated search results.
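As an illustration, VideoObject markup with a pointer to a provenance page could be generated as follows. The properties used are standard schema.org terms, but using `usageInfo` to link to a provenance record is an assumption made for this sketch, and nothing here confirms how any search engine weighs it. The helper name and all URLs are hypothetical placeholders.

```python
import json


def video_object_jsonld(
    name: str,
    description: str,
    upload_date: str,
    content_url: str,
    thumbnail_url: str,
    provenance_url: str,
) -> str:
    """Build schema.org VideoObject JSON-LD with a link to the provenance record."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "uploadDate": upload_date,
        "contentUrl": content_url,
        "thumbnailUrl": thumbnail_url,
        # Assumption: usageInfo is repurposed here to point at provenance details.
        "usageInfo": provenance_url,
    }
    return json.dumps(data, indent=2)


jsonld = video_object_jsonld(
    name="Festive greeting (AI-generated likeness)",
    description="Personalized greeting created with AI; Content Credentials attached.",
    upload_date="2026-03-01",
    content_url="https://example.com/videos/greeting.mp4",
    thumbnail_url="https://example.com/videos/greeting.jpg",
    provenance_url="https://example.com/provenance/greeting",
)
```

The resulting string would be placed in a `script` tag of type `application/ld+json` on the page that hosts the video.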