Voice Commerce Vernacular India 2026: An Enterprise Blueprint for Multilingual Conversational Shopping at Scale

Key Takeaways

- India’s next 500M users will be voice-first and vernacular, demanding code-mixed, regional language support at scale

- An enterprise-grade stack pairs ASR, NLU, TTS with orchestration across PIM/OMS, payments, and compliance

- Multimodal flows linking smart speakers, WhatsApp, and IVR remove friction and expand access

- Voice SEO in regional languages and dialect-tuned content unlock discovery and conversions

- Personalized, voice-triggered video offers drive ROI with measurable lift in CTR, AOV, and COD-to-UPI migration

The landscape of digital retail is undergoing a seismic shift as vernacular voice commerce becomes the primary interface for India's next half-billion users. For enterprise architects and CTOs, the transition from touch-based navigation to natural language commerce in India represents more than a UI update; it is a fundamental re-engineering of the customer journey. By 2026, the ability to process complex, code-mixed queries in regional dialects will distinguish market leaders from those tethered to legacy English-centric models.

As India’s internet user base scales toward one billion, the reliance on Indic languages has reached a tipping point. Implementing voice commerce in India in 2026 requires a sophisticated blend of automated speech recognition (ASR), neural text-to-speech (TTS), and localized persuasion layers. This blueprint provides the technical and strategic framework necessary to capture the 67% of e-commerce orders now originating from Tier-2 and Tier-3 cities, where voice is not just a preference but a necessity for digital inclusion.

1. The State of Natural Language Commerce India: A 2026 Market Snapshot

The evolution of natural language commerce in India is driven by a demographic that prioritizes speed and linguistic comfort over traditional text entry. In 2026, the digital ecosystem is defined by a “voice-first, vernacular-always” reality: 98% of the 886 million internet users reported for 2024 actively consume content in Indic languages. This shift has necessitated a move away from simple keyword matching toward deep semantic understanding of regional dialects and intent.

Projections placed India’s internet user base above 900 million by 2025, with growth almost exclusively powered by non-English speakers. This surge has turned voice commerce in India into a multi-billion-dollar opportunity, particularly as Tier-2 and Tier-3 regions now account for 67% of total e-commerce order volumes. For these users, typing in complex Indic scripts is a friction point that voice-activated shopping removes.

The intent taxonomy in 2026 has become highly specialized across categories. In the grocery sector, users utilize voice for rapid-fire list building and brand substitutions, often using colloquial terms for weights and measures. In beauty and electronics, the queries are more exploratory, involving shade matching or specification comparisons spoken in a mix of English and regional languages. This “Hinglish” or “Tanglish” phenomenon requires NLU models that can handle fluid code-switching without losing the transactional context.

Furthermore, the channel mix has matured. While mobile voice inputs via Android and WhatsApp remain the dominant entry points, smart speakers have gained significant traction in urban and semi-urban households for reordering and tracking. Enterprises must now orchestrate a unified experience that spans these devices, ensuring that a voice search initiated on a smart speaker can be seamlessly fulfilled via a WhatsApp confirmation or a voice-triggered video offer.

Sources:

- IAMAI-Kantar Report 2024

- IBEF: India’s Internet Users 2025

- ML Conference: Rise of Voice Commerce

- India Digital Advertising: 2026 Trends

2. Architectural Blueprint for Vernacular Voice Shopping Optimization

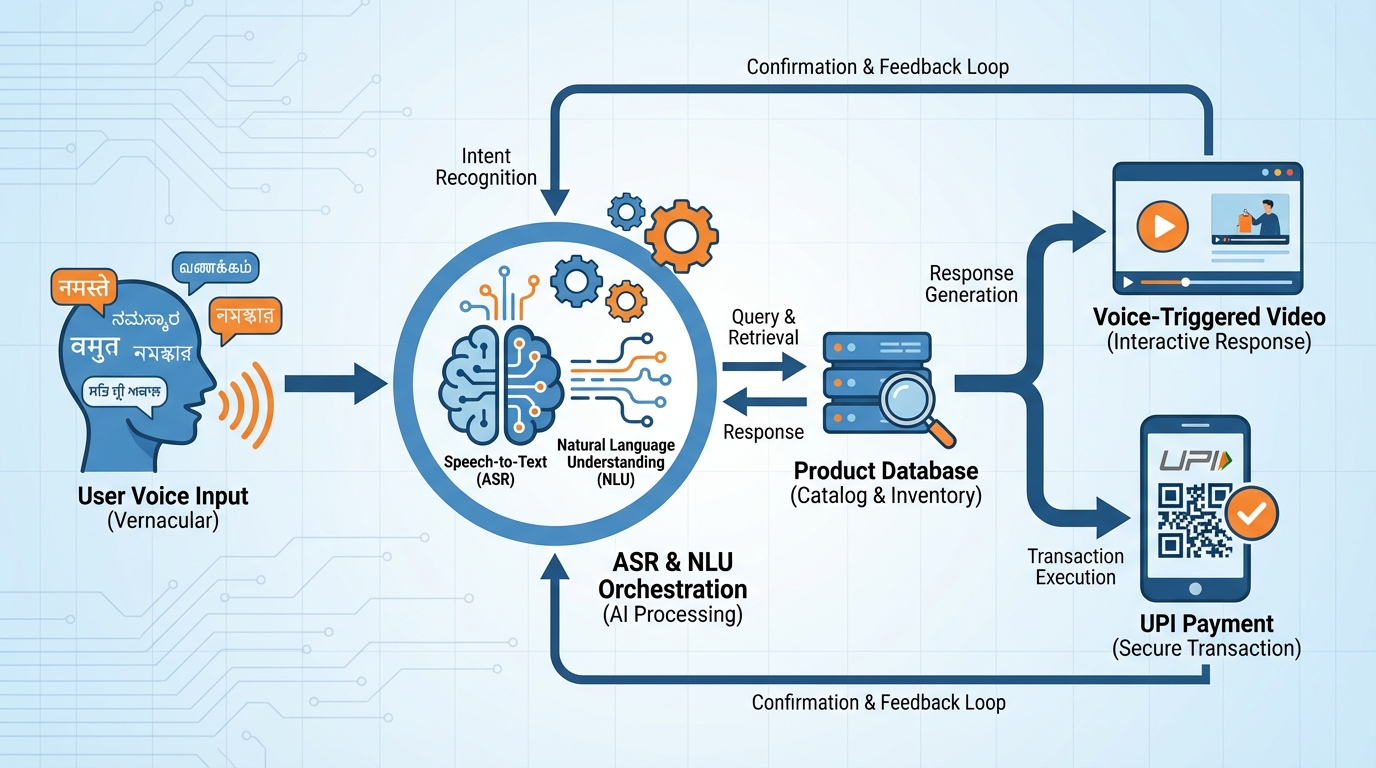

Achieving vernacular voice shopping optimization at an enterprise level requires a multi-layered architecture that prioritizes low latency and high linguistic precision. The foundation of this stack is the Capture Layer, which must handle diverse audio environments—from noisy Tier-3 marketplaces to quiet households—using bandwidth-aware audio processing. This ensures that the raw voice data is clean enough for the subsequent ASR engines to process with minimal Word Error Rates (WER).
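
Word Error Rate is the metric the Capture Layer is ultimately judged by, so it helps to see how it is computed. The sketch below uses the standard definition (word-level edit distance divided by the number of reference words); the sample transcripts are invented for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] holds the edit distance between the processed part of ref and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[-1] / len(ref)
```

Tracking this number per language and per acoustic environment (street market versus household) shows where noise handling needs the most work.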

The core of the system lies in the ASR and NLU orchestration. Enterprises are increasingly leveraging the Bhashini infrastructure, which supports ASR and NLU across 22 scheduled Indian languages. By integrating Bhashini’s pipelines, brands can handle complex dialect variants and code-mixing that standard global models often miss. This layer must be supplemented with custom lexicons containing brand-specific SKUs and phonetic variations of product names to ensure that “Santoor” isn’t misidentified as a musical instrument when a user is shopping for soap.
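
A custom lexicon can be as simple as a lookup from spoken variants to a canonical brand and SKU, applied to the ASR transcript before intent classification. The following is a minimal sketch; the lexicon entries, SKU codes, and function name are hypothetical and are not part of Bhashini or any vendor API.

```python
# Hypothetical lexicon: spoken or romanized variants -> (canonical brand, SKU code).
BRAND_LEXICON = {
    "santoor": ("Santoor", "SOAP-SANTOOR-100G"),
    "santur": ("Santoor", "SOAP-SANTOOR-100G"),
    "संतूर": ("Santoor", "SOAP-SANTOOR-100G"),
}


def resolve_brand_mentions(transcript, lexicon=BRAND_LEXICON):
    """Return every lexicon hit found in a whitespace-tokenized ASR transcript."""
    matches = []
    for raw in transcript.split():
        # Strip trailing punctuation the ASR engine may emit, then normalize case.
        token = raw.strip(".,!?\"'").lower()
        if token in lexicon:
            brand, sku = lexicon[token]
            matches.append({"heard": token, "brand": brand, "sku": sku})
    return matches
```

Because the lookup runs inside a shopping session, a hit on "santur" resolves to the soap SKU rather than being left to a general-purpose model that may favor the instrument.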

Once the intent is classified and slots (like quantity, brand, and delivery time) are filled, the Orchestration Layer takes over. This engine interacts with headless PIM (Product Information Management) and OMS (Order Management System) via APIs to check real-time inventory and apply region-specific pricing. A critical component here is the integration of voice-triggered video offers. When a user expresses intent via voice, the system can instantly generate a personalized video nudge that confirms the selection and offers a relevant upsell, bridging the gap between voice search and visual confirmation.
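
The hand-off from filled slots to the Orchestration Layer might look like the sketch below. The dictionaries stand in for PIM and OMS API responses, and every SKU, region code, and price is an invented placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderIntent:
    """Slots filled by the NLU layer."""
    sku: str
    quantity: int
    region: str


# Hypothetical stand-ins for PIM (regional pricing) and OMS (stock) lookups.
REGIONAL_PRICES = {("ATTA-5KG", "UP"): 245.0, ("ATTA-5KG", "TN"): 259.0}
STOCK = {("ATTA-5KG", "UP"): 12, ("ATTA-5KG", "TN"): 0}


def plan_order(intent):
    """Decide the next conversational step from price and stock data."""
    key = (intent.sku, intent.region)
    price = REGIONAL_PRICES.get(key)
    if price is None:
        return {"status": "unknown_sku"}
    available = STOCK.get(key, 0)
    if available < intent.quantity:
        return {"status": "out_of_stock", "available": available}
    return {
        "status": "confirm",
        "unit_price": price,
        "total": price * intent.quantity,
    }
```

A "confirm" result is the point at which a voice-triggered video offer could be attached; an "out_of_stock" result would route to a substitution prompt instead.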

The final mile of the architecture is the Payment and Compliance Layer. With the launch of NPCI’s Hello! UPI, conversational voice payments have become a reality. The architecture must facilitate a secure hand-off from the shopping flow to the UPI voice authorization, providing language-specific fraud prevention prompts. Throughout this process, conversational AI personalization ensures that the tone, speed, and vocabulary of the interaction remain consistent with the user’s profile, while DPDP (Digital Personal Data Protection) guardrails manage consent and data retention in a transparent, multilingual format.

Sources:

- PIB: Bhashini and Indic AI Infrastructure

- Bhashini Research Portal

- ET Government: NPCI Hello! UPI Launch

- TrueFan AI: Voice Commerce Blueprint

3. Multimodal Entry: Smart Speaker Integration India and Mobile Voice

The 2026 consumer journey is rarely linear, so smart speaker integration in India must sit alongside mobile-first voice entry points. Smart speakers powered by assistants such as Alexa and Google Assistant have evolved into household commerce hubs, particularly for routine reorders. To capitalize on this, enterprises must develop “skills” or “actions” in Hindi, Tamil, and Bengali that allow for persistent permissions, enabling users to say, “Alexa, mera mahine ka rashan order kar do” (Alexa, order my monthly groceries) with minimal friction.

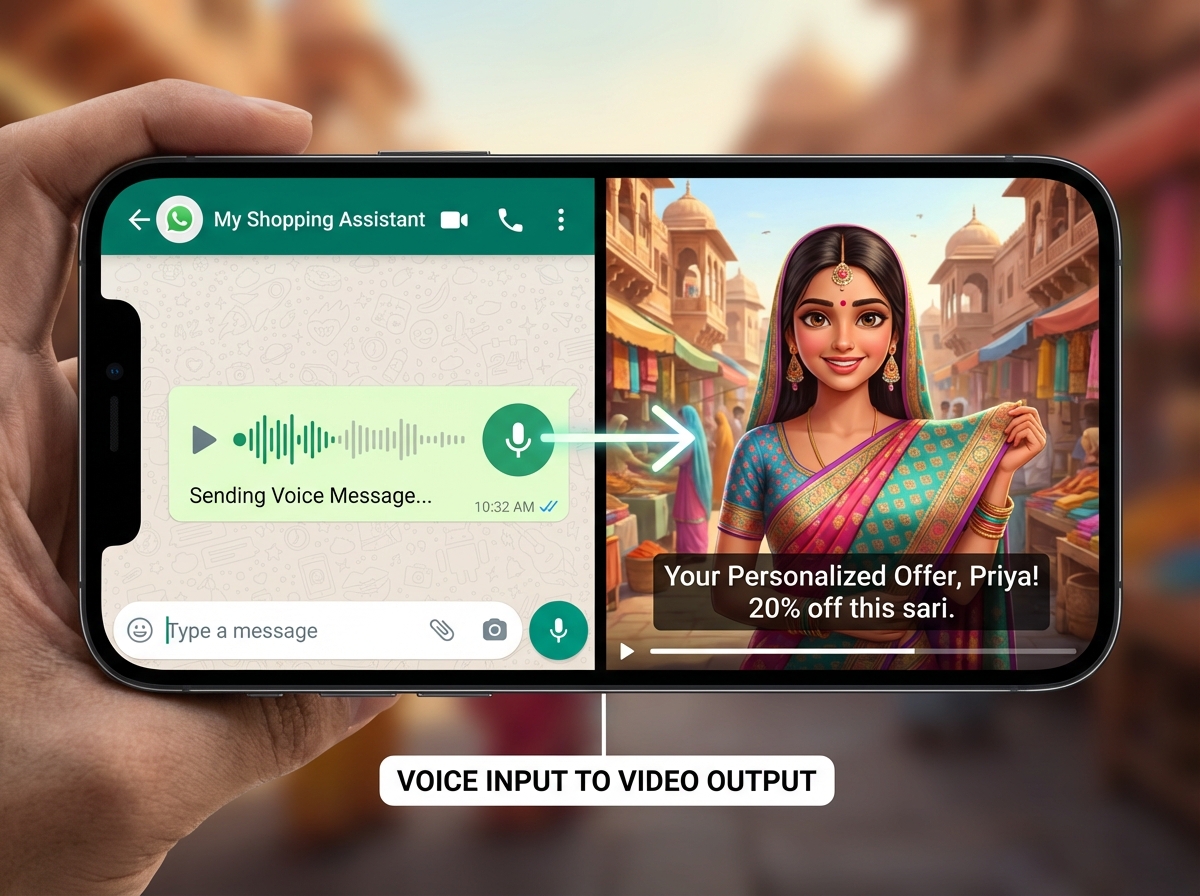

Mobile-centric voice, however, remains the primary driver of voice adoption in Tier-2 markets. The ubiquity of WhatsApp in India has made voice notes a preferred method of communication. Leading enterprises are now integrating WhatsApp voice-to-commerce flows where a user can send a voice note describing their needs. This audio is processed through ASR, converted into a structured cart, and sent back to the user as a checkout link. This multimodal approach ensures that users who are uncomfortable with complex app interfaces can still participate in the digital economy.
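
The transcript-to-cart step of that flow can be illustrated with a small rule-based parser. This is a sketch under simplifying assumptions: the transcript is already romanized Hindi text, the number words, units, and catalog are tiny invented tables, and the checkout URL is a placeholder. A production system would use an NLU model rather than keyword lookup.

```python
from urllib.parse import urlencode

# Hypothetical lookup tables for illustration only.
NUMBER_WORDS = {"ek": 1, "do": 2, "teen": 3, "char": 4, "paanch": 5}
UNITS = {"kilo": "kg", "kg": "kg", "litre": "l", "packet": "pack"}
CATALOG = {"atta": "ATTA", "doodh": "MILK", "chawal": "RICE"}


def transcript_to_cart(transcript):
    """Collect quantity and unit tokens until a known product word closes the item."""
    cart = []
    qty, unit = 1, None
    for token in transcript.lower().split():
        if token in NUMBER_WORDS:
            qty = NUMBER_WORDS[token]
        elif token.isdigit():
            qty = int(token)
        elif token in UNITS:
            unit = UNITS[token]
        elif token in CATALOG:
            cart.append({"sku": CATALOG[token], "qty": qty, "unit": unit})
            qty, unit = 1, None  # reset for the next item
    return cart


def checkout_link(cart, base_url="https://shop.example/checkout"):
    """Encode the cart into a query string for a placeholder checkout URL."""
    items = ",".join(f"{i['sku']}:{i['qty']}{i['unit'] or ''}" for i in cart)
    return f"{base_url}?{urlencode({'items': items})}"
```

For the voice note "do kilo atta aur ek litre doodh", the parser yields two cart lines (2 kg of atta, 1 l of milk); filler words such as "aur" are ignored.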

Accessibility is a cornerstone of this multimodal strategy. For the elderly or first-time internet users, the system must offer IVR (Interactive Voice Response) fallbacks. If a smart speaker or app-based voice interaction fails due to connectivity or ASR confidence issues, the system should trigger an automated call in the user’s native language to complete the transaction. This ensures that no user is left behind due to technical barriers, reinforcing the inclusive nature of voice commerce.
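
The fallback decision described above reduces to a small routing function. The thresholds and channel names here are assumptions chosen for illustration; real values would come from pilot data.

```python
def choose_channel(asr_confidence, is_online, failed_attempts,
                   min_confidence=0.75, max_attempts=2):
    """Pick the next step for a voice turn.

    failed_attempts counts earlier low-confidence turns in this session;
    the current turn is evaluated on top of that count.
    """
    if not is_online:
        return "ivr_callback"      # connectivity lost: call the user back
    if asr_confidence >= min_confidence:
        return "continue_voice"    # transcript is trustworthy enough
    if failed_attempts + 1 >= max_attempts:
        return "ivr_callback"      # stop re-prompting, switch to IVR
    return "reprompt"              # ask the user to repeat once more
```

Capping re-prompts matters for first-time users: repeated "please say that again" loops are a common reason sessions are abandoned.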

Furthermore, smart speaker integration in India must account for the phonetic nuances of Indian languages. SSML (Speech Synthesis Markup Language) is used to tune the pitch, pace, and emphasis of the AI’s voice, making it sound more like a local store assistant than a robotic entity. By matching the AI’s persona to the regional context (for example, using appropriate honorifics in Tamil or festive greetings in Bengali), brands can build the trust necessary to move users from simple search to high-value purchases.
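
As a rough illustration of SSML tuning, the helper below wraps a prompt in `prosody` and `emphasis` elements from the W3C SSML specification. The function name and defaults are hypothetical, and individual TTS engines support different subsets of SSML, so the output should be checked against the target engine.

```python
from xml.sax.saxutils import escape


def build_ssml(text, lang="ta-IN", rate="medium", pitch="medium", emphasize=None):
    """Wrap text in SSML, optionally emphasizing the first occurrence of a phrase."""
    body = escape(text)  # escape &, <, > so the markup stays well-formed
    if emphasize:
        marked = escape(emphasize)
        body = body.replace(
            marked, f'<emphasis level="moderate">{marked}</emphasis>', 1
        )
    return (
        '<speak version="1.1" xmlns="http://www.w3.org/2001/10/synthesis" '
        f'xml:lang="{lang}">'
        f'<prosody rate="{rate}" pitch="{pitch}">{body}</prosody>'
        "</speak>"
    )
```

Passing `rate="slow"` gives a slower delivery for elderly or first-time users without changing the script itself.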

Sources:

- Razorpay: Understanding Hello! UPI

- Cloud9 Digital: AI Marketing Stats 2026

- TrueFan AI: Voice Commerce Tips

4. Language Playbooks and Voice SEO Regional Languages

To rank in search and voice assistant results, enterprises need a rigorous strategy for voice SEO in regional languages. Unlike traditional SEO, which focuses on short-tail keywords, voice SEO is built on long-tail, question-based queries. A user in Uttar Pradesh might ask, “₹10,000 ke andar sabse accha phone kaun sa hai?” (Which is the best phone under ₹10,000?). To capture this, content models must include Devanagari script, Romanized Hindi (often blended with English as Hinglish), and phonetic variants to ensure discoverability across all input methods.

Hindi voice search marketing requires a deep understanding of regional dialects like Bhojpuri or Haryanvi, which often influence the vocabulary used in voice queries. Enterprises should deploy “Speakable” schema markup and FAQ structures that specifically target these conversational phrases. By mapping phonetic variants to canonical product pages, brands can ensure that even if a user’s pronunciation is non-standard, the NLU engine can accurately resolve the intent and present the correct offering.
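
One way to publish conversational question variants is schema.org `FAQPage` structured data. The sketch below builds the JSON-LD for a set of phrasings that share one answer; the helper names and sample text are illustrative, and whether a search engine surfaces the markup is outside the site owner's control.

```python
import json


def faq_jsonld(question_variants, answer, lang):
    """Build a schema.org FAQPage object with one Question per phrasing."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": lang,
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question in question_variants
        ],
    }


def render_jsonld(data):
    """Serialize without ASCII escaping so Devanagari stays readable in the page."""
    return json.dumps(data, ensure_ascii=False, indent=2)
```

The rendered string is placed inside a `<script type="application/ld+json">` tag on the canonical product or category page that the variants map to.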

In the southern markets, Tamil conversational commerce AI must handle complex morphology and honorifics. Tamil is a highly agglutinative language where word endings change based on context and respect. A successful voice interface must recognize these nuances to avoid sounding jarring or disrespectful. Similarly, Bengali voice-activated offers should be timed with the cultural calendar, such as Durga Puja or Poila Boishakh, using seasonal hooks and dialect-specific idioms to resonate with the local audience.

The technical execution of voice SEO in regional languages involves implementing hreflang tags for each language and script variant and creating dedicated regional category pages. These pages should feature audio snippets and video content that mirror the voice queries they are designed to answer. This creates a virtuous cycle where the SEO strategy informs the voice interface, and the data from voice interactions further refines the SEO content, ensuring the brand remains the top recommendation for natural language commerce in India.
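
Generating the hreflang annotations is mechanical once the URL scheme is fixed. This sketch assumes one URL prefix per locale code (for example `/hi/`, `/ta/`), which is only one of several valid site structures; the domain is a placeholder.

```python
def hreflang_links(base_url, path, locales, default_locale):
    """Return <link rel="alternate"> tags for each locale plus an x-default entry."""
    if default_locale not in locales:
        raise ValueError("default_locale must be one of locales")

    def url(locale):
        return f"{base_url.rstrip('/')}/{locale}/{path.lstrip('/')}"

    tags = [
        f'<link rel="alternate" hreflang="{loc}" href="{url(loc)}" />'
        for loc in locales
    ]
    # x-default tells crawlers which version to serve when no locale matches.
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{url(default_locale)}" />'
    )
    return tags
```

Every regional page should carry the full set of tags, including a reference to itself, so that the annotations are reciprocal.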

Sources:

- ROI Hunt: E-commerce Trends 2026

- IndiaMART: Voice and Vernacular Powering the Next Wave

- MxMIndia: India’s Digital Surge 2026

5. Multilingual Voice Marketing Automation and Video Personalization

The true power of voice commerce is realized when it is paired with multilingual voice marketing automation. In 2026, the journey doesn’t end with a voice search; it is just the beginning of an automated, high-conversion funnel. When a voice intent is captured, the system immediately triggers a set of eligibility rules based on the user’s region, past purchase behavior, and current inventory. This data is then used to serve voice-triggered video offers that are dynamically generated to match the user’s specific query and language.
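
The eligibility rules mentioned above can be modeled as declarative records that are filtered against the captured intent. The rule fields, offer IDs, and region codes below are hypothetical examples, not a description of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoiceIntent:
    language: str
    region: str
    category: str


@dataclass(frozen=True)
class OfferRule:
    offer_id: str
    languages: frozenset
    regions: frozenset
    category: str
    requires_prior_purchase: bool = False


# Hypothetical rule set for illustration.
RULES = [
    OfferRule("puja-skincare-bn", frozenset({"bn"}), frozenset({"WB"}), "skincare"),
    OfferRule("loyalty-skincare", frozenset({"bn", "hi"}), frozenset({"WB", "UP"}),
              "skincare", requires_prior_purchase=True),
]


def eligible_offers(intent, past_categories, in_stock, rules=RULES):
    """Return offer IDs whose language, region, category, and history rules all pass."""
    if not in_stock:
        return []  # never promote an item that cannot be fulfilled
    return [
        rule.offer_id
        for rule in rules
        if intent.language in rule.languages
        and intent.region in rule.regions
        and intent.category == rule.category
        and (not rule.requires_prior_purchase or intent.category in past_categories)
    ]
```

Keeping rules as data rather than code lets regional marketing teams adjust offers without a deployment.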

Platforms like TrueFan AI enable enterprises to bridge the gap between a disembodied voice interaction and a high-trust visual experience. By generating dialect-specific shopping videos in real-time, brands can provide a “virtual salesperson” experience. For instance, if a user in Kolkata asks about a specific skincare product in Bengali, the system can instantly deliver a video via WhatsApp featuring a creator or AI avatar speaking the same dialect, explaining the product’s benefits, and providing a direct “Buy Now” link.

TrueFan AI’s 175+ language support and Personalised Celebrity Videos allow for a level of scale previously impossible. These videos are not generic advertisements; they are data-driven assets that include the user’s name, the specific product they mentioned, and a localized call-to-action. This level of conversational AI personalization has been shown to significantly outperform generic reminders, driving higher click-through rates (CTR) and average order values (AOV) in Tier-2 and Tier-3 markets.

The automation logic also handles the complexities of the Indian market, such as SKU substitutions and price fluctuations. If a requested item is out of stock, the voice-triggered video can suggest a relevant alternative in the user’s native language, maintaining the momentum of the sale. This seamless integration of voice, video, and automation creates a frictionless path to purchase that respects the user’s linguistic identity while maximizing the enterprise’s ROI.
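
A simple substitution policy, sketched below, picks the in-stock item from the same category whose price is closest to the requested one. This heuristic is an assumption for illustration; real systems typically also weigh brand affinity and pack size. The catalog entries are invented.

```python
# Hypothetical catalog snapshot.
CATALOG = {
    "SOAP-A": {"category": "soap", "price": 40.0, "stock": 0},
    "SOAP-B": {"category": "soap", "price": 42.0, "stock": 5},
    "SOAP-C": {"category": "soap", "price": 60.0, "stock": 9},
    "TEA-A": {"category": "tea", "price": 41.0, "stock": 3},
}


def suggest_substitute(requested_sku, catalog=CATALOG):
    """Return the requested SKU if available, else the closest-priced alternative."""
    wanted = catalog[requested_sku]
    if wanted["stock"] > 0:
        return requested_sku
    candidates = [
        (abs(item["price"] - wanted["price"]), sku)
        for sku, item in catalog.items()
        if sku != requested_sku
        and item["category"] == wanted["category"]
        and item["stock"] > 0
    ]
    # min() orders by price gap first, then by SKU code to break ties.
    return min(candidates)[1] if candidates else None
```

The returned SKU is what the voice prompt or video nudge would name when proposing the alternative in the user's language.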

6. ROI, Analytics, and Regional Shopping Behavior Analysis

Measuring the success of a voice strategy requires a shift from traditional web metrics to specialized regional shopping behavior analysis. Enterprises must track a new set of KPIs, including ASR confidence scores, intent success rates, and IVR fallback frequencies. By segmenting this data by language and dialect, brands can identify specific linguistic cohorts where the AI may be struggling and refine their lexicons accordingly. This granular approach is essential for optimizing voice assistant marketing ROI.
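
Segmenting these KPIs by language is a straightforward aggregation over session events. The event field names in this sketch are assumptions about what an analytics stream might contain.

```python
from collections import defaultdict


def kpis_by_language(events):
    """Aggregate per-language KPIs.

    Each event is a dict with keys: language, asr_confidence (0 to 1),
    intent_resolved (bool), ivr_fallback (bool).
    """
    buckets = defaultdict(list)
    for event in events:
        buckets[event["language"]].append(event)

    report = {}
    for language, rows in buckets.items():
        n = len(rows)
        report[language] = {
            "sessions": n,
            "mean_asr_confidence": sum(r["asr_confidence"] for r in rows) / n,
            # Booleans sum as 0/1, so these are simple proportions.
            "intent_success_rate": sum(r["intent_resolved"] for r in rows) / n,
            "ivr_fallback_rate": sum(r["ivr_fallback"] for r in rows) / n,
        }
    return report
```

The same grouping key can be extended to a (language, dialect) pair once dialect labels are available in the event stream.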

Solutions like TrueFan AI demonstrate ROI through their ability to convert voice intent into verified sales via personalized video nudges. Analytics dashboards now allow CTOs to see the direct correlation between a voice-initiated search and a video-assisted checkout. Metrics such as “Video View-to-Cart Addition” and “Dialect-Specific Conversion Lift” provide the data-driven insights needed to justify further investment in vernacular technologies. In 2026, the target for ASR accuracy across major Indic languages is 88-90%, with a goal of reducing IVR fallbacks to less than 12%.

The experimentation matrix for tier-2 voice adoption strategies involves A/B testing different voice personas and CTA scripts. For example, a brand might test whether a female voice with a formal register performs better for financial services in Tamil Nadu, while a more colloquial, male voice drives higher engagement for sports apparel in Punjab. These insights into regional preferences allow for the continuous refinement of the conversational AI, ensuring it remains relevant to the evolving shopping behaviors of the Indian consumer.
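
Before acting on a persona test, it is worth checking that the difference in conversion is larger than noise. The sketch below applies a standard two-proportion z-test using only the Python standard library; the function name and the conversion counts in the usage note are illustrative.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)
```

For example, 100 conversions from 1,000 sessions with persona A against 130 from 1,000 with persona B gives z of roughly 2.1 and a p-value of roughly 0.04, which would usually be read as a real but modest effect.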

Furthermore, the ROI model must account for the migration from Cash on Delivery (COD) to digital payments. Voice-enabled UPI confirmations, such as those powered by Hello! UPI, provide a secure and familiar way for vernacular users to pay. By tracking the “COD-to-UPI Migration Rate” within voice cohorts, enterprises can quantify the impact of voice commerce on reducing operational costs and improving cash flow. This holistic view of ROI ensures that voice is seen not just as a marketing tool, but as a fundamental driver of business efficiency.
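
The article does not define the “COD-to-UPI Migration Rate” formally, so the sketch below assumes one plausible definition: among users in a voice cohort whose first order was paid by COD, the share whose most recent order was paid by UPI.

```python
def cod_to_upi_migration_rate(orders):
    """orders: iterable of (user_id, timestamp, payment_method) tuples.

    Assumed definition: users who started on COD and whose latest order
    used UPI, divided by all users who started on COD.
    """
    methods_by_user = {}
    for user_id, _timestamp, method in sorted(orders, key=lambda o: (o[0], o[1])):
        methods_by_user.setdefault(user_id, []).append(method)

    cod_starters = [m for m in methods_by_user.values() if m[0] == "COD"]
    if not cod_starters:
        return 0.0
    migrated = sum(1 for m in cod_starters if m[-1] == "UPI")
    return migrated / len(cod_starters)
```

Comparing this rate between voice cohorts and non-voice cohorts is what isolates the contribution of voice-enabled payment flows.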

7. Compliance, Trust, and the 90-Day Implementation Roadmap

As enterprises scale their multilingual voice marketing automation, compliance with the Digital Personal Data Protection (DPDP) Act is non-negotiable. Every voice interaction must begin with a clear, multilingual consent prompt that explains how the data will be used. The system must support “purpose limitation,” ensuring that voice data captured for a specific transaction is not used for unrelated profiling without explicit permission. Conversational AI personalization must therefore be built on a foundation of trust and transparency.
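
Purpose limitation can be enforced in code by checking every use of voice data against a stored consent record. The following is an engineering sketch only, not legal guidance: the record fields, purpose names, and 30-day retention window are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ConsentRecord:
    user_id: str
    language: str            # language in which the consent prompt was played
    purposes: frozenset      # purposes the user explicitly agreed to
    granted_at: datetime
    retention_days: int = 30  # hypothetical retention window

    def allows(self, purpose, now=None):
        """True only if the purpose was consented to and retention has not lapsed."""
        now = now or datetime.now(timezone.utc)
        expired = now > self.granted_at + timedelta(days=self.retention_days)
        return (not expired) and purpose in self.purposes
```

A personalization service would call `allows("profiling")` before reading any stored transcript and skip the user when it returns False.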

The 90-day pilot plan for launching a vernacular voice commerce strategy in India begins with a focused scope: 3 product categories across 3 key languages (Hindi, Tamil, Bengali) in 20 target cities. The first 15 days are dedicated to setting up the Bhashini-backed ASR/NLU pipelines and defining the event stream for analytics. By day 45, the integration of Hello! UPI and the rollout of voice-triggered video offers via TrueFan AI should be live, allowing for the first wave of real-world data collection.

During the final 45 days of the pilot, the focus shifts to optimization and scaling. This involves A/B testing dialect-specific shopping videos and refining the ASR lexicons based on the initial intent match failures. The goal is to achieve a 15-25% lift in checkout conversions for the voice+video cohort compared to the voice-only control group. This phased approach allows enterprises to mitigate risk while building the internal expertise necessary for a full-scale national rollout.
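
The pilot's success criterion is a relative lift, which is computed as the difference in conversion rates divided by the control rate. A minimal sketch, with function names chosen for illustration and the 15% lower bound taken from the target above:

```python
def relative_lift(control_conv, control_n, treat_conv, treat_n):
    """Relative lift of the treatment (voice+video) over the control (voice-only)."""
    control_rate = control_conv / control_n
    treat_rate = treat_conv / treat_n
    return (treat_rate - control_rate) / control_rate


def meets_pilot_target(lift, minimum=0.15):
    """Check the lift against the lower bound of the 15-25% target range."""
    return lift >= minimum
```

For instance, 80 checkouts per 1,000 voice-only sessions against 96 per 1,000 voice+video sessions is a 20% relative lift, inside the target range.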

Ultimately, the success of tier-2 voice adoption strategies depends on the brand’s ability to provide a seamless, secure, and culturally resonant experience. By prioritizing accessibility features like slower TTS options for elderly users and clear disclosures for AI-generated content, enterprises can build long-term loyalty in the vernacular market. The 2026 imperative is clear: voice is the bridge to the next billion, and the time to build that bridge is now.

Frequently Asked Questions

How does DPDP compliance affect voice data collection in India?

DPDP requires explicit, informed consent for data processing. In voice commerce, this means providing clear audio prompts in the user’s native language and allowing them to opt out or delete their voice recordings easily. Enterprises must also ensure that voice data is stored securely and used only for the specified purpose of the transaction.

Can voice commerce handle code-mixed languages like Hinglish?

Yes. Modern ASR and NLU engines—particularly when integrated with Bhashini—are designed to handle code-mixing. They can recognize shifts between English nouns and Indic verbs, ensuring the intent is accurately captured even in fluid, conversational speech.

What is the role of video in a voice-first shopping journey?

Video serves as the persuasion and confirmation layer. While voice excels at intent capture, personalized videos provide visual trust to close the sale. Solutions leveraging 175+ languages and personalized celebrity videos deliver these nudges at scale and drive higher CTR and AOV.

Is smart speaker integration necessary for Tier-2 and Tier-3 markets?

Mobile voice is the primary entry point, but smart speaker adoption is rising as an all-in-one family device. Integrating with smart speakers ensures your brand is present for routine, in-home tasks while mobile voice handles on-the-go needs.

How should I measure the ROI of voice commerce initiatives?

Track conversion lift with voice-triggered videos, language/dialect-level intent success, ASR accuracy, IVR fallback reduction, CAC improvements in regional markets, and COD-to-UPI migration within voice cohorts.