India IT Rules 2026 AI Content Labeling: The Enterprise Guide to SGI Labels, 3-Hour Takedowns, and DPDP-Safe Video Personalization


Key Takeaways

- India’s 2026 IT Rules mandate clear, persistent SGI labels and platform detection for AI-generated media.

- Enterprises should adopt C2PA provenance and semi-fragile watermarking to meet transparency requirements.

- New timelines require 2–3 hour takedowns, demanding always-on incident response and evidence packs.

- Personalized AI video must be DPDP-safe with explicit consent, data minimization, and deletion workflows.

- TrueFan AI offers compliant-by-design architecture with embedded manifests and label automation.

India’s IT Rules 2026 on AI content labeling are now in force. They mandate prominent labels for AI-generated and manipulated media and tighten platform liability with 2–3 hour takedown timelines. Effective February 20, 2026, the amendment changes how enterprises must approach synthetic media, deepfakes, and personalized marketing. For Chief Technology Officers (CTOs) and Chief Marketing Officers (CMOs), compliance is no longer a peripheral concern but a core operational requirement for brand safety and legal standing.

The Ministry of Electronics and Information Technology (MeitY) has introduced these amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules to combat the rise of misinformation and non-consensual deepfakes. Platforms like TrueFan AI enable enterprises to navigate this complex landscape by providing tools that are compliant-by-design, ensuring that every piece of synthetic content meets the rigorous standards of provenance and transparency required by Indian law.

As the digital ecosystem evolves, the distinction between "routine edits" and "Synthetically Generated Information" (SGI) has become the new frontier of corporate governance. Enterprises must now integrate visible labels, persistent watermarking, and verifiable metadata into their creative workflows. Failure to do so risks the immediate removal of content, the potential loss of "safe harbor" protections for the platforms hosting their advertisements, and, where personal data is involved, heavy penalties under the Digital Personal Data Protection (DPDP) Act.

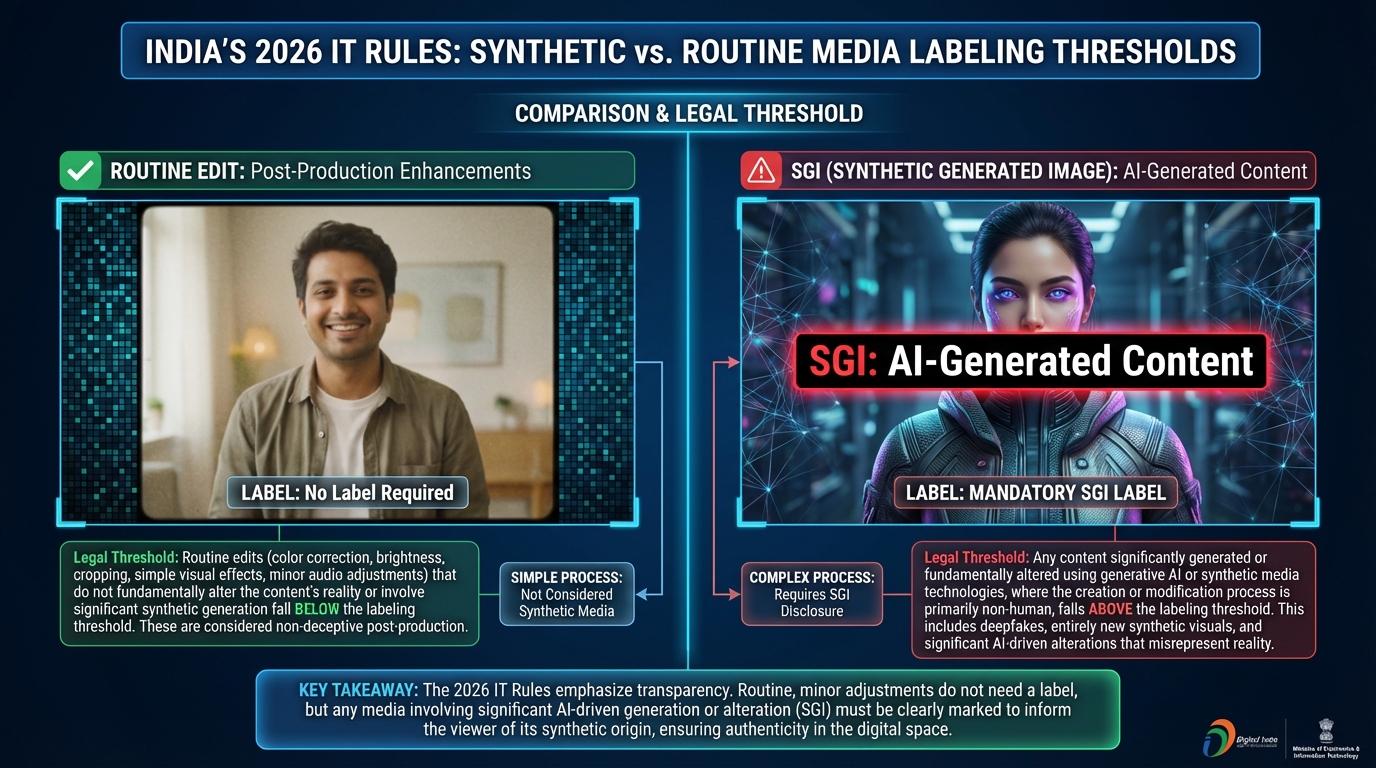

What Changed in the IT Intermediary Guidelines 2026 Amendment

The IT intermediary guidelines 2026 amendment has significantly expanded the scope of digital oversight in India. The most critical addition is the formal definition of "Synthetically Generated Information" (SGI). According to MeitY Rule 2(1), SGI includes "photorealistic AI-generated or significantly AI-altered content (images, video, audio) that could mislead users to believe it depicts real people, speech, or events." This definition purposefully excludes routine, non-misleading edits like color correction or background blurring, focusing instead on content that alters the perceived reality of a digital asset.

The amendment was officially notified on February 10, 2026, and came into full force on February 20, 2026. This ten-day window forced a rapid overhaul of terms of service and internal compliance workflows for all digital intermediaries. Significant Social Media Intermediaries (SSMIs)—those with more than 5 million users—are now under a heightened duty of care. They must not only provide tools for users to self-declare SGI but also deploy automated detection systems to identify and label undeclared synthetic content.

For the enterprise, this means the 2026 AI-generated content labeling rules must be satisfied at the point of creation. The rules mandate that labels be "clearly visible," "prominent," and "persist" with the media wherever it is shared. Persistence is a technical challenge: the label must remain embedded in the video or metadata even if the file is cropped, transcoded, or compressed. The MeitY AI content guidelines emphasize that the burden of transparency lies with both the content creator and the platform, creating a shared liability model that necessitates robust audit trails.

Sources:

- MeitY IT Rules 2026 (Official PDF)

- Khaitan & Co: IT Rules Amendment Summary

- JSA Prism: 2026 IT Intermediary Amendment Overview

- DSCI: IT Amendment Rules 2026 Resource

Labeling, Watermarking, and Provenance: Standards for Enterprise Marketing

Under India’s new synthetic media compliance framework, "clear and persistent" labeling is defined by specific technical and visual parameters. For enterprise marketing teams, this means moving beyond simple captions to integrated on-video overlays. A compliant overlay, such as "AI-generated video (SGI)," must maintain a contrast ratio of at least 4.5:1 to ensure readability across different screen types. The label must appear within the first 5–7 seconds of the video and again in the end card so that users do not miss the disclosure.
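The 4.5:1 threshold can be checked automatically before an overlay is approved. The sketch below assumes the ratio is computed with the WCAG 2.x relative-luminance formula (the article does not say which formula applies) and that the label sits on a solid backing plate whose color is known. The function names are illustrative.

```python
def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple, bg: tuple) -> float:
    """Contrast ratio between two colors, from 1.0 (identical) up to 21.0."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def label_is_readable(fg: tuple, bg: tuple, minimum: float = 4.5) -> bool:
    """True when the label text color meets the minimum ratio against its plate."""
    return contrast_ratio(fg, bg) >= minimum


if __name__ == "__main__":
    white, black = (255, 255, 255), (0, 0, 0)
    print(round(contrast_ratio(white, black), 2))  # white on black
    print(label_is_readable((119, 119, 119), white))  # mid-gray on white
```

For text drawn directly over moving footage, a check like this would have to run against sampled frames rather than a single background color, which is one reason a solid plate behind the label is the simpler design.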

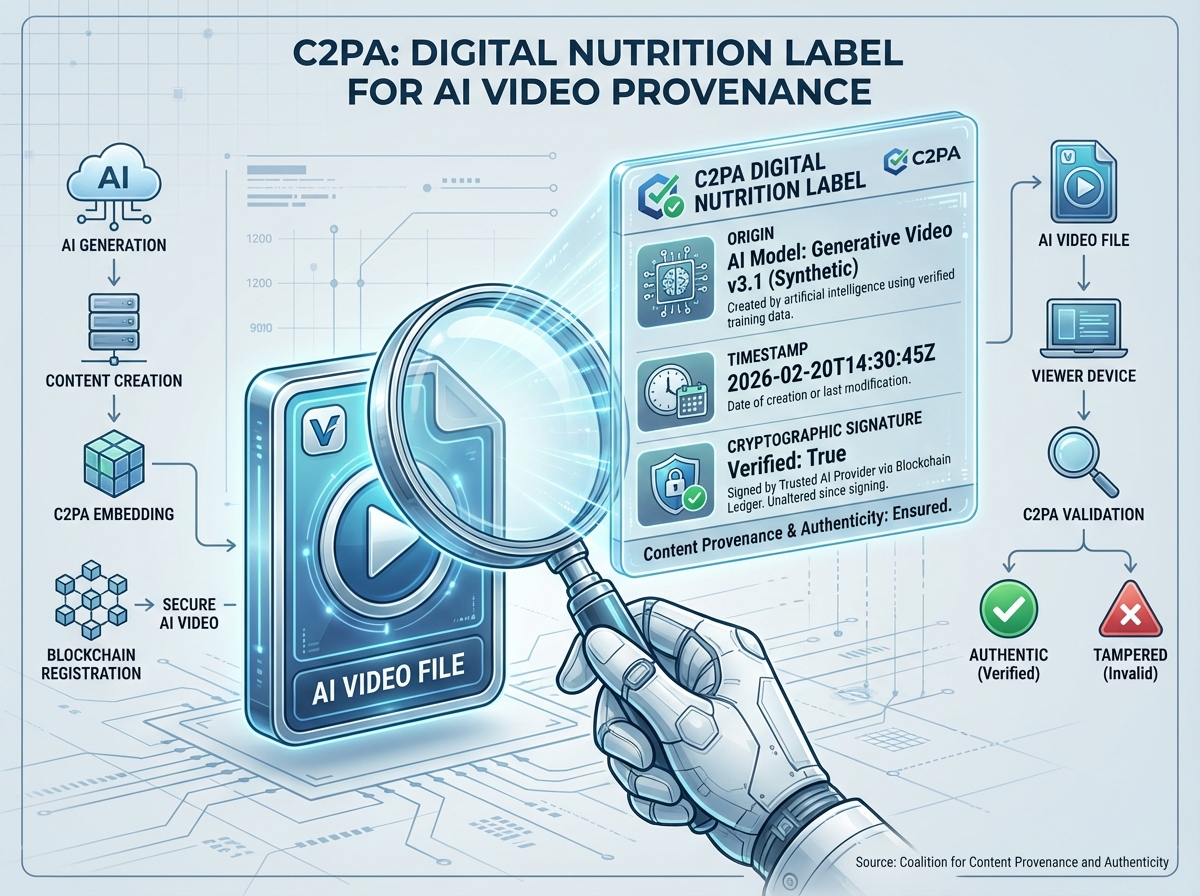

Beyond visual labels, India’s AI video watermarking requirements now lean heavily toward the C2PA (Coalition for Content Provenance and Authenticity) standard. This involves embedding a verifiable manifest into the digital file. The manifest acts as a "digital nutrition label," documenting the asset’s origin, the AI tools used, and any modifications made. Because these manifests are cryptographically signed, they provide a level of provenance and authenticity certification that simple watermarks cannot match, allowing platforms and regulators to verify the content’s history even after multiple shares.
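To make the idea of a signed manifest concrete, here is a deliberately simplified stand-in. It is not the C2PA format: real C2PA manifests are embedded in the file and signed with certificate-based signatures, whereas this sketch uses a JSON record and a shared-secret HMAC from the Python standard library. The field names, the demo key, and the tool name are all hypothetical.

```python
import hashlib
import hmac
import json


def build_manifest(asset_bytes: bytes, tools: list, edits: list) -> dict:
    """Describe an asset: content hash, AI tools used, modifications made."""
    return {
        "asset_sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "generator_tools": list(tools),
        "modifications": list(edits),
        "sgi": True,  # hypothetical flag marking the asset as synthetic
    }


def sign_manifest(manifest: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the manifest (HMAC stand-in)."""
    payload = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hmac.new(key, payload.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_asset(asset_bytes: bytes, manifest: dict, signature: str, key: bytes) -> bool:
    """Check that the manifest is untampered and still matches the asset."""
    expected = sign_manifest(manifest, key)
    if not hmac.compare_digest(expected, signature):
        return False
    return manifest["asset_sha256"] == hashlib.sha256(asset_bytes).hexdigest()


if __name__ == "__main__":
    key = b"demo-only-key"  # assumption: real systems use managed signing keys
    video = b"stand-in for rendered video bytes"
    manifest = build_manifest(video, ["hypothetical-avatar-model"], ["voice clone"])
    signature = sign_manifest(manifest, key)
    print(verify_asset(video, manifest, signature, key))
    print(verify_asset(video + b"edited", manifest, signature, key))
```

The point of the sketch is the binding: changing either the asset bytes or the manifest fields invalidates verification, which is what lets a platform trust the recorded history.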

Persistent watermarking is the second pillar of this technical requirement. Enterprises are encouraged to use semi-fragile watermarks embedded directly into the video and audio streams. These watermarks are designed to survive benign processing such as recompression or cropping while still revealing substantive tampering. For brands running cross-platform campaigns on YouTube, Meta, and X, it is vital to confirm that the watermarks survive each platform’s specific transcoding pipeline. If a platform’s automated system cannot detect the provenance, it may apply its own generic "Made with AI" badge, which might not align with the brand’s specific messaging or legal disclosures.

Sources:

- MediaNama: Platform Rollout and India’s Tightened Deepfake Rules

- PMF IAS: Regulation of AI Content in India

- India Today Insight: Transparency and Operational Hiccups

- TrueFan AI: AI-Generated Video Copyright (Enterprise Guide)

2–3 Hour Takedowns and Incident Response Operations

The most aggressive component of the 2026 amendment is the takedown requirement for synthetic media. Upon receiving a valid government or court order, intermediaries are now required to remove specified unlawful content within a 3-hour window. In certain high-risk categories, such as those involving public order or non-consensual sexual content, this window can be as short as 2 hours. This "takedown clock" begins the moment the intermediary receives the notification, leaving virtually no room for manual review or internal debate.

For enterprises, this necessitates an "always-on" incident response playbook. If a brand’s AI-generated campaign is flagged, whether due to a labeling error or a dispute over synthetic likeness, the marketing and legal teams must be able to respond in real time. A robust playbook includes pre-registering brand Points of Contact (PoCs) with platform legal portals and maintaining API access to creative libraries for instant removal or label correction. Brands should conduct quarterly "3-hour drills" that simulate a takedown order, ensuring that the chain of command from Marketing Ops to Legal is seamless.
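The takedown clock is simple arithmetic, but it is worth encoding so that on-call staff see a deadline rather than calculating one under pressure. This is a minimal sketch: the category names `standard` and `high_risk` are labels invented here for the 3-hour and 2-hour windows described above, and timestamps are assumed to be timezone-aware.

```python
from datetime import datetime, timedelta, timezone

# Windows taken from the text above; the category keys are hypothetical names.
TAKEDOWN_WINDOWS = {
    "standard": timedelta(hours=3),
    "high_risk": timedelta(hours=2),
}


def takedown_deadline(received_at: datetime, category: str = "standard") -> datetime:
    """Deadline measured from the moment the order was received."""
    if received_at.tzinfo is None:
        raise ValueError("received_at must be timezone-aware")
    return received_at + TAKEDOWN_WINDOWS[category]


def time_remaining(received_at: datetime, now: datetime, category: str = "standard") -> timedelta:
    """Time left on the clock; negative once the deadline has passed."""
    return takedown_deadline(received_at, category) - now


def is_overdue(received_at: datetime, now: datetime, category: str = "standard") -> bool:
    """True when the removal window has already closed."""
    return time_remaining(received_at, now, category) < timedelta(0)


if __name__ == "__main__":
    received = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
    print(takedown_deadline(received).isoformat())
    print(takedown_deadline(received, "high_risk").isoformat())
```

Rejecting naive timestamps is a deliberate choice: an order logged in local time and compared against a UTC clock could silently shift the deadline by hours.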

Platform liability has been significantly tightened to enforce these timelines. If an intermediary fails to act within the 3-hour window, it risks losing its safe harbor immunity from liability for user-generated content. Consequently, platforms are becoming hyper-vigilant, often opting to "take down first and ask questions later." To protect their media spend, enterprises should provide platforms with an "evidence pack" for every SGI asset, including timestamps, C2PA manifest URLs, and proof of consent. This proactive documentation is the fastest way to resolve disputes and restore content that has been erroneously flagged.
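An evidence pack is easiest to produce on demand if it is a fixed record created alongside each asset. The sketch below is one possible shape, built from the three items named above (timestamps, manifest URL, proof of consent). The field names, the ID formats, and the example URL are assumptions for illustration, not a format any platform is known to require.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvidencePack:
    """Per-asset record to hand to a platform when content is disputed."""
    creative_id: str
    rendered_at_utc: str        # ISO 8601 timestamp of the render
    published_at_utc: str       # ISO 8601 timestamp of first publication
    c2pa_manifest_url: str      # where the signed manifest can be fetched
    consent_record_ids: tuple = ()  # references into the consent log

    def missing_items(self) -> list:
        """Names of fields that are empty, in declaration order."""
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        """Serialize for upload or archival (tuples become JSON arrays)."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    pack = EvidencePack(
        creative_id="cr-001",
        rendered_at_utc="2026-03-01T08:00:00Z",
        published_at_utc="2026-03-01T09:00:00Z",
        c2pa_manifest_url="https://example.com/manifests/cr-001.json",
        consent_record_ids=("consent-42",),
    )
    print(pack.missing_items())
    print(pack.to_json())
```

Running `missing_items()` as a publishing gate means an incomplete pack is discovered before launch rather than during a takedown dispute.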

Sources:

- The Hindu: Faster Takedown of AI-Generated Deepfake Content

- DD News: Government Briefing on 3-Hour Takedowns

- Times of India: Government Cracks Down on Deepfakes

- Hogan Lovells: Mandatory AI Labelling and 3-Hour Takedown Summary

DPDP Act Implications for AI Video Personalization

The labeling requirements of India’s IT Rules 2026 and the Digital Personal Data Protection (DPDP) Act intersect to create a complex regulatory environment for personalized marketing. When AI video personalization uses personal data, such as a customer’s name, purchase history, or location, to generate a custom video, it triggers strict DPDP obligations. Processing this data requires a clear lawful basis, which in most marketing contexts is explicit, granular consent.

Responsible AI marketing in India requires that consent be as easy to withdraw as it is to give. Enterprises must maintain detailed consent logs that link a specific user’s permission to the unique creative ID of the personalized video generated for them. Furthermore, the principle of data minimization requires that only the necessary PII (Personally Identifiable Information) is sent to the AI rendering engine. Techniques like tokenization, where sensitive data is replaced with non-sensitive identifiers during the render process, are now considered an industry best practice for mitigating risk.
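The three ideas in this paragraph (a consent record tied to a creative ID, data minimization, and tokenization) can be combined in a single request-building step. What follows is a sketch under stated assumptions: the in-memory `TokenVault`, the `ALLOWED_RENDER_FIELDS` allow-list, and the record fields are all hypothetical, and a production vault would be a separate, access-controlled service.

```python
import secrets
from dataclasses import dataclass

# Assumption: only these fields are actually needed to render the video.
ALLOWED_RENDER_FIELDS = {"first_name", "city"}


class TokenVault:
    """Maps random tokens to real values; only the vault can reverse them."""

    def __init__(self):
        self._values = {}

    def tokenize(self, value: str) -> str:
        token = "tok_" + secrets.token_hex(8)
        self._values[token] = value
        return token

    def detokenize(self, token: str) -> str:
        return self._values[token]


@dataclass
class ConsentRecord:
    """Links one user's permission to one personalized creative."""
    user_id: str
    creative_id: str
    purpose: str
    active: bool = True


def render_request(customer: dict, consent: ConsentRecord, vault: TokenVault) -> dict:
    """Build the payload for the render engine, or refuse without consent."""
    if not consent.active or consent.user_id != customer["user_id"]:
        raise PermissionError("no active consent for this user")
    # Data minimization: drop everything outside the allow-list,
    # then replace the remaining values with tokens.
    fields = {
        name: vault.tokenize(value)
        for name, value in customer.items()
        if name in ALLOWED_RENDER_FIELDS
    }
    return {"creative_id": consent.creative_id, "fields": fields}


if __name__ == "__main__":
    vault = TokenVault()
    customer = {"user_id": "u-1", "first_name": "Asha", "city": "Pune", "phone": "+91-0000000000"}
    consent = ConsentRecord("u-1", "cr-001", "personalized_video")
    print(render_request(customer, consent, vault))
```

In this design the render engine never receives the phone number at all, and the name and city reach it only as tokens that are resolved at the last step, inside the trusted boundary.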

Data rights operations must also be integrated into the personalization pipeline. If a customer exercises their right to erasure or otherwise requests deletion of their data, the enterprise must ensure that all associated personalized AI videos are also removed or de-identified. This requires tight integration between the CRM, the AI personalization engine, and the distribution channels (like WhatsApp or Email). TrueFan AI’s Personalised Celebrity Videos, available in 175+ languages, are built on this foundation of consent, so that every interaction is both legally compliant and brand-safe.
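A deletion request only works end to end if every rendered video can be traced back to the user it was made for and to the channels it was sent through. The registry below is a hypothetical in-memory sketch of that lookup; the class names and the channel strings are assumptions, and the actual recall calls to each channel are left out.

```python
from dataclasses import dataclass


@dataclass
class PersonalizedVideo:
    """One rendered video and the channels it was distributed on."""
    creative_id: str
    user_id: str
    channels: tuple
    erased: bool = False


class VideoRegistry:
    """Tracks which videos exist for which user."""

    def __init__(self):
        self._videos = []

    def register(self, video: PersonalizedVideo) -> None:
        self._videos.append(video)

    def active_for(self, user_id: str) -> list:
        """Creative IDs still live for this user."""
        return [v.creative_id for v in self._videos
                if v.user_id == user_id and not v.erased]

    def erase_user(self, user_id: str) -> list:
        """Mark every video for the user as erased.

        Returns (creative_id, channel) pairs that still need a recall
        request sent to the corresponding distribution channel.
        """
        recall_tasks = []
        for video in self._videos:
            if video.user_id == user_id and not video.erased:
                video.erased = True
                recall_tasks.extend((video.creative_id, ch) for ch in video.channels)
        return recall_tasks


if __name__ == "__main__":
    registry = VideoRegistry()
    registry.register(PersonalizedVideo("cr-001", "u-1", ("whatsapp", "email")))
    registry.register(PersonalizedVideo("cr-002", "u-2", ("email",)))
    print(registry.erase_user("u-1"))
    print(registry.active_for("u-1"))
```

Returning the recall tasks instead of performing them keeps the registry testable and lets each channel integration handle its own retries.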

Sources:

- PMF IAS: DPDP and AI Content Regulation Context

- MediaNama: Platform Compliance Under 2026 IT Amendment

- Vikaspedia: IT Amendment Rules, 2026 Overview

Why TrueFan AI: Compliant-by-Design Architecture for 2026

Navigating deepfake regulation in India as an enterprise requires more than a creative tool; it requires a secure, audited infrastructure. Solutions like TrueFan AI demonstrate ROI through their ability to automate compliance tasks that would otherwise require hundreds of manual hours. By operating in ISO 27001 and SOC 2-certified environments, TrueFan AI ensures that the data used for AI generation is protected by enterprise-grade security controls, including encryption in transit and at rest, and strict access governance.

The TrueFan AI architecture is specifically mapped to the 2026 MeitY AI content guidelines. Every asset generated through the platform includes an embedded C2PA-style manifest and a visible watermark overlay by default. This "compliance-first" approach means that marketing teams don't have to worry about whether their content will be flagged by platform algorithms. Furthermore, TrueFan AI’s "virtual reshoot" capability allows brands to instantly update labels or disclosures across thousands of personalized videos without needing to re-render from scratch, a critical feature for maintaining agility in a shifting regulatory environment.

Beyond technical labeling, TrueFan AI prioritizes the ethical dimension of AI. The platform only uses contracted and fully consented celebrity likenesses, providing a legal shield against publicity rights and AI-generated video copyright claims. This consent-first model extends to the end-user as well, with built-in hooks for interactive, DPDP-ready consent capture and revocation. By providing a clear chain of custody from the initial talent contract to the final rendered video, TrueFan AI offers the auditability that legal and compliance officers require to approve large-scale AI campaigns in 2026.

Sources:

- TrueFan AI: Enterprise Solutions Overview

- Freshfields: India Targets Deepfakes and AI-Generated Content

- Galaxy Classes: Summary of India’s 2026 AI Rules

Enterprise SGI Compliance Checklist

To ensure your organization meets the enterprise AI content declaration rules, follow this comprehensive checklist for every AI-enabled campaign:

Phase 1: Pre-Publishing & Creation

- SGI Declaration: Is the content clearly identified as "Synthetically Generated Information" in the creative brief?

- Visual Labeling: Does the video include a high-contrast overlay (min 4.5:1) stating "AI-generated" within the first 5 seconds?

- Provenance Embedding: Has a C2PA manifest been attached to the file, documenting the AI tools and modifications?

- Watermarking: Is a semi-fragile watermark embedded in both the video and audio streams?

- Consent Verification: Is there a signed contract for any celebrity likeness, and is end-user consent logged for personalization?
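The Phase 1 items lend themselves to an automated pre-publish gate. The sketch below encodes the five checks above as a function that returns the list of failures. The `CreativeAsset` fields are hypothetical names for whatever a creative pipeline actually records, and the thresholds (4.5:1, first 5 seconds) are copied from the checklist.

```python
from dataclasses import dataclass


@dataclass
class CreativeAsset:
    """Facts about one asset that a creative pipeline would need to record."""
    declared_sgi: bool
    label_contrast_ratio: float
    label_first_shown_at_s: float
    has_c2pa_manifest: bool
    watermark_in_video: bool
    watermark_in_audio: bool
    uses_celebrity_likeness: bool = False
    likeness_contract_signed: bool = False
    is_personalized: bool = False
    end_user_consent_logged: bool = False


def phase1_failures(asset: CreativeAsset) -> list:
    """Return the Phase 1 checklist items this asset does not satisfy."""
    failures = []
    if not asset.declared_sgi:
        failures.append("SGI Declaration")
    if asset.label_contrast_ratio < 4.5 or asset.label_first_shown_at_s > 5.0:
        failures.append("Visual Labeling")
    if not asset.has_c2pa_manifest:
        failures.append("Provenance Embedding")
    if not (asset.watermark_in_video and asset.watermark_in_audio):
        failures.append("Watermarking")
    # Consent checks apply only when the relevant kind of data is used.
    likeness_ok = asset.likeness_contract_signed or not asset.uses_celebrity_likeness
    consent_ok = asset.end_user_consent_logged or not asset.is_personalized
    if not (likeness_ok and consent_ok):
        failures.append("Consent Verification")
    return failures


if __name__ == "__main__":
    draft = CreativeAsset(
        declared_sgi=True,
        label_contrast_ratio=3.9,
        label_first_shown_at_s=2.0,
        has_c2pa_manifest=True,
        watermark_in_video=True,
        watermark_in_audio=False,
    )
    print(phase1_failures(draft))
```

An empty list means the asset can move on to Phase 2. Anything else blocks publication and tells the team which checklist item to revisit.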

Phase 2: Distribution & Monitoring

- Platform Toggles: Has the "AI-generated content" toggle been activated in the Meta/YouTube/X ad manager?

- Persistence Check: Does the label remain visible after the video has been uploaded and processed by the platform?

- Real-time Alerts: Are social listening tools and platform webhooks configured to flag any regulatory notices?

- 3-Hour Runbook: Is the legal and marketing "on-call" team ready to execute a takedown if an order is received?

Phase 3: Audit & Maintenance

- Records Retention: Are all C2PA manifests, consent logs, and timestamps stored in a central, searchable archive?

- Quarterly Drills: Has the team performed a simulated 3-hour takedown to identify bottlenecks in the escalation matrix?

- Policy Refresh: Have internal AI usage policies been updated to reflect the February 20, 2026, effective date?

Conclusion: The Path to Responsible AI Marketing

India’s IT Rules 2026 on AI content labeling represent a maturing of the digital landscape. While the 3-hour takedown windows and mandatory C2PA manifests may seem daunting, they provide a framework for building deeper trust with consumers. By embracing these rules, enterprises can distinguish themselves as ethical leaders in the age of synthetic media.

Compliance is not a one-time event but a continuous operational standard. As platforms refine their detection algorithms and regulators increase their oversight, the brands that succeed will be those that integrate transparency directly into their creative DNA. With the right tools and a proactive strategy, the transition to SGI-compliant marketing can be a seamless evolution rather than a regulatory hurdle.

Disclaimer: This article is for informational purposes only and does not constitute legal advice. Enterprises should consult with their legal counsel to ensure full compliance with the Information Technology Rules 2026 and the DPDP Act.

Frequently Asked Questions

What exactly qualifies as SGI versus a routine edit?

Under the MeitY AI content guidelines, SGI refers to content that is photorealistic and could mislead a user into thinking it depicts a real person or event. Examples include voice cloning, face-swapping, or AI-generated avatars. Routine edits like color grading, noise reduction, or basic background removal do not require SGI labeling as they do not fundamentally alter the "truth" of the media.

How is the “3-hour” takedown window measured?

The 3-hour window begins the moment a valid order from a government agency or a court is received by the intermediary's designated grievance officer or legal portal. For enterprises, internal response time should be under 60 minutes to allow the platform time to process the removal.

Who is liable if an AI-generated ad is not labeled correctly?

Liability is shared. The intermediary (platform) can be penalized for failing to enforce the rules, while the advertiser risks account suspension, wasted media spend, and potential action under the DPDP Act where personal data was used without valid consent.

Does TrueFan AI support the C2PA standard for all its videos?

Yes. TrueFan AI’s Personalised Celebrity Videos, available in 175+ languages, include the option to embed C2PA-compliant metadata and manifests, ensuring a verifiable digital trail of origin and AI-generated status for every asset.

Can we use a single disclaimer for a video that is only partially AI-generated?

If only specific elements are synthetic (e.g., an AI voiceover on real footage), use the label “Contains synthetically generated elements (SGI).” This is more precise than a blanket “AI-generated” label and aligns with the amendment’s focus on preventing user deception.
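The labeling choice described here can be made mechanical once each element of a video is tagged as synthetic or not. This is a minimal sketch: the two label strings come from this article, while the element names and the rule that a fully real video gets no label are assumptions for illustration.

```python
from typing import Optional

FULL_LABEL = "AI-generated video (SGI)"
PARTIAL_LABEL = "Contains synthetically generated elements (SGI)"


def choose_label(elements: dict) -> Optional[str]:
    """Pick a disclosure label from a map of element name -> is_synthetic.

    Returns None when nothing is synthetic, the partial label when only
    some elements are, and the full label when every element is.
    """
    if not elements:
        raise ValueError("at least one element is required")
    flags = list(elements.values())
    if not any(flags):
        return None
    if all(flags):
        return FULL_LABEL
    return PARTIAL_LABEL


if __name__ == "__main__":
    # An AI voiceover on real footage, as in the example above.
    print(choose_label({"footage": False, "voiceover": True}))
```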