AI Celebrity Video Rights India 2026: The Enterprise Legal, Consent, and Brand-Safety Playbook

Estimated reading time: ~10 minutes

Key Takeaways

- Adopt a consent-first architecture to meet DPDP and IT Rules obligations and avoid rapid takedowns.

- 2–3 hour takedown SLAs and deepfake labelling mandates require 24/7 monitoring and grievance redressal.

- Personality and publicity rights protect celebrity name, image, likeness, and voice—explicit “consent-to-clone” licenses are essential.

- Use C2PA/watermarking and verifiable provenance to strengthen brand safety and platform trust.

- Operationalize compliance via an enterprise AI video legal checklist spanning pre-production to distribution and governance.

In India, AI celebrity video rights in 2026 refer to the bundle of statutory, judicial, and contractual controls governing a brand’s use of a celebrity’s name, image, likeness, and voice in AI-generated or synthetically reanimated videos. In 2026, enforcement of the Digital Personal Data Protection (DPDP) Act intersects with revised IT Rules for deepfakes and with global provenance standards. In this “enforcement nexus” year, consent is no longer a suggestion but a technical requirement for survival.

Indian enterprises now face a regulatory environment in which MeitY’s IT Rules deepfake obligations have tightened labelling requirements and compressed takedown timelines to as little as 2–3 hours. At the same time, courts have expanded personality and publicity rights to protect against unauthorized AI clones. To scale AI video marketing safely, CMOs and general counsel (GCs) must adopt a consent-first architecture and celebrity-rights-cleared pipelines.

1. The 2026 Regulatory Shift: IT Rules Amendments and Deepfake Marketing Regulations in India

The year 2026 has introduced some of the world's most stringent timelines for managing synthetic media. Under the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules 2026, which took effect on February 20, the definition of “deepfake” is now statutory. Platforms (intermediaries) must remove specified categories of non-consensual deepfakes within 2 hours, while a broader 3-hour window applies to other AI-generated content once it is flagged. Brands are not intermediaries, but because their campaigns run on these platforms, they inherit the same clock.
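To make the clock concrete, here is a minimal sketch of how a monitoring team might compute response deadlines from the flag timestamp, assuming the 2-hour and 3-hour windows described above. The category labels and the `takedown_deadline` and `minutes_remaining` helpers are hypothetical, and the mapping of content types to windows should be confirmed with counsel against the notified rules.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical category labels; the windows mirror the 2-hour and 3-hour
# figures described above and should be confirmed against the notified rules.
TAKEDOWN_WINDOWS = {
    "non_consensual_deepfake": timedelta(hours=2),
    "other_flagged_ai_content": timedelta(hours=3),
}

IST = timezone(timedelta(hours=5, minutes=30))


def takedown_deadline(flagged_at: datetime, category: str) -> datetime:
    """Return the latest time by which flagged content must be actioned."""
    if flagged_at.tzinfo is None:
        # Naive timestamps make IST/UTC mix-ups easy, so refuse them.
        raise ValueError("flagged_at must be timezone-aware")
    try:
        window = TAKEDOWN_WINDOWS[category]
    except KeyError:
        raise ValueError(f"unknown category: {category}") from None
    return flagged_at + window


def minutes_remaining(flagged_at: datetime, category: str, now: datetime) -> float:
    """Minutes left before the deadline (negative once it has passed)."""
    return (takedown_deadline(flagged_at, category) - now).total_seconds() / 60
```

For example, content flagged at 22:15 IST in the non-consensual category would need to be actioned by 00:15 IST the next day. Requiring timezone-aware timestamps avoids a silent UTC/IST mismatch eating into a two-hour window.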

This shift responds to a reported 550% rise in deepfake cases in India, with projected losses of ₹70,000 crore. According to the 2026 Thales Data Threat Report, 65% of organizations in India have already experienced deepfake-driven attacks. Consequently, AI video marketing compliance in India now requires real-time monitoring and a 24/7 grievance redressal mechanism to meet these compressed SLAs.

Strategic risk for CMOs has shifted from simple copyright infringement to operational paralysis. A campaign launched without pre-cleared consent can be taken down within hours, wasting the paid media already committed to it. India’s deepfake marketing regulations now require every piece of synthetic content to carry clear, prominent, and persistent labels to ensure consumer transparency.

Sources:

- MeitY: Advisory on deepfakes and platform obligations

- MediaNama: 2-hour IT Amendment Rules 2026 overview

- LiveMint: Centre amends IT Rules for AI labels and takedowns

2. The Legal Spine: DPDP Act Compliance and Copyright for Enterprise AI Video

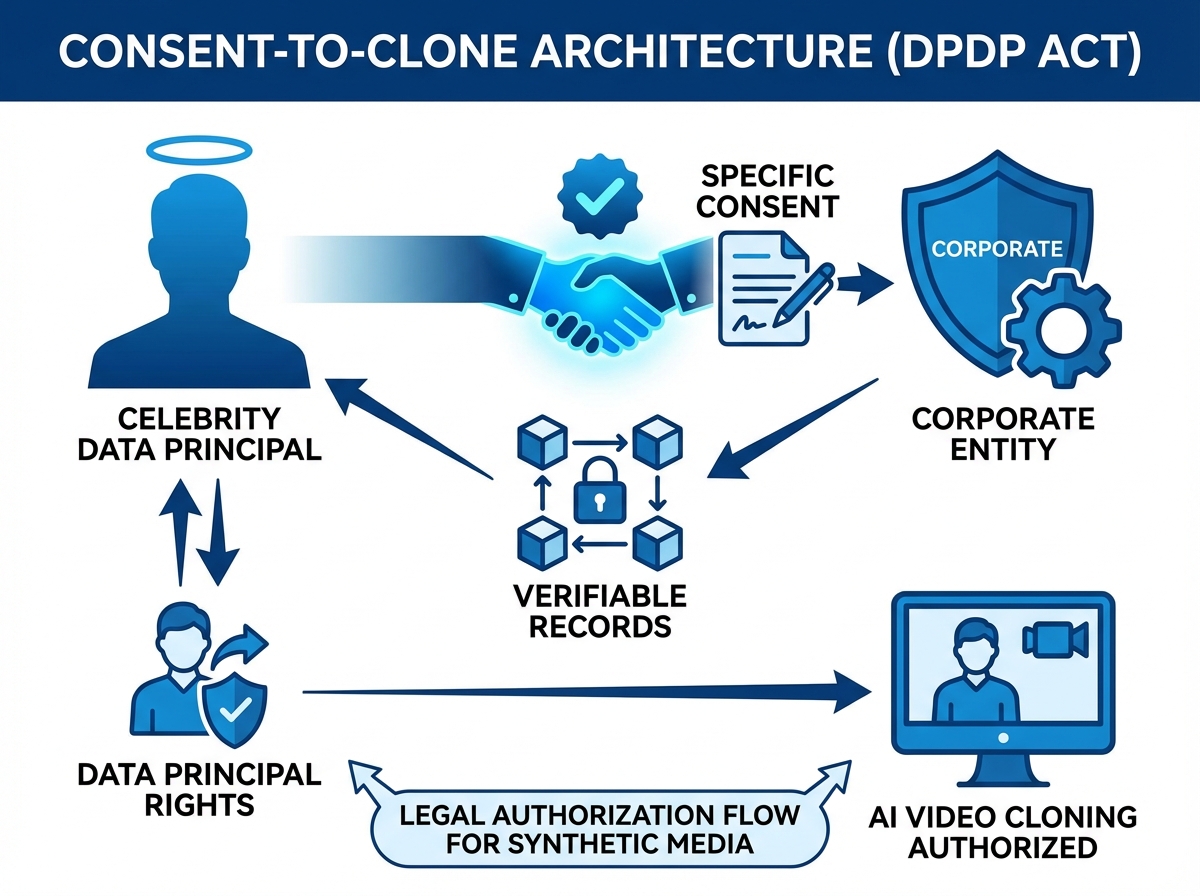

The DPDP Act is the primary framework governing personal data processing for AI video in 2026. Under Section 6, valid consent must be free, specific, informed, unconditional, and unambiguous, signified through a clear affirmative action. For AI video personalization, this means brands must maintain verifiable records of consent from both the celebrity and any end-user whose data is used for personalization; each is a Data Principal under the Act.
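The Section 6 conditions map naturally onto fields of a consent record that can be audited later. The sketch below is an illustrative data model, not a statutory schema: the `ConsentRecord` fields, the accepted action labels, and the `validate_consent` checks are assumptions about how a team might encode “specific”, “informed”, and “clear affirmative action”.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ConsentRecord:
    # Hypothetical fields; adapt to your own consent-management system.
    principal_id: str            # celebrity or end-user (the Data Principal)
    purposes: frozenset[str]     # specific purposes consented to
    notice_version: str          # which notice the principal was shown
    affirmative_action: str      # how consent was signified
    captured_at: datetime
    withdrawn_at: datetime | None = None


# Hypothetical labels treated as a clear affirmative act; silence or
# pre-ticked boxes should never be recorded as one of these.
AFFIRMATIVE_ACTIONS = {"checkbox_ticked", "signed_license", "button_clicked"}


def validate_consent(record: ConsentRecord, purpose: str) -> list[str]:
    """Return a list of problems; an empty list means the record covers `purpose`."""
    problems = []
    if record.affirmative_action not in AFFIRMATIVE_ACTIONS:
        problems.append("no clear affirmative action recorded")
    if not record.notice_version:
        problems.append("no notice version recorded (informed consent)")
    if purpose not in record.purposes:
        problems.append(f"purpose '{purpose}' not covered (specific consent)")
    if record.withdrawn_at is not None:
        problems.append("consent has been withdrawn")
    return problems
```

Returning a list of problems rather than a single boolean gives the audit trail a human-readable reason for every refused render.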

Enterprises must integrate “just-in-time” notices that clearly state the purpose of processing, the duration of retention, and the rights of the individual. Where a campaign may process the data of children (anyone under 18 under the DPDP Act), verifiable parental consent is mandatory under Section 9. Platforms like TrueFan AI enable enterprises to navigate these complexities by ensuring every celebrity likeness is used under a formal, contract-backed consent model.
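The notice and parental-consent requirements can be enforced as a hard gate before any personal data enters the render pipeline. A minimal sketch, assuming the enterprise already holds a verified date of birth and a boolean result from whatever verifiable parental-consent mechanism it uses; `age_on` and `may_process` are hypothetical helpers.

```python
from datetime import date

CHILD_AGE_THRESHOLD = 18  # the DPDP Act treats anyone under 18 as a child


def age_on(dob: date, today: date) -> int:
    """Completed years of age on `today`."""
    had_birthday = (today.month, today.day) >= (dob.month, dob.day)
    return today.year - dob.year - (0 if had_birthday else 1)


def may_process(dob: date, today: date, notice_shown: bool,
                user_consented: bool, parental_consent_verified: bool) -> bool:
    """Allow processing only after a notice and the right kind of consent."""
    if not notice_shown:
        return False  # the just-in-time notice must precede the consent request
    if age_on(dob, today) < CHILD_AGE_THRESHOLD:
        # For children, only verified parental consent counts (hypothetical flag).
        return parental_consent_verified
    return user_consented
```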

On copyright for enterprise AI-generated video, the legal community remains focused on the “human-in-the-loop” requirement. Purely autonomous AI outputs often lack protectable authorship in India. However, human-directed creative selection and arrangement strengthen a copyright claim, provided the training inputs and celebrity footage are fully licensed.

Sources:

- Digital Personal Data Protection Act, 2023 (full text)

- IIPRD: Generative AI and copyright trends in India

- Law.asia: India’s deepfake regulation commentary

3. Celebrity Likeness Rights in AI Video and Judicial Precedents

The protection of celebrity likeness rights AI video is grounded in Article 21 of the Constitution, covering privacy and dignity. Indian courts have been proactive in granting “John Doe” orders to protect the personality rights of A-listers. Landmark cases like Amitabh Bachchan v. Rajat Nagi & Ors. (2022) and Anil Kapoor v. Simply Life India (2023) have set the standard for injunctive relief against unauthorized AI clones.

In the Anil Kapoor case, the Delhi High Court extended protection to the actor's image, likeness, voice, and even signature catchphrases. Alongside personality rights, any celebrity deepfake strategy for India in 2026 must account for moral rights under the Copyright Act, 1957: Section 57 protects authors and Section 38B protects performers. These rights allow the holder to restrain, or claim damages for, distortion, mutilation, or modification of their work or performance that would be prejudicial to their honor or reputation.

For enterprises, this means an AI avatar or “digital double” requires an explicit license covering specific “Authorized AI Uses.” Unauthorized use risks swift injunctions and significant reputational damage. Celebrity IP rights in video personalization must be treated as a high-value asset class, with rigorous audit trails proving the chain of custody for every synthetic frame generated.
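One way to make the chain of custody auditable is an append-only render log in which each entry commits to the hash of the previous one, so that editing an earlier entry breaks verification. This is a simplified sketch using SHA-256 from Python's standard library; the payload fields (`asset_id`, `consent_id`, `model`) are hypothetical, and a production system would also need durable storage and signing.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    # Canonical JSON (sorted keys) so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list[dict], payload: dict) -> None:
    """Append a render event that commits to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev, "hash": _entry_hash(prev, payload)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; editing, reordering, or deleting an interior entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True
```

Hash chaining alone cannot detect deletion of the most recent entries; that requires anchoring the latest hash somewhere the log's operators cannot rewrite.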

Sources:

- LiveLaw: Amitabh Bachchan personality rights order

- Khurana & Khurana: Celebrity endorsements and AI persona rights

- Conventus Law: Identity and personality rights in the age of deepfakes

4. A Synthetic Media Consent Framework for India: The Enterprise Architecture

A robust synthetic media consent framework for India requires a “consent-to-clone” architecture. It begins with capturing high-fidelity video and written consents that specify the scope of use, including the specific AI models used for generation. Purpose limitation is critical: enterprises must bind generation and distribution strictly to enumerated campaigns and block reuse across unrelated projects.
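Purpose limitation is easiest to enforce when the consent scope is machine-readable and checked before every render. A minimal sketch, assuming a hypothetical `ConsentScope` that lists the enumerated campaigns and approved generation models; a request outside either list is refused up front rather than discovered in an audit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentScope:
    # Hypothetical structure mirroring the scope written into the consent.
    consent_id: str
    campaigns: frozenset  # enumerated campaigns the celebrity approved
    models: frozenset     # AI models named in the consent


def check_render(scope: ConsentScope, campaign: str, model: str) -> None:
    """Raise PermissionError if the request falls outside the consented scope."""
    if campaign not in scope.campaigns:
        raise PermissionError(f"{scope.consent_id}: campaign '{campaign}' not in scope")
    if model not in scope.models:
        raise PermissionError(f"{scope.consent_id}: model '{model}' not in scope")
```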

An enterprise deepfake consent framework must also include operational SLAs for revocation. If a celebrity withdraws consent, the DPDP Act requires processing to stop within a reasonable time; the 2–3 hour takedown windows that the IT Rules impose on platforms are a prudent internal benchmark for removing all assets and ceasing generation. Meeting that benchmark requires a centralized consent registry mapped to each unique asset ID and render log.
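The consent registry can be sketched as a mapping from consent IDs to the asset IDs rendered under them, so that a withdrawal immediately yields the list of assets to pull and blocks new renders. This is an in-memory illustration with hypothetical names (`ConsentRegistry`, `record_render`, `revoke`); a real registry would be a durable, access-controlled datastore feeding the takedown workflow.

```python
from __future__ import annotations

from collections import defaultdict
from datetime import datetime, timezone


class ConsentRegistry:
    """Hypothetical in-memory registry linking consents to rendered assets."""

    def __init__(self) -> None:
        self._assets = defaultdict(set)  # consent_id -> asset IDs rendered under it
        self._revoked = {}               # consent_id -> time of withdrawal

    def record_render(self, consent_id: str, asset_id: str) -> None:
        # New renders are blocked as soon as consent is withdrawn.
        if consent_id in self._revoked:
            raise PermissionError(f"consent {consent_id} was withdrawn; render blocked")
        self._assets[consent_id].add(asset_id)

    def revoke(self, consent_id: str, when: datetime | None = None) -> list:
        """Mark consent withdrawn and return the assets that must be taken down."""
        self._revoked[consent_id] = when or datetime.now(timezone.utc)
        return sorted(self._assets.get(consent_id, set()))
```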

Enterprise AI avatar deployments also demand data minimization. When personalizing videos with user names or locations, brands should collect only the data necessary for that specific render. TrueFan AI's 175+ language support and Personalised Celebrity Videos are built on this foundation, ensuring that multilingual scale does not compromise data integrity or legal compliance.
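Data minimization can be enforced mechanically by allowlisting, per template, the only fields a render may receive. A minimal sketch; the template names and field lists are hypothetical.

```python
# Hypothetical templates and the only user fields each one needs to render.
TEMPLATE_FIELDS = {
    "greeting_v1": {"first_name", "language"},
    "store_locator_v1": {"first_name", "city", "language"},
}


def minimize(template: str, user_record: dict) -> dict:
    """Return only the fields the template needs; fail if any are missing."""
    allowed = TEMPLATE_FIELDS[template]
    missing = allowed - set(user_record)
    if missing:
        raise KeyError(f"missing fields for {template}: {sorted(missing)}")
    # Everything not on the allowlist (phone, email, ...) is dropped here.
    return {k: user_record[k] for k in allowed}
```

Placing the filter at the boundary of the render service means downstream systems never see fields such as phone numbers, even if the upstream CRM export contains them.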

Sources:

- MeitY Advisory (Mar 2024): AI content labelling

- Biometric Update: India’s new deepfake rules for social media

5. Contracts, Policy, and Provenance: An AI Video Governance Framework for CMOs

Drafting a celebrity contract for AI video campaigns in 2026 requires specific clauses that traditional endorsement deals lack. These include restrictions on “retraining” or “fine-tuning” the celebrity's digital model beyond the agreed dataset. Contracts must also mandate watermarking and C2PA-style content credentials so that the media's provenance is cryptographically verifiable.
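A “no retraining beyond the agreed dataset” clause can be backed by a check in the training pipeline that compares content hashes of a proposed fine-tuning set against hashes listed in the contract schedule. A minimal sketch; recording licensed footage as SHA-256 hashes in the contract is an assumption rather than established practice, and `fingerprint` and `unlicensed_items` are hypothetical helpers.

```python
from __future__ import annotations

import hashlib


def fingerprint(data: bytes) -> str:
    """SHA-256 of a clip's bytes (in practice, stream the file from storage)."""
    return hashlib.sha256(data).hexdigest()


def unlicensed_items(training_set: dict[str, bytes], licensed_hashes: set[str]) -> list[str]:
    """Names of training items whose content is not in the agreed dataset."""
    return sorted(name for name, data in training_set.items()
                  if fingerprint(data) not in licensed_hashes)
```

Exact hashes only recognize byte-identical files, so re-encoded footage is flagged as unlicensed. That fails closed, which is the safer direction for a contractual restriction.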

An enterprise synthetic media policy for 2026 should serve as the internal North Star for marketing teams. This policy must define prohibited content categories, such as political, adult, or discriminatory themes, and establish a clear approvals matrix. It should also align with the AI video brand-safety legal framework, ensuring that every creative output is reviewed for potentially misleading claims or sensitive-category violations.
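The prohibited-category list and approvals matrix can be expressed as data and evaluated before anything is published. A minimal sketch with hypothetical categories, risk tiers, and roles; the authoritative matrix belongs in the policy document itself.

```python
from __future__ import annotations

PROHIBITED = {"political", "adult", "discriminatory"}

# Hypothetical approvals matrix: which roles must sign off, by risk tier.
APPROVALS = {
    "standard": {"brand_manager"},
    "celebrity_likeness": {"brand_manager", "legal"},
    "sensitive_category": {"brand_manager", "legal", "cmo"},
}


def publication_blockers(categories: set[str], tier: str, approvals: set[str]) -> list[str]:
    """Return reasons the asset cannot ship; an empty list means it may be published."""
    blockers = [f"prohibited category: {c}" for c in sorted(categories & PROHIBITED)]
    missing = APPROVALS[tier] - approvals
    blockers += [f"missing approval: {r}" for r in sorted(missing)]
    return blockers
```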

Technical provenance is the final layer of trust. By embedding C2PA metadata at the point of export, enterprises can provide a tamper-evident history of the video. This complements statutory labels and platform-level disclosures, such as Instagram's “AI info” label or YouTube's “altered or synthetic content” disclosure. Solutions like TrueFan AI demonstrate ROI through these pre-validated security and consent architectures, reducing legal latency for global brands.
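C2PA manifests are produced with dedicated tooling, and their structure is defined by the C2PA specification, so the sketch below does not attempt to reproduce the format. It only illustrates the underlying idea of tamper evidence: bind a hash of the exported video to a signed set of claims, so that verification fails if either the video or the claims change. It uses an HMAC with a shared key from Python's standard library as a stand-in for the certificate-based signatures C2PA actually uses; the manifest fields are hypothetical.

```python
import hashlib
import hmac
import json


def _canonical(body: dict) -> bytes:
    # Sorted keys and fixed separators so signing and verifying see identical bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_manifest(video: bytes, claims: dict, key: bytes) -> dict:
    """Bind the video's hash and the claims together under an HMAC tag."""
    body = {"content_sha256": hashlib.sha256(video).hexdigest(), "claims": claims}
    return {**body, "tag": hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()}


def verify_manifest(video: bytes, manifest: dict, key: bytes) -> bool:
    """True only if neither the claims nor the video bytes have changed."""
    body = {"content_sha256": manifest["content_sha256"], "claims": manifest["claims"]}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return (hmac.compare_digest(expected, manifest["tag"])
            and manifest["content_sha256"] == hashlib.sha256(video).hexdigest())
```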

Sources:

- C2PA: Content provenance and authenticity standards

- Gartner: Information security spending in India (2026)

6. Implementation: The Enterprise AI Video Legal Checklist

To ensure AI video marketing compliance in India, CMOs should follow a rigorous pre-launch checklist. This begins with rights clearance and a Data Protection Impact Assessment (DPIA). Enterprises must verify that their vendors hold ISO/IEC 27001 certification or a SOC 2 attestation report and can support real-time content moderation to prevent brand-safety breaches.

The enterprise AI video legal checklist includes:

- Pre-production: Obtain “consent-to-clone” licenses; verify background IP; set up age-gating for minors.

- Production: Attach consent IDs to templates; embed C2PA credentials; store encrypted render logs.

- Distribution: Implement platform-specific disclosures; test 2-hour takedown readiness; sync with 24/7 grievance officers.

- Governance: Conduct quarterly audits of the consent registry; update blocklists for AI prompts; train agency partners on DPDP standards.
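Teams that want this checklist to act as a release gate rather than a document can encode it as data. A minimal sketch; the stage names mirror the list above, while the item keys and the `launch_blockers` helper are hypothetical.

```python
from __future__ import annotations

# Hypothetical item keys, one per checklist entry above.
CHECKLIST = {
    "pre_production": ["consent_to_clone_license", "background_ip_verified", "age_gating"],
    "production": ["consent_ids_attached", "c2pa_embedded", "render_logs_encrypted"],
    "distribution": ["platform_disclosures", "takedown_drill_passed", "grievance_officer_synced"],
    "governance": ["quarterly_registry_audit", "prompt_blocklist_updated", "agency_dpdp_training"],
}


def launch_blockers(completed: set[str]) -> dict[str, list[str]]:
    """Map each stage to its incomplete items; an empty dict means ready to launch."""
    return {
        stage: [item for item in items if item not in completed]
        for stage, items in CHECKLIST.items()
        if any(item not in completed for item in items)
    }
```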

Adopting a consent-first AI video platform is the most effective way to automate these controls. By integrating these steps into the workflow, brands can move from reactive risk management to proactive value creation. This approach ensures that the CMO's AI video governance framework is not just a document but a functional part of the marketing technology stack.

Conclusion: The Path to Consent-First Innovation

The era of “move fast and break things” in AI marketing is over. In 2026, the winners are the enterprises that prioritize legal integrity as much as creative output. By aligning with the DPDP Act, mastering the IT Rules' takedown timelines, and respecting the evolving personality rights of talent, brands can unlock the true potential of hyper-personalization.

Building a consent-first AI video platform is no longer a luxury—it is the baseline for enterprise operations. As the regulatory landscape continues to mature, the focus will remain on transparency, auditability, and the fundamental right of individuals to control their digital identities. For the modern CMO, this is the ultimate playbook for sustainable, brand-safe growth in the age of synthetic media.

Frequently Asked Questions

What are the penalties for non-compliance with AI celebrity video rights in India?

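Under the DPDP Act 2023, maximum penalties depend on the breach: up to ₹250 crore for failing to take reasonable security safeguards to protect personal data, up to ₹200 crore for breaching the additional obligations relating to children, and up to ₹50 crore for other breaches, including violations of consent requirements. Additionally, unauthorized use of a celebrity's likeness can lead to heavy civil damages and immediate court-ordered injunctions.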

How does the 2-hour takedown rule affect my marketing campaigns?

The 2-hour rule applies to non-consensual deepfakes. If your campaign is flagged as unauthorized (even erroneously), you must have a rapid response team to provide proof of consent to the platform. Failure to do so will result in immediate removal and potential platform-level penalties.

Can I use AI to make a celebrity speak a language they don't actually know?

Yes, provided your contract specifically grants rights for “multilingual synthetic voice cloning.” For example, a consent architecture can allow celebrities to “speak” in 175+ languages while maintaining their unique vocal characteristics, all within a legally cleared framework.

Do I need to label every AI-generated video, even if it looks real?

Yes. MeitY's 2026 amendments mandate clear and prominent labelling for all synthetic media. This transparency requirement is designed to prevent consumer deception, regardless of how realistic the AI video appears.

Is C2PA mandatory for Indian enterprises in 2026?

While C2PA is not explicitly mandated by the IT Act, it is fast becoming the industry standard for due diligence. Using C2PA metadata helps enterprises demonstrate compliance with Indian AI video marketing requirements if their content is ever challenged or flagged.