Vernacular Video Automation: The Enterprise Blueprint for Voice Commerce in Bharat’s Tier-2/3 Cities

Estimated reading time: ~16 minutes

Key Takeaways

- Bharat’s tier-2/3 users drive voice-first discovery; vernacular video automation converts intent to checkout at scale.

- Multilingual AI video generators, Indian AI avatars, and voice cloning deliver hyper-local, culturally accurate content.

- A robust conversational shopping stack personalizes videos and offers across WhatsApp, apps, and smart speakers.

- Winning discovery requires voice SEO in regional languages with structured data and code-mixed queries.

- Follow a phased roadmap for tier-2 voice adoption with governance, DPDP compliance, and ROI tracking.

In 2026, tier-2 cities drive 45% of India’s voice queries as shoppers speak naturally in Hindi, Hinglish, and regional dialects, and converting that discovery into checkout demands vernacular video automation. This shift in consumer behavior signals that the era of English-centric digital storefronts is ending, replaced by a landscape where natural language commerce in India is the primary driver of growth.

Enterprises that fail to adapt to this “voice-first, vernacular-always” reality risk alienating the next 500 million shoppers who prioritize ease of interaction over traditional navigation. This blueprint shows how enterprises can deploy vernacular video automation as the core engine for voice-led, multilingual shopping in Bharat, ensuring every digital touchpoint feels native, personal, and trustworthy.

1. The Bharat Shift: Scaling Vernacular Voice Commerce in India

The digital landscape in India has undergone a fundamental transformation, moving away from text-heavy interfaces toward immersive, auditory, and visual experiences. As of 2026, the “Bharat” user—typically residing in tier-2 and tier-3 cities—has bypassed the desktop era entirely, relying on voice as the most natural input for mobile commerce.

Digital video is set to overtake TV by 2030 in India, with the nation already contributing 25% of global YouTube content. This explosion in video consumption, coupled with the fact that tier-2 cities drive 45% of voice queries, signals an urgent demand for local-language content that can keep pace with real-time shopping intents.

For a shopper in Indore or Coimbatore, the traditional e-commerce journey is often fraught with linguistic friction. When a user asks in Hinglish, “Bhaiya, sasta 5G phone batao, camera accha ho,” they expect more than a list of search results; they expect a 20–30 second regional dialect shopping video that highlights 2–3 options, explains the value in their dialect, and provides a direct WhatsApp link to complete the purchase.

This shift is further validated by the launch of conversational assistants like Amazon Rufus in India, which suggests that the future of retail is assistant-led. To win in this environment, brands must move beyond simple translation and embrace a comprehensive strategy for vernacular voice commerce in India that integrates high-speed video generation with deep linguistic nuance.

Sources:

- Fortune India: Tier-2 cities drive 45% of voice queries

- Moneycontrol: India produces 25% of global YouTube content

- AdTechToday: 2026 outlook for India’s digital growth

- Social Beat: Digital marketing trends 2026 in India

- About Amazon India: Rufus AI shopping assistant launch

- TrueFan AI: Voice commerce in vernacular India guide

2. Defining the Core: The Mechanics of Vernacular Video Automation

Vernacular video automation is the automated, AI-driven generation and delivery of localized, hyper-personalized product or service videos in Indian regional languages and dialects at enterprise scale. This process utilizes advanced multilingual AI video generators, Indian AI avatars, and AI voice cloning for Indian accents with perfect lip-sync to create content that resonates culturally and linguistically.

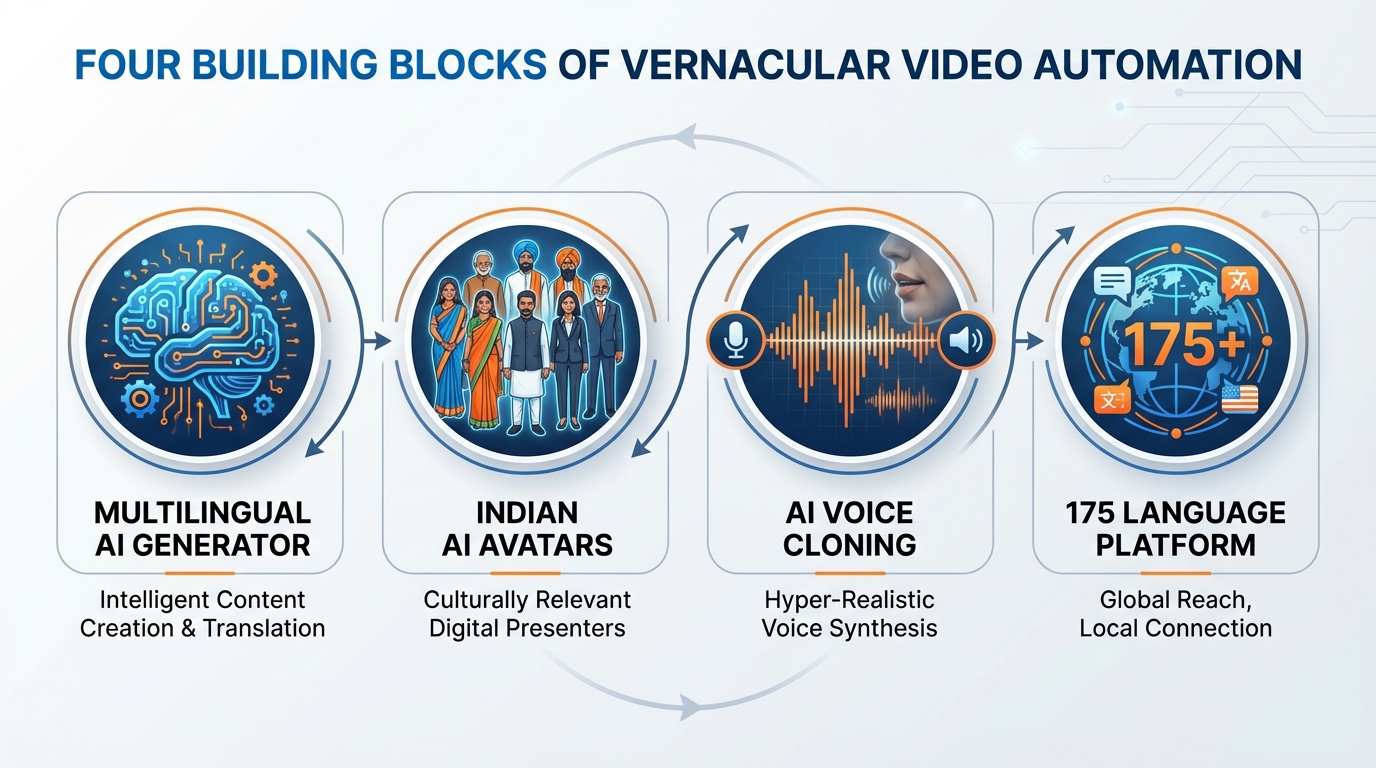

The architecture of a modern vernacular video system rests on four critical building blocks. First is the multilingual AI video generator, a system capable of ingesting large datasets, such as product feeds, CSV files, or URLs, and rendering localized videos in minutes. This allows for batch variant generation, where thousands of unique videos can be produced for different customer segments simultaneously.

Second, the Indian AI avatar serves as the virtual face of the brand. Unlike generic global avatars, these are customized for Indian cultural cues, including appropriate attire, gestures, and facial expressions that align with regional sensibilities. Third, AI voice cloning for Indian accents gives that avatar its voice, so it speaks Hindi, Tamil, or Marathi with the correct prosody and pronunciation of local names and places.

Finally, a 175-language video platform provides the necessary infrastructure for rendering, storage, and distribution. This pipeline ensures that whether a brand is targeting a Bhojpuri speaker in Bihar or a Tulu speaker in Karnataka, the video is delivered with low latency and high fidelity. Platforms like TrueFan AI enable enterprises to orchestrate these complex workflows, ensuring that every video generated is brand-safe, compliant, and optimized for conversion.

By automating these elements, enterprises can produce over 1,000 creative variants in hours rather than weeks. This speed-to-market is essential for festive sales, flash offers, and personalized retargeting, where the relevance of the message is directly tied to the timing of the delivery.
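To make the idea of batch variants concrete, here is a minimal sketch of how a variant matrix could be enumerated before rendering. The `RenderJob` record, the SKU codes, and the segment names are hypothetical and do not come from any specific platform.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class RenderJob:
    """One video variant to render (hypothetical structure)."""
    sku: str
    language: str
    segment: str


def plan_variants(skus, languages, segments):
    """Enumerate every SKU x language x segment combination."""
    return [RenderJob(s, l, g) for s, l, g in product(skus, languages, segments)]


jobs = plan_variants(
    skus=["PHONE-5G-01", "PHONE-5G-02"],      # illustrative SKUs
    languages=["hi", "ta", "mr"],             # Hindi, Tamil, Marathi
    segments=["new_user", "cart_abandoner"],  # illustrative segments
)
print(len(jobs))  # 2 * 3 * 2 = 12 variants
```

The count grows multiplicatively, which is why a modest catalog, a handful of languages, and a few segments quickly reach the 1,000-variant range.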

Sources:

- TrueFan AI: App overview

- TrueFan AI: AI video compliance for enterprises

- TrueFan AI: Vernacular video automation 2024

3. Orchestrating Conversational Shopping AI Personalization

To truly capture the Bharat market, brands must integrate video into a broader framework of conversational shopping AI personalization. This involves the real-time tailoring of product discovery, offers, and assistance via voice or chat, adapted specifically to the user’s language, dialect, and intent across all digital touchpoints.

The architecture for this personalization layer begins with data signals. By analyzing language preferences, geographic location, and past purchase behavior, the system determines the optimal dialect and tone for the interaction. This is followed by a sophisticated NLU (Natural Language Understanding) and ASR (Automatic Speech Recognition) stack that can parse code-mixed speech, such as Hinglish or Tamlish, extracting specific entities like brand names or SKU attributes.
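As a rough illustration of entity extraction from code-mixed text, the sketch below uses simple rules on an already-transcribed query. A production NLU stack would rely on trained models; the keyword lists and the output fields here are assumptions made for the example.

```python
import re

# Hypothetical keyword lexicons; a real system would learn these from data.
FEATURE_WORDS = {"camera": "camera", "battery": "battery", "5g": "5g"}
CHEAP_WORDS = {"sasta", "saste", "budget"}

# Matches "under 15000", "15000 se kam", or "15000 ke andar".
BUDGET_PATTERN = re.compile(r"under\s+(\d+)|(\d+)\s+(?:se\s+kam|ke\s+andar)")


def parse_query(text: str) -> dict:
    """Extract a budget cap, requested features, and a price-sensitivity flag."""
    lowered = text.lower()
    tokens = re.findall(r"[a-z0-9]+", lowered)
    match = BUDGET_PATTERN.search(lowered)
    budget = int(match.group(1) or match.group(2)) if match else None
    features = sorted({FEATURE_WORDS[t] for t in tokens if t in FEATURE_WORDS})
    price_sensitive = budget is not None or any(t in CHEAP_WORDS for t in tokens)
    return {"budget_max": budget, "features": features, "price_sensitive": price_sensitive}


print(parse_query("Under 15000 mein accha camera phone dikhao"))
print(parse_query("Bhaiya, sasta 5G phone batao, camera accha ho"))
```

The structured result, rather than the raw text, is what a downstream decisioning step would consume.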

Once the intent is understood, the decisioning engine selects the appropriate offer logic and content template. This triggers the vernacular video automation pipeline via API, filling a pre-defined script with personalized details like the customer's name, their city, and a specific discount code. The final output is then delivered through the user's preferred channel, whether it be a WhatsApp deep link, an in-app overlay, or a smart speaker notification.
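The hand-off from decisioning to rendering and delivery might look like the sketch below. The request field names are hypothetical and not taken from any vendor's API; the link builder uses WhatsApp's public click-to-chat URL format (`https://wa.me/<number>?text=...`).

```python
from urllib.parse import quote


def build_render_request(template_id, language, name, city, discount_code):
    """Assemble a render request body; every field name here is hypothetical."""
    return {
        "template_id": template_id,
        "language": language,
        "variables": {"name": name, "city": city, "discount_code": discount_code},
    }


def whatsapp_chat_link(business_number: str, message: str) -> str:
    """Build a click-to-chat URL: digits only, including the country code."""
    digits = "".join(ch for ch in business_number if ch.isdigit())
    return f"https://wa.me/{digits}?text={quote(message)}"


request_body = build_render_request("welcome_v1", "hi", "Priya", "Indore", "DIWALI10")
link = whatsapp_chat_link("+91 98765 43210", "Order DIWALI10")
print(request_body)
print(link)
```

Keeping the render request and the delivery link as separate steps lets the same video be reused across WhatsApp, in-app overlays, and other channels.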

Smart speaker commerce integration is a vital component of this omnichannel strategy. While the initial discovery might happen via an Alexa or Google Home device, the journey often transitions to a mobile device for rich media consumption and payment. For instance, a user might ask their smart speaker to reorder groceries, receiving a voice confirmation followed by a WhatsApp video showcasing a personalized “bundle offer” based on their history, which they can then pay for via UPI in one click.
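For the payment step, one common approach is a UPI deep link that opens the shopper's UPI app with the payee and amount pre-filled; the shopper still authorizes the payment inside that app. The sketch below assumes the widely used `upi://pay` parameters (`pa`, `pn`, `am`, `cu`, `tn`); the payee address and merchant name are made up.

```python
from urllib.parse import quote, urlencode


def upi_pay_link(payee_vpa: str, payee_name: str, amount_inr: float, note: str) -> str:
    """Build a UPI intent link with payee, amount, currency, and a note."""
    params = {
        "pa": payee_vpa,            # payee virtual payment address
        "pn": payee_name,           # payee display name
        "am": f"{amount_inr:.2f}",  # amount with two decimals
        "cu": "INR",                # currency code
        "tn": note,                 # transaction note shown to the payer
    }
    # Keep "@" readable in the address; spaces are encoded as %20.
    return "upi://pay?" + urlencode(params, safe="@", quote_via=quote)


pay_link = upi_pay_link("store@bank", "Demo Kirana", 499, "Bundle offer")
print(pay_link)
```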

This level of integration ensures that the shopping experience is not just automated, but truly conversational. It mirrors the experience of walking into a local kirana store where the shopkeeper knows your name, your preferences, and speaks your language—but scales that intimacy to millions of users.

4. The Content Playbook for Regional Dialect Shopping Videos

Creating high-impact regional dialect shopping videos requires a shift from traditional production mindsets to a “script lattice” approach. Instead of filming a single commercial, enterprises develop a flexible framework where variables can be swapped out dynamically to create thousands of hyper-local versions.

The workflow for Hinglish AI video creation begins with a base script that includes placeholders for dynamic data. For example: “Namaste [name], aapke sheher [city] mein [product] par ab mil raha hai [discount] ka off.” This base is then “forked” into various regional dialects. A Hindi version might be tweaked with Awadhi or Bhojpuri idioms for specific northern clusters, while the Tamil or Telugu versions are rewritten by linguists to ensure the cultural nuances and local slang are captured accurately.
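A minimal sketch of the placeholder-filling step, using the base script quoted above. The dictionary of dialect forks holds only the Hinglish base here; additional forks would be written by linguists and added under their own keys. The function name and the sample values are illustrative.

```python
import re

# Base script from the example above; other dialect forks would be added here.
SCRIPT_FORKS = {
    "hinglish": (
        "Namaste [name], aapke sheher [city] mein [product] "
        "par ab mil raha hai [discount] ka off."
    ),
}


def fill_script(template: str, values: dict) -> str:
    """Replace each [placeholder]; fail loudly if a value is missing."""
    def substitute(match):
        key = match.group(1)
        if key not in values:
            raise KeyError(f"missing value for [{key}]")
        return str(values[key])

    return re.sub(r"\[(\w+)\]", substitute, template)


line = fill_script(
    SCRIPT_FORKS["hinglish"],
    {"name": "Priya", "city": "Indore", "product": "5G phone", "discount": "10%"},
)
print(line)
```

Raising on a missing value is a deliberate choice: a video that says "Namaste [name]" out loud is worse than a job that fails before rendering.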

Visual consistency is maintained through a library of product B-roll and brand-safe templates. However, the Indian AI avatar on screen must change its delivery to match the dialect. TrueFan AI’s 175+ language support and Personalised Celebrity Videos allow brands to use recognizable faces or custom avatars that perform with perfect lip-sync across all these variations, significantly increasing the perceived authenticity of the content.

Quality assurance in this automated pipeline is paramount. Enterprises must implement automated checks for name and place pronunciation, ensuring that the AI doesn’t mispronounce “Coimbatore” or “Thiruvananthapuram,” which would immediately break the illusion of locality. Furthermore, the use of CSV-to-video and URL-to-video tools allows marketing teams to upload a spreadsheet of 5,000 customer records and receive 5,000 unique, personalized videos in under an hour.
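One simple way to approach the pronunciation check is to gate each CSV row on whether its place name has already been reviewed by a linguist. The sketch below assumes a hypothetical set of reviewed names and a CSV with `name` and `city` columns; it only triages rows and does not assess audio quality.

```python
import csv
import io

# Hypothetical set of place names whose pronunciation has been reviewed.
REVIEWED_PLACES = {"coimbatore", "indore", "thiruvananthapuram"}


def triage_rows(csv_text: str, reviewed: set):
    """Split rows into render-ready and needs-review by city name."""
    ready, needs_review = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        city = row["city"].strip().lower()
        (ready if city in reviewed else needs_review).append(row)
    return ready, needs_review


sample = "name,city\nPriya,Indore\nArun,Coimbatore\nMeera,Hubballi\n"
ready, needs_review = triage_rows(sample, REVIEWED_PLACES)
print(len(ready), "ready;", len(needs_review), "held for review")
```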

This production shift is not just about volume; it is about “content-market fit.” Data from 2026 shows that hyper-local Hindi and code-mixed content raise engagement rates by over 40% in tier-2 cities compared to standard Hindi or English content. By leveraging a multilingual AI video generator, brands can finally bridge the gap between national reach and local relevance.

Sources:

- TrueFan AI: Vernacular video automation 2024

- Fluxnote: Vernacular content creation in India (2026)

- Social Beat: Digital marketing trends 2026

- SlideShare: Future of video production in India (2026+)

5. Mastering Voice SEO in Regional Languages for Discovery

As voice search becomes the primary discovery tool for Bharat, mastering voice SEO in regional languages is no longer optional. This involves optimizing digital assets so that AI assistants and search engines can accurately parse and surface your brand’s vernacular content in response to natural language queries.

The technical foundation of voice SEO lies in structured data. Enterprises should implement comprehensive schema.org markup, including the FAQPage and QAPage types and the speakable property, not just in English but in Hindi and other major regional languages. This helps search engines identify specific sections of content that are suitable for text-to-speech playback on smart speakers or voice-enabled browsers.
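As an illustration of this kind of markup, the sketch below builds a schema.org `FAQPage` object in JSON-LD with an `inLanguage` tag and a `speakable` specification. The CSS selector and the answer text are placeholders, and how search engines treat `speakable` varies by language and region, so this shows the shape of the markup only.

```python
import json


def faq_jsonld(language, qa_pairs, speakable_selectors):
    """Build a schema.org FAQPage as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": language,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": speakable_selectors,
        },
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


doc = faq_jsonld(
    "hi-Latn",  # Hindi written in Latin script
    [("Sabse sasta 5G mobile kaunsa hai?", "Placeholder answer text goes here.")],
    [".faq-answer"],  # hypothetical selector for the spoken section
)
print(json.dumps(doc, ensure_ascii=False, indent=2))
```

The printed JSON would be embedded in the page inside a `script` element of type `application/ld+json`.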

Content operations must also adapt to the way people actually speak. Queries in tier-2 cities are often conversational and code-mixed. Instead of searching for “Best 5G phones,” a user might ask, “Sabse sasta 5G mobile kaunsa hai?” or “Under 15000 mein accha camera phone dikhao.” Creating dedicated landing pages or FAQ sections that mirror these exact phrases, and embedding a corresponding vernacular video on each, can significantly boost visibility in voice search results. For festival peaks, follow best practices for festival-focused voice SEO in regional languages.

Local SEO also plays a critical role. For businesses with a physical presence, Google Business Profile (formerly Google My Business) categories and descriptions should be localized. Embedding regional dialect shopping videos on store-specific pages, complete with click-to-WhatsApp buttons, creates a seamless transition from a voice search to a localized shopping conversation.

By aligning technical SEO with the linguistic realities of the Indian market, brands improve their chances of being the first answer provided when a shopper asks a question in their native tongue. This builds immediate authority and trust, which are the cornerstones of natural language commerce in India.

Sources:

- Adzjunction: Regional language search and voice SEO in India

- Doors Studio: Voice search SEO—regional, conversational

6. A Strategic Roadmap for Tier-2 Voice Adoption

Implementing a full-scale vernacular strategy requires a phased approach that balances speed with governance. Successful tier-2 voice adoption strategies begin with a deep dive into language TAM (Total Addressable Market) sizing and intent mapping.

Phase 0: Foundation (Weeks 1-2)

The first step is to analyze existing search logs and store data to prioritize languages. While Hindi and Hinglish are often the starting point, the data might reveal a large untapped opportunity in Marathi or Telugu. During this phase, enterprises must also establish governance pillars, ensuring all AI-generated content follows consent-first protocols and information security standards such as ISO/IEC 27001.
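Language prioritization from search logs can start as a simple share-of-queries ranking. The sketch below assumes each log entry already carries a detected language code in a hypothetical `lang` field; the sample log is invented for illustration.

```python
from collections import Counter


def rank_languages(query_log):
    """Return (language, share of queries) pairs, largest share first."""
    counts = Counter(entry["lang"] for entry in query_log)
    total = sum(counts.values())
    if total == 0:
        return []
    return [(lang, n / total) for lang, n in counts.most_common()]


sample_log = [
    {"lang": "hi"}, {"lang": "hi"}, {"lang": "hi"},
    {"lang": "mr"}, {"lang": "mr"},
    {"lang": "te"},
]
for lang, share in rank_languages(sample_log):
    print(f"{lang}: {share:.0%}")
```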

Phase 1: MVP Launch (Days 1-30)

Launch a pilot program in Hindi and Hinglish using 3–5 core video templates. These should be integrated into high-impact touchpoints like cart-abandonment triggers on WhatsApp or personalized welcome videos in the app. Key performance indicators (KPIs) such as Click-Through Rate (CTR) and Voice-to-Cart conversion should be closely monitored to validate the approach.
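The two pilot KPIs reduce to simple ratios. The sketch below assumes CTR is clicks divided by video impressions and Voice-to-Cart conversion is cart additions divided by voice sessions; these definitions and the counts are assumptions for illustration, not reported results.

```python
def rate(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when there is no traffic."""
    return numerator / denominator if denominator else 0.0


# Hypothetical pilot counts.
video_impressions, video_clicks = 2000, 120
voice_sessions, cart_additions = 900, 45

ctr = rate(video_clicks, video_impressions)
voice_to_cart = rate(cart_additions, voice_sessions)
print(f"CTR: {ctr:.1%}, Voice-to-Cart: {voice_to_cart:.1%}")
```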

Phase 2: Regional Expansion (Days 31-90)

Scale the program to the top 8–10 regional languages. This involves creating “dialect forks” for the scripts and building a comprehensive phrasebook of local idioms. A/B testing different hooks and offers in various languages will provide insights into regional price sensitivities and product preferences.
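When comparing two hooks or offers, a two-proportion z-test is one standard way to judge whether a difference in conversion is likely to be noise. The sketch below uses only the standard library; the conversion counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - normal_cdf)


# Hypothetical test: hook A vs. hook B in one language, 1,000 sends each.
z, p_value = two_proportion_z(conv_a=50, n_a=1000, conv_b=80, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

Running the same test per language keeps a strong result in one region from masking a weak one in another.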

Phase 3: Full Automation and Optimization (Days 91-180)

At this stage, the vernacular video automation pipeline should be fully integrated with the CRM, allowing for real-time, event-driven video generation at a scale of thousands of variants per hour. Solutions like TrueFan AI demonstrate ROI through significant reductions in creative production hours—often saving over 3,800 hours annually by replacing manual shoots with AI-driven localization.

The ROI of such a program is measured not just in immediate sales, but in long-term efficiency and brand equity. By reducing the cost-per-variant and increasing the relevance of every communication, enterprises can achieve a sustainable competitive advantage in the rapidly evolving Bharat market.

For compliance-by-design across these phases, align with DPDP-compliant personalization strategies and interactive video data capture practices.
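A compliance-by-design pipeline typically gates on consent and passes only the fields a video actually needs. The sketch below is one possible shape for that step, with hypothetical field names and a keyed hash as the customer reference; it illustrates data minimization and is not a statement of what the DPDP Act requires.

```python
import hashlib
import hmac

# Only these fields are forwarded to the renderer (hypothetical allow-list).
ALLOWED_FIELDS = {"first_name", "city", "language"}


def pseudonymize(customer_id: str, secret: bytes) -> str:
    """Derive a stable reference without exposing the raw customer ID."""
    return hmac.new(secret, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimal_payload(record: dict, secret: bytes):
    """Return a minimized payload, or None when consent is absent."""
    if not record.get("consent_personalized_video"):
        return None
    payload = {key: record[key] for key in ALLOWED_FIELDS if key in record}
    payload["customer_ref"] = pseudonymize(record["customer_id"], secret)
    return payload


record = {
    "customer_id": "C-1001", "first_name": "Priya", "city": "Indore",
    "language": "hi", "phone": "9876543210", "consent_personalized_video": True,
}
print(minimal_payload(record, secret=b"demo-secret"))
```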

Sources:

- TrueFan AI: AI video compliance for enterprise

- Fortune India: Native language search rise, tier-2 voice share

- Social Beat: India digital marketing trends 2026

Conclusion

Natural language commerce in India succeeds when every shopper hears and sees your brand in their own words and accents—vernacular video automation makes that possible at enterprise scale. As we move through 2026, the ability to communicate authentically with the “Bharat” user is no longer a luxury; it is the fundamental requirement for any enterprise seeking to maintain market leadership.

By integrating a multilingual AI video generator with a robust 175-language video platform, brands can transform their digital presence from a static storefront into a dynamic, conversational experience. The roadmap is clear: prioritize voice, embrace vernacular, and automate the video pipeline to meet the shopper exactly where they are.

Request an enterprise demo to stand up a multilingual AI video generator and 175-language video platform integrated with your conversational shopping AI personalization stack today.

Frequently Asked Questions

What is the primary benefit of using a multilingual AI video generator for Indian e-commerce?

The primary benefit is the ability to achieve hyper-localization at scale. A multilingual AI video generator allows a brand to speak to millions of customers in their specific dialect (like Hinglish, Bengali, or Tamil) without the prohibitive costs and time requirements of traditional video production. This significantly boosts engagement and trust in tier-2 and tier-3 markets.

How does vernacular video automation handle different Indian accents?

Advanced systems use AI voice cloning for Indian accents to replicate the specific prosody, rhythm, and pronunciation patterns of various regions. This helps the audio sound natural and relatable to a local listener, avoiding the “robotic” feel of standard text-to-speech engines.

Is AI-generated content compliant with Indian data protection laws?

It can be, provided the enterprise uses a platform with robust governance. Leading solutions ensure that all Indian AI avatars and celebrity likenesses are used with explicit consent. Furthermore, data residency and PII minimization practices are employed to align with the Digital Personal Data Protection (DPDP) Act and with attestation frameworks such as SOC 2. See guidance for DPDP-compliant personalization.

Can these videos be integrated with WhatsApp and UPI for a complete shopping journey?

Absolutely. The most effective use case for vernacular video automation is as a bridge in the conversational journey. A personalized video can be sent via WhatsApp with a deep link that takes the user directly to a pre-filled cart, where they can complete the transaction using UPI, creating a frictionless “voice-to-video-to-payment” flow.

How does TrueFan AI support large-scale enterprise requirements?

TrueFan AI provides a comprehensive 175-language video platform designed for high-volume, low-latency rendering. It offers API-first integration for CRMs, ISO 27001 certified security, and the ability to generate over 100,000 video variants, making it the preferred choice for enterprises looking to dominate the vernacular commerce space in India.