How to Win AI Overviews with Answer Engine Optimization Video Content: Zero-Click Strategies, Schema, E-E-A-T, and Voice Search for Enterprises

Estimated reading time: ~12 minutes

Key Takeaways

- Design videos for extractive answers with question-led chapters and synchronized on-screen text.

- Implement advanced schema (VideoObject, Clip, SeekToAction) to make videos eligible for Key Moments and other video rich results.

- Prioritize zero-click visibility and brand citation share across AI Overviews and voice assistants.

- Strengthen trust with E-E-A-T signals—expert intros, credentials, sources, and update transparency.

- Localize for India with multilingual, voice-first scripts and fast-loading video experiences.

The digital landscape has shifted from a “search and click” economy to a “query and consume” ecosystem. In 2026, AI Overviews, featured snippets, and voice assistants increasingly surface video answers directly on the SERP, which turns traditional click-through rate into a secondary metric. For modern enterprises, answer engine optimization (AEO) for video content is no longer optional; it is the primary vehicle for securing brand citations in an era where AI-generated summaries dominate user attention.

Winning in AI Overviews in 2026 requires a fundamental pivot from broad, entertainment-led videos to extractive, high-authority assets. This playbook lays out a step-by-step system for structuring and scaling enterprise videos for AI extraction, using advanced schema, question-led chapters, and multilingual, voice-ready scripts so your brand remains the definitive source of truth.

The Evolution of Answer Engine Optimization Video Content in 2026

Answer Engine Optimization (AEO) for video is the strategic process of structuring and annotating visual content so AI systems can extract concise, trustworthy answers. Unlike traditional SEO, which focuses on driving traffic to a landing page, AEO prioritizes precise, self-contained answers that AI systems such as Gemini, Perplexity, and ChatGPT search can cite and display.

By 2026, video production must shift toward an “Answer-First” architecture. Open with a 20–60 second intro that delivers the answer immediately, and pair it with on-screen text that mirrors the spoken transcript. When audio and visuals state the same claim, multimodal AI models can cross-check the information across both streams, which can increase the likelihood of a featured citation.

AEO also demands a rigorous approach to semantic search: moving beyond keyword stuffing to topical authority. Your videos must address the “why” and “how” with surgical precision, using question-led chapters whose timestamps are titled as natural-language queries. This structure lets AI systems jump to the precise segment and surface “Key Moments” that answer the user’s specific intent without a full video view.
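
As a minimal sketch of question-led chaptering for a YouTube upload, the Python below turns question-titled segments into a description chapter list. It assumes YouTube's published chapter rules (first timestamp at 0:00, at least three chapters, each at least 10 seconds long); the `Chapter` class and `build_chapter_block` helper are hypothetical names, not part of any YouTube API.

```python
from dataclasses import dataclass


@dataclass
class Chapter:
    start_seconds: int  # where the answer segment begins
    question: str       # chapter title phrased as a natural-language query


def format_timestamp(seconds: int) -> str:
    """Render seconds as M:SS, or H:MM:SS for videos longer than an hour."""
    hours, rem = divmod(seconds, 3600)
    minutes, secs = divmod(rem, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def build_chapter_block(chapters: list[Chapter], video_length: int, min_len: int = 10) -> str:
    """Return description-ready chapter lines, enforcing the assumed YouTube rules."""
    if len(chapters) < 3:
        raise ValueError("YouTube needs at least three chapters")
    if chapters[0].start_seconds != 0:
        raise ValueError("The first chapter must start at 0:00")
    boundaries = [c.start_seconds for c in chapters] + [video_length]
    for i, chapter in enumerate(chapters):
        # Also guarantees ascending order, since every gap must be >= min_len.
        if boundaries[i + 1] - boundaries[i] < min_len:
            raise ValueError(f"Chapter '{chapter.question}' is shorter than {min_len}s")
    return "\n".join(f"{format_timestamp(c.start_seconds)} {c.question}" for c in chapters)


chapters = [
    Chapter(0, "What is GST for small businesses?"),
    Chapter(45, "How do I calculate GST on an invoice?"),
    Chapter(130, "When is the GST return due?"),
]
print(build_chapter_block(chapters, video_length=240))
```

Validating before publishing catches chapters that are too short or out of order. YouTube ignores a chapter list that breaks its rules, and that failure is easy to miss.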

In the Indian market, this evolution is particularly critical. With the rapid expansion of 5G and a massive influx of new internet users, search behavior is heavily skewed toward voice and video. Enterprises should publish multilingual transcripts and captions to serve this linguistic diversity, so that their AEO strategy covers regional dialects and the “Hinglish” phrasing that AI engines are increasingly able to parse.

AI Overviews Video SEO 2026: Navigating the Zero-Click Landscape

The rise of AI Overviews (AIO) has made “zero-click” search a reality for enterprises. In 2026, Google’s Gemini-powered systems appear to draw on transcripts, chapters, and structured data when quoting definitions and steps directly in the search interface. To thrive, brands must adopt zero-click optimization strategies that treat on-SERP visibility as a primary success metric.

Design your videos to be “complete answers” on the SERP. Build in strong brand attribution: logo watermarks, a verbal brand mention within the first ten seconds, and lower-third graphics that establish authority. Even if a user never clicks through to your website, an AI Overview that cites your video still credits your brand as the source, building mental availability and trust.

To maximize AI citations, provide extractive TL;DR summaries (40–60 words) in the metadata and in the on-page text surrounding the video. Use data tables and short bulleted lists that map to “People Also Ask” (PAA) style questions. When an AI engine looks for a definitive answer to a complex query, it tends to favor the path of least resistance: content that is already formatted for extraction.
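
Here is a small Python check for the 40 to 60 word TL;DR target, intended as a sketch. `check_tldr` is a hypothetical helper, and splitting on whitespace is an assumed definition of a "word".

```python
def check_tldr(summary: str, low: int = 40, high: int = 60) -> tuple[bool, int]:
    """Return (within_target, word_count), counting whitespace-separated words."""
    word_count = len(summary.split())
    return low <= word_count <= high, word_count


tldr = (
    "Answer engine optimization for video means structuring clips, chapters, "
    "transcripts, and schema so AI systems can quote a precise answer. Lead with "
    "the answer, mirror it in on-screen text, title chapters as questions, and "
    "mark up each chapter as a Clip so search engines can surface it directly."
)
ok, n = check_tldr(tldr)
print(f"{n} words, {'within' if ok else 'outside'} the target range")
```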

Measurement in this landscape must go beyond traditional CTR. Core KPIs for 2026 include AIO presence rate per topic cluster, citation share across the major AI answer engines (Gemini, Perplexity, ChatGPT), and branded search lift. By also tracking how often your video segments appear as “Key Moments,” you can attribute ROI to brand reach and assisted conversions rather than to direct sessions alone.
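
The Python sketch below shows one way to compute the first two KPIs. It assumes you already collect observations by sampling target queries on each engine and recording which domains each AI answer cites, either manually or with a rank-tracking tool. The `Observation` record, the engine labels, and both functions are hypothetical. Here, citation share counts every listed citation of the brand against all listed citations for that engine.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Observation:
    """One sampled query on one engine (hypothetical record format)."""
    query: str
    cluster: str  # topic cluster, e.g. "gst"
    engine: str   # assumed labels: "google_aio", "perplexity", "chatgpt"
    cited_domains: list[str] = field(default_factory=list)  # sources the answer cited


def aio_presence_rate(obs: list[Observation], brand: str) -> dict[str, float]:
    """Per cluster: share of sampled AI Overview answers that cite the brand."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for o in obs:
        if o.engine != "google_aio":
            continue
        totals[o.cluster] += 1
        if brand in o.cited_domains:
            hits[o.cluster] += 1
    return {cluster: hits[cluster] / n for cluster, n in totals.items()}


def citation_share(obs: list[Observation], brand: str) -> dict[str, float]:
    """Per engine: brand citations divided by all recorded citations."""
    ours = defaultdict(int)
    total = defaultdict(int)
    for o in obs:
        total[o.engine] += len(o.cited_domains)
        ours[o.engine] += o.cited_domains.count(brand)
    return {engine: ours[engine] / n for engine, n in total.items() if n}


obs = [
    Observation("gst rate on services", "gst", "google_aio", ["example.com", "other.com"]),
    Observation("gst late fee", "gst", "google_aio", ["other.com"]),
    Observation("gst late fee", "gst", "perplexity", ["example.com"]),
]
print(aio_presence_rate(obs, "example.com"))  # {'gst': 0.5}
print(citation_share(obs, "example.com"))
```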

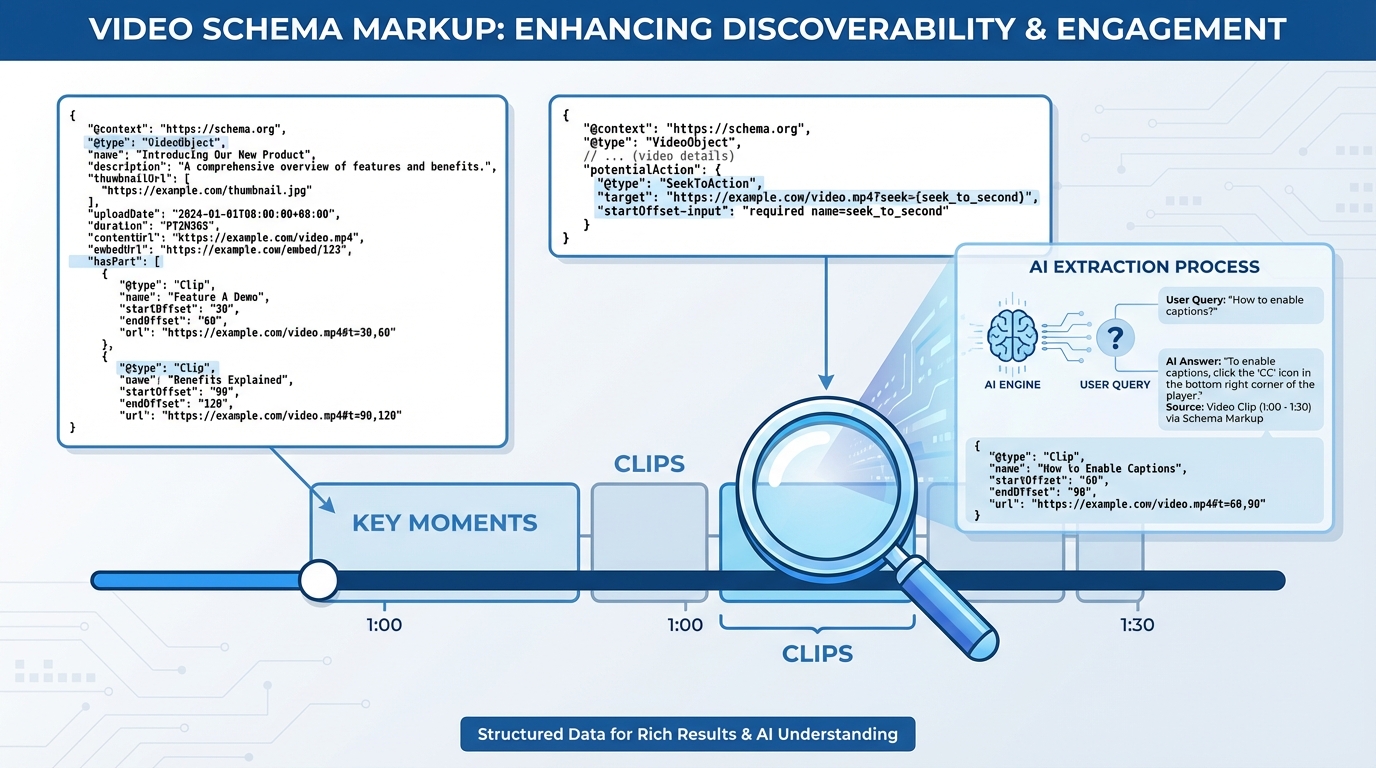

Technical Architecture: Schema Markup Video Content and Featured Snippets

The technical backbone of AEO is video schema markup. Without robust JSON-LD, even the most informative video is much harder for the extractive layers of an AI engine to interpret. Enterprises should implement VideoObject markup together with the Clip and SeekToAction types so that their content is eligible for Key Moments and other enhanced SERP displays.

The VideoObject schema is the baseline and should contain essential fields such as name, description, uploadDate, and thumbnailUrl. To go further, add a Clip object for every chapter. Give each clip a question-form name (e.g., “How to calculate GST for small businesses?”), precise start and end offsets, and a URL that deep-links to its start time. This tells search engines exactly where each answer resides, so they can surface it as a “Key Moment” that users can jump to directly from the search results.
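
The following Python sketch emits VideoObject JSON-LD with one Clip per question-led chapter. The property names (`hasPart`, `startOffset`, `endOffset`, `url`) come from schema.org and Google's video structured data guidance. The function name, the example URLs, and the `?t=` query parameter are placeholders; replace them with your player's own deep-link format.

```python
import json


def video_jsonld(name: str, description: str, upload_date: str, thumbnail_url: str,
                 content_url: str, page_url: str,
                 clips: list[tuple[str, int, int]]) -> dict:
    """VideoObject with one Clip per chapter; clips are (question, start_s, end_s)."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "uploadDate": upload_date,  # ISO 8601 date-time
        "thumbnailUrl": [thumbnail_url],
        "contentUrl": content_url,
        "hasPart": [
            {
                "@type": "Clip",
                "name": question,      # question-form chapter title
                "startOffset": start,  # seconds from the start of the video
                "endOffset": end,
                # Deep link to the same watch page at the clip's start time.
                # Assumes page_url has no existing query string.
                "url": f"{page_url}?t={start}",
            }
            for question, start, end in clips
        ],
    }


markup = video_jsonld(
    name="How GST works for small businesses",
    description="A short explainer on registering for, calculating, and filing GST.",
    upload_date="2026-01-15T08:00:00+05:30",
    thumbnail_url="https://www.example.com/thumbs/gst.jpg",
    content_url="https://www.example.com/video/gst.mp4",
    page_url="https://www.example.com/gst-explainer",
    clips=[
        ("What is GST for small businesses?", 0, 45),
        ("How to calculate GST for small businesses?", 45, 130),
        ("When is the GST return due?", 130, 240),
    ],
)
print(json.dumps(markup, indent=2))
```

The serialized output goes inside a `<script type="application/ld+json">` tag on the watch page.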

HowTo and FAQ markup can add further context. Mapping each step of a process to a specific video segment makes the sequence machine-readable. Note, however, that Google stopped showing HowTo rich results in 2023 and now limits FAQ rich results to well-known government and health sites, so the value of this markup now lies in its structure rather than in SERP enhancements. It may still help AI Overviews and voice assistants that read out instructions step by step, although Google does not document this use.
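
To map steps to segments, a HowTo block can reference a Clip from each step through schema.org's `video` property. This Python sketch assumes that a machine-readable step structure is the goal, because Google no longer renders HowTo rich results. The helper name, URLs, and step wording are illustrative.

```python
import json


def howto_jsonld(title: str, page_url: str,
                 steps: list[tuple[str, str, int, int]]) -> dict:
    """HowTo whose steps each point at the video segment that shows them.

    steps are (step_name, instruction_text, start_s, end_s).
    """
    return {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": title,
        "step": [
            {
                "@type": "HowToStep",
                "position": position,
                "name": step_name,
                "text": text,
                # schema.org's CreativeWork.video accepts a Clip
                "video": {
                    "@type": "Clip",
                    "name": step_name,
                    "startOffset": start,
                    "endOffset": end,
                    "url": f"{page_url}?t={start}",
                },
            }
            for position, (step_name, text, start, end) in enumerate(steps, start=1)
        ],
    }


howto = howto_jsonld(
    "How to file a GST return",
    "https://www.example.com/gst-filing",
    [
        ("Log in to the GST portal", "Sign in with your registered credentials.", 0, 30),
        ("Upload invoice data", "Add outward supplies for the period.", 30, 95),
        ("Submit and pay", "Review the summary, pay any tax due, and file.", 95, 160),
    ],
)
print(json.dumps(howto, indent=2))
```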

For enterprises operating at scale, SeekToAction is especially valuable. Instead of listing every clip, it tells Google how your URLs link to a given timestamp, so Google can identify “Key Moments” automatically from the video itself, even if you haven’t manually defined every clip. Combined with stable, timestamped URLs, this setup keeps your video content ready for multimodal ingestion by search crawlers.
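
Below is a Python sketch of SeekToAction markup that follows the pattern in Google's Key Moments documentation. `potentialAction` holds a `SeekToAction` whose `target` URL contains the `{seek_to_second_number}` placeholder. Google's guidance indicates that it must be able to fetch the video file for automatic key moments, so `contentUrl` is included. The helper name and example URLs are hypothetical.

```python
import json

PLACEHOLDER = "{seek_to_second_number}"


def seek_to_jsonld(name: str, upload_date: str, thumbnail_url: str,
                   content_url: str, url_template: str) -> dict:
    """VideoObject whose SeekToAction tells Google how to deep-link to any second."""
    if PLACEHOLDER not in url_template:
        raise ValueError(f"url_template must contain {PLACEHOLDER}")
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "uploadDate": upload_date,
        "thumbnailUrl": [thumbnail_url],
        "contentUrl": content_url,  # fetchable file, so moments can be found automatically
        "potentialAction": {
            "@type": "SeekToAction",
            "target": url_template,
            "startOffset-input": "required name=seek_to_second_number",
        },
    }


markup = seek_to_jsonld(
    "How GST works for small businesses",
    "2026-01-15T08:00:00+05:30",
    "https://www.example.com/thumbs/gst.jpg",
    "https://www.example.com/video/gst.mp4",
    "https://www.example.com/gst-explainer?t={seek_to_second_number}",
)
print(json.dumps(markup, indent=2))
```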

Sources:

- Official Video Structured Data and Key Moments Documentation

- Video Schema Upgrade with Clip and SeekToAction

Voice Search Video Optimization and Conversational SEO for the Indian Market

India’s search landscape is uniquely shaped by voice. By 2026, voice-driven queries in regional languages are reported to have overtaken text-based searches in many demographics. Consequently, voice search optimization must be a core pillar of your video content strategy. In practice, this means scripting videos for natural conversation and replacing formal corporate jargon with language at a 6th–8th grade reading level.
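
To check English scripts against the 6th to 8th grade target, a rough Flesch-Kincaid grade estimate is enough: grade = 0.39 × (words ÷ sentences) + 11.8 × (syllables ÷ words) − 15.59. This Python sketch uses a heuristic syllable counter (vowel groups minus a silent final "e"), so treat its results as approximate. The formula is calibrated for English and does not apply to Hindi or other regional-language scripts.

```python
import re


def count_syllables(word: str) -> int:
    """Heuristic: count vowel groups, drop a silent final 'e' (but keep '-le')."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def fk_grade(text: str) -> float:
    """Approximate Flesch-Kincaid grade level for an English script."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


script = ("GST is a tax on goods and services. You add it to your bill. "
          "Then you pay it to the government each month.")
print(round(fk_grade(script), 1))
```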

Conversational video scripts need a “Question-Answer-Elaboration” cadence that mirrors how people actually speak. Start with a direct, one-sentence answer to a likely voice query, then follow with a brief elaboration. This “micro-answer” format suits the short spoken responses that voice assistants such as Alexa, Siri, and Google Assistant give users.

In the Indian context, localization is paramount. Translating English scripts is not enough: you must account for dialectal phrasing and “Hinglish” (the blend of Hindi and English) that dominates urban centers. Clean, multilingual captions and transcripts in at least the top five regional languages make your video content accessible to the broadest possible audience and indexable by AI engines localized for India.
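
Captions are commonly delivered as one WebVTT file per language. This Python sketch renders (start, end, text) cues into WebVTT and names each file with a BCP 47 language tag. The cue data and file names are hypothetical, and the Hindi line is an illustrative translation of the English cue.

```python
def vtt_timestamp(seconds: float) -> str:
    """Format seconds as the HH:MM:SS.mmm timestamp WebVTT uses."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def build_webvtt(cues: list[tuple[float, float, str]]) -> str:
    """Render (start, end, text) cues as the body of a .vtt file."""
    lines = ["WEBVTT", ""]
    for start, end, text in cues:
        lines += [f"{vtt_timestamp(start)} --> {vtt_timestamp(end)}", text, ""]
    return "\n".join(lines)


# Hypothetical cues for one clip; keys are BCP 47 language tags.
captions = {
    "en": [(0.0, 3.5, "What is GST? It is an indirect tax.")],
    "hi": [(0.0, 3.5, "GST क्या है? यह एक अप्रत्यक्ष कर है।")],
}
files = {f"gst-explainer.{lang}.vtt": build_webvtt(cues) for lang, cues in captions.items()}
print(files["gst-explainer.en.vtt"])
```

Keeping every language on identical cue timings means a single set of question-led chapter offsets stays valid across all caption tracks.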

Technical performance also plays a role in voice search. Because many voice queries happen on mobile devices over variable network speeds, low startup latency and a fast Largest Contentful Paint (LCP) on pages with video embeds are critical. Slow playback may lead an AI engine to skip your content in favor of a faster-loading text snippet or a competitor’s optimized clip.

Sources:

- Local SEO Trends and Voice-First Practices in India

- Service Blueprints for Search Discovery in India

Building Authority through E-E-A-T Video Optimization and AI Answer Citation Strategies

As AI engines become more discerning, the “Trust” component of E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) has become the ultimate differentiator. E-E-A-T video optimization means embedding trust signals directly into the video and the surrounding page. AI systems can increasingly connect the person on camera with their credentials elsewhere on the web, especially when those credentials are stated explicitly on screen and on the page.

To earn citations, your videos should feature recognized experts who state their credentials verbally within the first 20 seconds. Use lower-third graphics to display titles, certifications, and affiliations. Furthermore, incorporate source overlays—such as citations from industry journals or government standards—while discussing data. This visual and auditory proofing makes your content “citation-ready” for AI models that prioritize verified information.

At the page level, accompany the video with a detailed author bio and a “Last Updated” date. Transparency is key: include a “Sources” section that lists every reference used in the video. In India, this also includes compliance disclosures related to the Digital Personal Data Protection (DPDP) Act, 2023, so that your data-gathering and personalization workflows are fully transparent and consent-based.
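
The on-camera expert and the "Last Updated" date can also be expressed in markup. This Python sketch uses schema.org's `Person` (`jobTitle`, `affiliation`, `hasCredential`, `sameAs`) and `dateModified` on the page. No AI engine documents these properties as a citation signal, so treat them as a machine-readable mirror of what is already visible. The expert details, the `#video` @id convention, and the helper name are hypothetical.

```python
import json


def trust_jsonld(page_url: str, date_modified: str, expert: dict) -> dict:
    """WebPage JSON-LD naming the on-camera expert and the last-updated date.

    `expert` uses hypothetical keys: name, job_title, affiliation, credential, profile_url.
    """
    person = {
        "@type": "Person",
        "name": expert["name"],
        "jobTitle": expert["job_title"],
        "affiliation": {"@type": "Organization", "name": expert["affiliation"]},
        "hasCredential": {
            "@type": "EducationalOccupationalCredential",
            "name": expert["credential"],
        },
        "sameAs": [expert["profile_url"]],  # public profile that corroborates the bio
    }
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": page_url,
        "dateModified": date_modified,  # mirrors the visible "Last Updated" date
        "author": person,
        # Points at a VideoObject assumed to be declared elsewhere with this @id
        "video": {"@id": f"{page_url}#video"},
    }


page = trust_jsonld(
    "https://www.example.com/gst-explainer",
    "2026-01-15",
    {
        "name": "Priya Sharma",
        "job_title": "Senior Tax Consultant",
        "affiliation": "Example Advisory LLP",
        "credential": "Chartered Accountant",
        "profile_url": "https://www.example.com/team/priya-sharma",
    },
)
print(json.dumps(page, indent=2, ensure_ascii=False))
```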

Finally, building consensus is a powerful AI answer citation strategy. Use canonical phrasing that is consistent across your website, social media, and video transcripts. When multiple high-authority sources (including your own) use the same terminology and definitions, AI engines are more likely to view that information as a “fact” and cite your video as the definitive source.
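
Consistent canonical phrasing can be checked mechanically. This Python sketch flags channels whose copy does not contain the canonical definition after light normalization (case, curly quotes, whitespace). The helper names and channel copy are hypothetical, and an exact-substring match is a deliberately strict assumption: paraphrases count as misses.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, straighten curly quotes, and collapse whitespace."""
    text = text.lower().replace("\u2019", "'").replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip()


def channels_missing(definition: str, channels: dict[str, str]) -> list[str]:
    """Names of channels whose copy does not contain the canonical definition."""
    target = normalize(definition)
    return [name for name, body in channels.items() if target not in normalize(body)]


definition = "AEO is the practice of structuring content so AI systems can quote it."
channels = {
    "transcript": "So, what is AEO? AEO is the practice of structuring content so AI systems can quote it.",
    "landing_page": "AEO is the practice of structuring   content so AI systems can quote it.",
    "linkedin_post": "AEO means making content easy for AI to cite.",
}
print(channels_missing(definition, channels))  # ['linkedin_post']
```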

Enterprise Scalability: Semantic Search Video Content and TrueFan AI Integration

Scaling AEO across thousands of products or services is a monumental task for any enterprise. This is where semantic search video content meets automated production. To maintain a competitive edge, brands must map their entire entity graph—products, tasks, and user pain points—and create a web of interlinked video assets that cover every stage of the customer journey.
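
Mapping the entity graph makes coverage measurable. This Python sketch represents the graph as entity-to-questions pairs and compares it against the question-led clips already published, which reveals the gaps to produce next. Both dictionaries are hypothetical. Matching questions by exact string is a simplification; a real pipeline would likely cluster paraphrased questions first.

```python
# Hypothetical entity graph: entity -> questions users ask about it.
entity_questions = {
    "GST invoicing": ["How do I calculate GST on an invoice?", "What is a GSTIN?"],
    "Payroll": ["How is TDS on salary calculated?"],
}
# Hypothetical index of published question-led clips: question -> clip URL.
published_clips = {
    "How do I calculate GST on an invoice?": "https://www.example.com/gst-explainer?t=45",
}


def coverage_gaps(graph: dict[str, list[str]], clips: dict[str, str]) -> dict[str, list[str]]:
    """Per entity, the questions that no published clip answers yet."""
    gaps = {}
    for entity, questions in graph.items():
        missing = [q for q in questions if q not in clips]
        if missing:
            gaps[entity] = missing
    return gaps


def coverage_ratio(graph: dict[str, list[str]], clips: dict[str, str]) -> float:
    """Fraction of all mapped questions that already have a clip."""
    total = sum(len(qs) for qs in graph.values())
    covered = sum(q in clips for qs in graph.values() for q in qs)
    return covered / total if total else 0.0


print(coverage_gaps(entity_questions, published_clips))
print(coverage_ratio(entity_questions, published_clips))
```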

Platforms like TrueFan AI enable enterprises to bridge the gap between high-quality production and AI-ready optimization. By automating the creation of personalized, question-led video content, brands can address thousands of unique PAA queries without the overhead of traditional film shoots. This allows for a “Video-First” FAQ hub where every common customer question is answered by a high-authority, schema-rich video clip.

TrueFan AI's 175+ language support and Personalised Celebrity Videos allow brands to operate at very large scale in the Indian market. Whether it’s a festive greeting or a complex product tutorial, delivering content in a user's native tongue with perfect lip-sync keeps it engaging and well suited to regional voice search. This level of localization can help AI engines that are looking for the most relevant local answer.

Solutions like TrueFan AI demonstrate ROI by integrating closely with search analytics. Because these platforms export ready-to-use VideoObject and Clip JSON-LD, every video they produce is eligible for discovery by AI Overviews as soon as it is published. Enterprises can track watch-through rates on specific answer segments and measure how these “Key Moments” contribute to branded search lift and offline visitation, as seen in large-scale campaigns for brands like Zomato and Hero MotoCorp.

Frequently Asked Questions: Mastering AEO for Enterprise Video

How does Answer Engine Optimization (AEO) differ from traditional Video SEO?

Traditional Video SEO focuses on ranking a video in search results to drive clicks to a platform like YouTube or a website. AEO, however, focuses on making the content “extractive.” The goal is to provide clear, structured answers that AI engines can quote directly in AI Overviews or voice responses, often resulting in a “zero-click” interaction where the brand gains authority through citation rather than a direct visit.

Can TrueFan AI help in creating content that ranks for “Key Moments”?

Yes, TrueFan AI is designed to operationalize AEO at scale. The platform automates the chaptering process, ensuring that videos are structured around specific questions. It also generates the necessary schema markup (JSON-LD) for Clips and SeekToAction, making it significantly easier for search engines to identify and surface “Key Moments” from your video content.

Is FAQ schema still relevant for video in 2026?

Google now shows FAQ rich results only for well-known government and health sites, but FAQ schema paired with video can still support AI discoverability. AI engines can use FAQ blocks to understand the semantic relationship between questions and answers, so pairing a video clip with FAQ schema gives AI Overviews and voice assistants a structured source for accurate, cited responses.
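
Here is a minimal Python sketch of FAQPage JSON-LD that pairs each answer with the clip that answers it. Google's FAQ documentation does not describe this pairing. Linking the timestamped clip through the answer's `url` property is an assumption, and the helper name and URLs are hypothetical.

```python
import json


def faq_jsonld(items: list[tuple[str, str, str]]) -> dict:
    """FAQPage JSON-LD; items are (question, answer_text, clip_url)."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {
                    "@type": "Answer",
                    "text": answer,
                    "url": clip_url,  # assumed pairing: deep link to the answering clip
                },
            }
            for question, answer, clip_url in items
        ],
    }


faq = faq_jsonld([
    ("Is FAQ schema still relevant for video in 2026?",
     "FAQ markup pairs each question with an explicit, machine-readable answer.",
     "https://www.example.com/aeo-faq?t=120"),
])
print(json.dumps(faq, indent=2))
```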

How do I ensure my videos are compliant with India’s DPDP Act?

Compliance involves transparency about how you use data for video personalization and transcripts. Make sure your video landing pages have clear consent banners and DPDP-ready disclosures. If you use AI to personalize content, confirm that your service provider (like TrueFan AI) holds ISO 27001 certification or a SOC 2 report and has built-in moderation filters that protect user data and brand integrity.

What are the most important 2026 statistics for video SEO?

Industry projections suggest that by 2026 over 65% of enterprise search queries will end without a click, making citation share the new gold standard. Commonly cited estimates also put voice search growth in India at around a 40% CAGR and claim that AI Overviews include video citations in nearly 80% of informational queries. Enterprises that fail to optimize for these multimodal surfaces risk losing significant market share to AI-native competitors.

Should I host my videos on YouTube or my own enterprise site?

A “dual-publish” strategy works best. YouTube provides massive reach and is a primary source for Google’s video features. Self-hosting (or using an enterprise video player) on your own domain, however, gives you better data control, more complete schema implementation, and the ability to track down-funnel conversions. On your own site, make the page that hosts the self-hosted video the canonical URL for that content so authority consolidates there.