Voice Commerce Vernacular India: Enterprise Playbook to Launch Hindi, Tamil, Bengali Shopping for Tier-2/3

Key Takeaways

- Vernacular voice commerce is essential for Tier-2/3 growth, serving code-mixed users (Hinglish/Tanglish/Benglish) and reducing cognitive load.

- A robust stack with ASR/NLU, dialog management, personalization, and in-app voice plus smart speaker entry-points drives trust and conversion.

- Multilingual AI video closes the loop after voice intent, enabling localized, scalable personalization and significant ROI uplift.

- Governance-first deployment requires DPDP-aligned consent, disclosure for synthetic media, bias mitigation, and audit trails.

- Follow a 90-day roadmap from utterance mining to full rollout with UPI flows, pilots, and KPI-led optimization.

The digital landscape in Bharat is undergoing a seismic shift, moving away from the traditional “type-and-search” model toward a more intuitive, speech-first ecosystem. As we approach 2026, vernacular voice commerce in India has transitioned from a niche experimental feature to a core strategic necessity for enterprises aiming to capture the next billion users. With India on track to exceed 900 million internet users by 2025, the demand for natural, Indic-language interfaces is skyrocketing, particularly in non-metro regions where linguistic comfort dictates purchasing confidence.

For the modern Chief Digital Officer (CDO) or Head of Customer Experience (CX), the challenge is no longer just about translation; it is about cultural and linguistic resonance. The “next billion” users do not just speak Hindi, Tamil, or Bengali; they speak a fluid, code-mixed version of these languages, often referred to as Hinglish, Tanglish, or Benglish. Mastering vernacular voice commerce in India requires a sophisticated understanding of these nuances, integrating advanced Natural Language Understanding (NLU) with localized content engines to build trust and drive conversions in Tier-2 and Tier-3 markets.

The Strategic Imperative of Natural Language Commerce India

The evolution of natural language commerce in India is driven by a fundamental change in user behavior: the “speak not type” revolution. In rural and semi-urban India, voice is the primary bridge across the digital divide, offering a low-friction entry point for users who may find complex app interfaces or English-centric navigation intimidating. By 2026, it is projected that voice-activated shopping will account for nearly 25% of all digital transactions in India, with regional languages contributing to over 75% of that volume.

Enterprises that fail to adapt to this vernacular-first reality risk obsolescence in the country’s fastest-growing consumer segments. The integration of voice is not merely a convenience; it is a trust-building mechanism. When a user in Coimbatore can reorder groceries in their local dialect or a farmer in Siliguri can inquire about credit terms in Bengali, the cognitive load of digital transacting is significantly reduced. This shift toward natural language commerce in India necessitates a robust technological stack that can handle phonetic variations, regional accents, and the complexities of Indian syntax.

Furthermore, the government’s Mission Bhashini is accelerating this transition by building a national public digital infrastructure for languages. This initiative provides the foundational AI models for 22 scheduled languages, enabling enterprises to build more accurate Automatic Speech Recognition (ASR) and Text-to-Speech (TTS) systems. By leveraging these advancements, brands can create seamless, end-to-end shopping journeys—from discovery to checkout—entirely through voice.

Sources:

- IBEF: India’s internet users to exceed 900 million

- TechArc: Vernacular Voice Revolution

- Mission Bhashini Official

Designing Tier-2 Voice Adoption Strategies for Bharat

To successfully penetrate the “Bharat” market, enterprises must move beyond generic voice assistants and implement specialized tier-2 voice adoption strategies. These strategies must account for the high prevalence of code-switching, where users mix English nouns with regional verbs and grammar. For instance, a user might say, “Mujhe blue color ki shirt dikhao under two thousand,” blending Hindi and English seamlessly. A rigid, single-language NLU model would fail here; the system must be trained on “Hinglish” utterance libraries to be effective.
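To make the code-mixing problem concrete, here is a minimal sketch of slot extraction over a romanized Hinglish utterance. It is not a production NLU model: the lexicons, the `parse_utterance` function, and the slot names are all hypothetical, and a real system would learn these patterns from mined utterance libraries rather than hand-written word lists.

```python
import re

# Hypothetical hand-written lexicons mixing English and romanized Hindi.
# A trained NLU model would replace all of these.
COLORS = {"blue", "red", "black", "neela", "laal", "kaala"}
PRODUCTS = {"shirt", "kurta", "saree", "phone"}
UNITS = {"one": 1, "ek": 1, "two": 2, "do": 2, "five": 5, "paanch": 5, "ten": 10, "dus": 10}
MULTIPLIERS = {"hundred": 100, "sau": 100, "thousand": 1000, "hazaar": 1000, "hazar": 1000}
PRICE_CAP_MARKERS = {"under", "below", "andar", "neeche"}


def parse_utterance(text: str) -> dict:
    """Extract color, product, and price-cap slots from a code-mixed utterance."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    slots = {"color": None, "product": None, "max_price": None}

    # Take the first token that matches each lexicon, regardless of language.
    for tok in tokens:
        if tok in COLORS and slots["color"] is None:
            slots["color"] = tok
        elif tok in PRODUCTS and slots["product"] is None:
            slots["product"] = tok

    # Look for "<unit> <multiplier>" pairs such as "two thousand" or "dus hazaar".
    amount = None
    for first, second in zip(tokens, tokens[1:]):
        if first in UNITS and second in MULTIPLIERS:
            amount = UNITS[first] * MULTIPLIERS[second]
            break

    # Only treat the amount as a cap if a cap marker appears anywhere in the utterance.
    if amount is not None and any(t in PRICE_CAP_MARKERS for t in tokens):
        slots["max_price"] = amount
    return slots


print(parse_utterance("Mujhe blue color ki shirt dikhao under two thousand"))
```

The point of the sketch is that the English noun ("shirt"), the English price phrase, and the Hindi verb frame are handled in one pass; a model restricted to a single language would miss at least one of them.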

Another critical component of tier-2 voice adoption strategies is the accommodation of low-literacy UX. In many regional markets, visual and auditory confirmation is more effective than text-based notifications. Implementing a “voice-first, video-second” approach—where a voice command triggers a short, personalized video explainer in the local dialect—can significantly increase comprehension. This is particularly vital for complex financial products or high-value retail purchases where trust is the primary barrier to conversion.

By 2026, long-tail, conversational queries are expected to dominate search patterns in India. Users are no longer searching for “best smartphone”; they are asking their devices, “Dus hazaar ke andar sabse achha camera wala phone kaunsa hai?” (Which is the best camera phone under ten thousand?). Capturing this intent requires a move away from keyword-based SEO toward a semantic, intent-based architecture that mirrors the natural speech patterns of regional India. The same playbooks can be extended with festival commerce automation to handle festival-led surges and hyperlocal demand spikes in Tier-2 markets.

Reference Architecture for Smart Speaker Commerce Integration

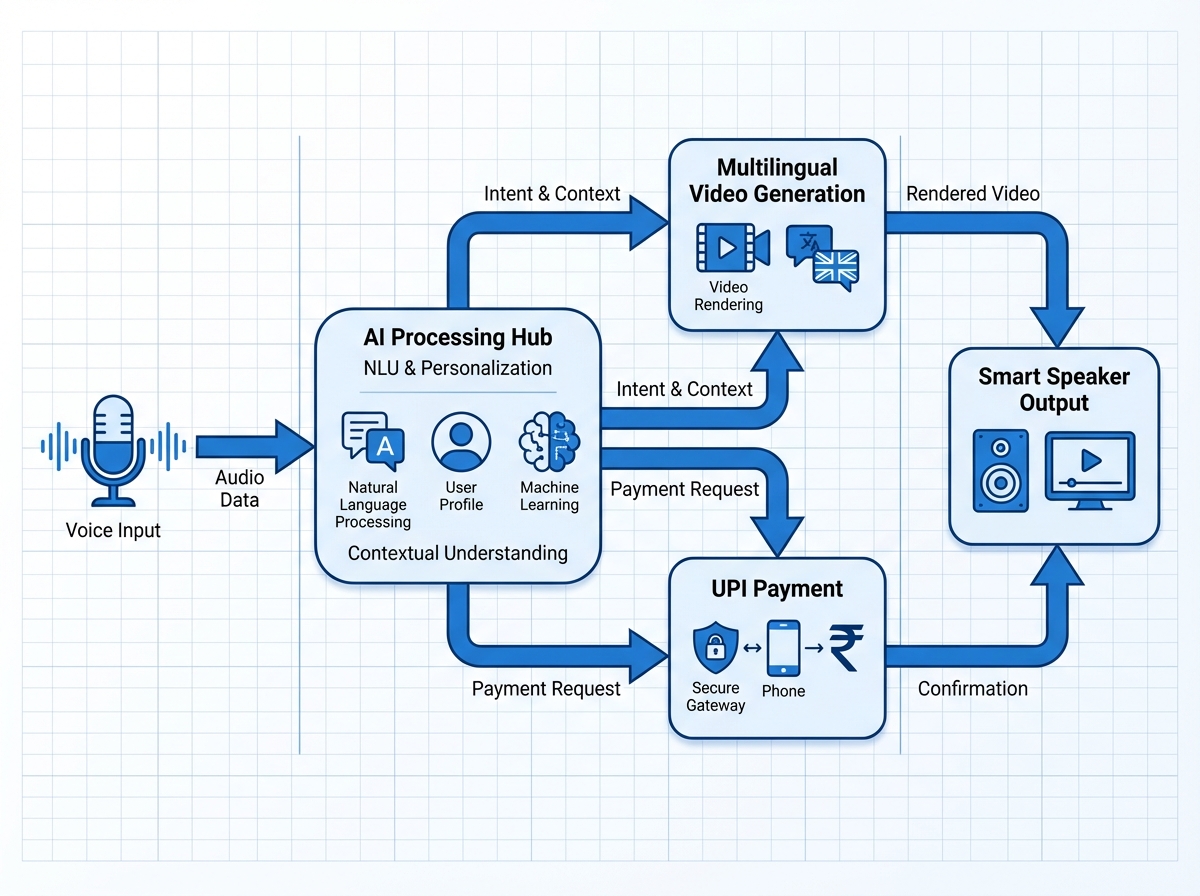

A successful enterprise voice deployment requires a multi-layered reference architecture that connects the user’s utterance to the back-end commerce engine. At the forefront is smart speaker commerce integration, utilizing platforms like Alexa or Google Assistant as entry points. However, the true power lies in the “in-app voice” experience, where the brand maintains full control over the data and the user journey. This architecture must include a high-performance ASR layer tuned for Indian accents and a dialog management system capable of handling error recovery gracefully.

The middle tier of this architecture focuses on conversational shopping AI personalization. This layer pulls data from the CRM and CDP to understand the user’s language preference, past purchase history, and regional affinity. For example, if a user in West Bengal frequently buys sweets during Durga Puja, the AI should proactively offer recommendations in Bengali, using culturally relevant imagery and terminology. This level of personalization ensures that the voice interaction feels like a conversation with a local shopkeeper rather than a robotic interface.
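As a rough illustration of this personalization layer, the sketch below picks a reply language and a recommendation category from a customer profile. The `CustomerProfile` fields, the `FESTIVAL_WINDOWS` table, and the month ranges are assumptions made for the example; real festival dates shift every year and would come from a maintained calendar, and the profile would be populated from the CRM or CDP.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CustomerProfile:
    customer_id: str
    preferred_language: str            # e.g. "bn" for Bengali
    region: str
    past_categories: list = field(default_factory=list)


# Hypothetical lookup: region -> list of (festival, months it may fall in, category).
# The months are approximations used only for this sketch.
FESTIVAL_WINDOWS = {
    "West Bengal": [("Durga Puja", (9, 10), "sweets")],
}


def pick_recommendation(profile: CustomerProfile, today: date) -> dict:
    """Prefer a festival-linked category the customer has bought before."""
    for festival, months, category in FESTIVAL_WINDOWS.get(profile.region, []):
        if today.month in months and category in profile.past_categories:
            return {
                "language": profile.preferred_language,
                "category": category,
                "occasion": festival,
            }
    # Otherwise fall back to the customer's first recorded category, if any.
    fallback = profile.past_categories[0] if profile.past_categories else None
    return {"language": profile.preferred_language, "category": fallback, "occasion": None}


shopper = CustomerProfile("c-101", "bn", "West Bengal", ["sweets", "groceries"])
print(pick_recommendation(shopper, date(2025, 10, 1)))
```

Keeping the language choice in the same return value as the recommendation makes it harder for downstream TTS or video steps to reply in the wrong language.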

Platforms like TrueFan AI enable enterprises to bridge the gap between voice intent and visual engagement by generating hyper-personalized video content that responds to these voice triggers. By integrating a multilingual AI video generator into the commerce stack, brands can instantly produce a video that explains a product's features or a personalized offer in the user's specific dialect, delivered via WhatsApp or the mobile app. This creates a closed-loop system where voice initiates the intent, and personalized video closes the sale.

Key Components of the Architecture:

- ASR/NLU Layer: Optimized for 22+ Indian languages and code-mixed variants.

- Dialog Policy: Context-aware state management to handle multi-turn conversations.

- Personalization Engine: Real-time integration with loyalty and purchase data.

- TTS & Voice Cloning: Generating brand-consistent voices with regional accents.

- Fulfillment API: Seamless connection to UPI payments and logistics tracking.
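One way these components could fit together is sketched below as a single dialog step: ASR output feeds NLU, the dialog policy merges slots across turns, and error recovery falls back to a clarifying question or a human handoff. The `DialogState` class, the required slots, the retry limit, and the stub ASR and NLU functions are all hypothetical stand-ins for real services.

```python
from dataclasses import dataclass, field
from typing import Callable

REQUIRED_SLOTS = ("product", "quantity")   # assumed minimum for a reorder intent
MAX_RETRIES = 2                            # assumed limit before handing off to a human


@dataclass
class DialogState:
    slots: dict = field(default_factory=dict)
    retries: int = 0


def step(state: DialogState, audio: bytes,
         asr: Callable[[bytes], str], nlu: Callable[[str], dict]) -> str:
    """Run one conversational turn and return the next action for the dialog policy."""
    transcript = asr(audio)
    parsed = nlu(transcript)
    # Carry context across turns: keep earlier slots, add newly filled ones.
    state.slots.update({k: v for k, v in parsed.items() if v is not None})

    missing = [s for s in REQUIRED_SLOTS if s not in state.slots]
    if missing:
        state.retries += 1
        if state.retries > MAX_RETRIES:
            return "handoff"               # graceful error recovery
        return f"ask:{missing[0]}"
    return "confirm_order"


# Stubs standing in for real ASR and NLU services.
def fake_asr(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_nlu(text: str) -> dict:
    return {
        "product": "rice" if "chawal" in text else None,
        "quantity": "2 kg" if "do kilo" in text else None,
    }


state = DialogState()
print(step(state, b"chawal chahiye", fake_asr, fake_nlu))
print(step(state, b"do kilo", fake_asr, fake_nlu))
```

Passing ASR and NLU in as callables keeps the policy testable without audio and lets the in-app and smart speaker entry points share the same dialog logic.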

Sources:

- Times of India: Flipkart's Voice Assistant Team

- IndiaAI: National Mission on Natural Language Translation

Scaling Conversion with a Multilingual AI Video Generator

In the context of Tier-2 and Tier-3 markets, text is often a secondary medium. The primary drivers of engagement are voice and video. To scale effectively, enterprises need a multilingual AI video generator that can produce thousands of localized video variants in seconds. This technology allows a brand to take a single master campaign and automatically adapt it for different regions—changing the language, the dialect, the currency format, and even the cultural references to suit the local audience.

TrueFan AI's 175+ language support and Personalised Celebrity Videos provide a powerful example of how this technology can be leveraged for massive reach. By using Hinglish AI video creation, brands can speak the language of the youth in Indore, while simultaneously using regional-dialect shopping videos to reach an older demographic in Madurai in pure Tamil. This granular level of targeting ensures that the message is not just heard, but understood and acted upon.

The ROI of this approach is well-documented. For instance, when brands move from generic English ads to personalized, vernacular video content, they often see a 15-20% uplift in conversion rates. Solutions like TrueFan AI demonstrate ROI through metrics like Zomato’s 354,000 personalized videos in a single day or Goibibo’s 17% increase in WhatsApp read rates. These results prove that when you combine the reach of voice with the impact of personalized video, the results are transformative for enterprise growth.

Content Engine Capabilities:

- Dynamic Scripting: Automatically inserting user names, local cities, and specific product prices.

- Lip-Sync Accuracy: Ensuring the AI avatar’s mouth movements perfectly match the regional audio.

- Dialect Branching: Creating separate versions for Lucknowi Hindi vs. Delhi Hindi to enhance authenticity.

- Automated Distribution: Triggering video delivery via WhatsApp API immediately after a voice interaction.
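Dynamic scripting and dialect branching can be pictured as template selection followed by field substitution. The sketch below uses Python's standard `string.Template`; the locale keys, template wording, and `render_script` helper are illustrative assumptions and do not reflect any vendor's actual API.

```python
from string import Template

# Hypothetical script templates keyed by locale; "hi-Latn" is romanized Hindi (Hinglish).
SCRIPT_TEMPLATES = {
    "hi-Latn": Template("Namaste $name ji! $city mein $product ab sirf Rs $price mein."),
    "en": Template("Hello $name! $product is now available in $city for just Rs $price."),
}


def render_script(locale: str, **fields) -> str:
    """Fill the locale's template, falling back to English for unknown locales.

    substitute() raises KeyError when a field is missing, so an incomplete
    script fails loudly instead of being sent to a customer.
    """
    template = SCRIPT_TEMPLATES.get(locale, SCRIPT_TEMPLATES["en"])
    return template.substitute(**fields)


print(render_script("hi-Latn", name="Asha", city="Indore", product="kurta", price=799))
```

Adding a dialect branch then amounts to adding another key, such as a separate template for Lucknowi versus Delhi Hindi, without touching the rendering logic.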

Governance and AI Voice Cloning Indian Accents

As enterprises adopt synthetic media, ethical governance and compliance become paramount. AI voice cloning for Indian accents offers a unique opportunity to create a consistent brand persona across all touchpoints. Whether it is a celebrity brand ambassador or a friendly mascot, the voice must sound authentic to the region. However, this must be balanced with strict adherence to India’s Digital Personal Data Protection (DPDP) Act. Explicit consent must be obtained before capturing voice data, and users must be clearly informed when they are interacting with AI-generated content.

Transparency is the foundation of trust in digital commerce. Every use of AI voice cloning for Indian accents should be accompanied by a disclosure, such as “This is an AI-generated message from [Brand Name].” Furthermore, enterprises must ensure that their AI models are free from linguistic bias and do not inadvertently use offensive or culturally insensitive phrasing. Regular audits of the NLU and TTS outputs are essential to maintain the integrity of the brand’s communication in diverse regional markets.

A video platform that supports 175 languages must also prioritize data residency and security. Indian enterprises should confirm where user data is processed and stored: the DPDP Act allows the government to restrict transfers of personal data to notified countries, and sectoral rules such as the RBI's payment data storage requirements can mandate storage within India. By implementing robust security protocols and clear opt-out mechanisms, brands can leverage the power of generative AI while protecting user privacy and maintaining long-term loyalty in the competitive Tier-2 and Tier-3 landscapes.

Compliance Checklist:

- DPDP Alignment: Clear consent prompts for voice and data processing.

- Synthetic Disclosure: Visual and verbal cues indicating AI-generated media.

- Bias Mitigation: Continuous testing for regional and gender-neutral language.

- Audit Trails: Comprehensive logging of all AI-generated interactions for quality control.
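The checklist items on consent, disclosure, and audit trails can be enforced in one place: the function that sends synthetic media. The sketch below is a simplified illustration; the `synthetic_media_consent` flag, the `ConsentRequired` exception, and the in-memory audit log are hypothetical, and it is not a substitute for legal review of DPDP obligations.

```python
import json
from datetime import datetime, timezone

DISCLOSURE = "This is an AI-generated message from {brand}."


class ConsentRequired(Exception):
    """Raised when a user has not opted in to synthetic media."""


def send_synthetic_message(user: dict, brand: str, body: str, audit_log: list) -> str:
    # Consent gate: refuse to generate anything without an explicit opt-in.
    if not user.get("synthetic_media_consent", False):
        raise ConsentRequired(f"user {user['user_id']} has not consented")

    # Disclosure: always prepended, so no code path can omit it.
    message = f"{DISCLOSURE.format(brand=brand)} {body}"

    # Audit trail: record who received what kind of message and when,
    # without storing the message body or other personal details.
    audit_log.append(json.dumps({
        "user_id": user["user_id"],
        "brand": brand,
        "disclosed": True,
        "sent_at": datetime.now(timezone.utc).isoformat(),
    }))
    return message


log = []
user = {"user_id": "u-1", "synthetic_media_consent": True}
print(send_synthetic_message(user, "Acme", "Aapka order ready hai.", log))
```

In practice the audit log would go to durable, access-controlled storage rather than a Python list.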

Implementation Roadmap: Launching Hindi, Tamil, and Bengali in 90 Days

Launching a comprehensive vernacular voice strategy requires a phased approach to ensure technical stability and user acceptance. The first 30 days should focus on “Utterance Mining”—analyzing existing customer service logs and search queries to identify the most common intents in Hindi, Tamil, and Bengali. This data forms the training set for the NLU models, ensuring they can handle the specific slang and code-switching patterns of the target regions.
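A first pass at utterance mining can be as simple as tagging logged utterances with a coarse intent and counting them per language. The sketch below assumes logs arrive as `(language, utterance)` pairs and uses a hypothetical keyword table; the "unknown" bucket is the useful output, since it shows which phrasings still need to be reviewed and added to the training set.

```python
from collections import Counter

# Hypothetical seed phrases per intent, in romanized Hindi and English.
INTENT_KEYWORDS = {
    "order_status": ["order kahan", "order status", "kab aayega"],
    "refund": ["refund", "paisa wapas"],
    "reorder": ["dobara", "reorder"],
}


def tag_intent(utterance: str) -> str:
    """Return the first intent whose seed phrase appears in the utterance."""
    text = utterance.lower()
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(phrase in text for phrase in phrases):
            return intent
    return "unknown"


def mine_intents(logs) -> Counter:
    """Count (language, intent) pairs across customer service logs."""
    counts = Counter()
    for language, utterance in logs:
        counts[(language, tag_intent(utterance))] += 1
    return counts


logs = [
    ("hi", "Mera order kahan hai"),
    ("hi", "Refund chahiye"),
    ("hi", "order status batao"),
    ("hi", "kuch aur dikhao"),
]
for key, count in mine_intents(logs).most_common():
    print(key, count)
```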

The second phase (Days 31-60) involves the integration of the voice interface with the existing commerce back-end. This includes connecting to the product catalog, payment gateways (specifically UPI), and the multilingual AI video generator for post-interaction engagement. During this phase, pilot programs should be run in specific cities—such as Kanpur for Hindi, Coimbatore for Tamil, and Siliguri for Bengali—to gather real-world feedback and refine the conversational flows.
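For the UPI leg of this integration, one common pattern is to hand the confirmed order to a UPI app through a `upi://pay` deep link. The sketch below builds such a link with the standard library; the parameter names (`pa`, `pn`, `tr`, `tn`, `am`, `cu`) follow the widely used UPI deep-link format, but the payee address and order reference are made up, and the exact fields a given payment gateway expects should be checked against its documentation.

```python
from decimal import Decimal
from urllib.parse import quote, urlencode


def build_upi_link(payee_vpa: str, payee_name: str, amount: Decimal,
                   order_ref: str, note: str) -> str:
    """Build a upi://pay deep link for a confirmed voice order."""
    params = {
        "pa": payee_vpa,            # payee virtual payment address
        "pn": payee_name,           # payee display name
        "tr": order_ref,            # merchant-side transaction reference
        "tn": note,                 # short note shown to the payer
        "am": f"{amount:.2f}",      # amount with two decimal places
        "cu": "INR",
    }
    # quote() encodes spaces as %20; "@" is kept readable in the payee address.
    return "upi://pay?" + urlencode(params, quote_via=quote, safe="@")


print(build_upi_link("merchant@upi", "Demo Store", Decimal("499"), "ORD123", "Voice order"))
```

Using `Decimal` rather than `float` for the amount avoids rounding surprises when the value is formatted into the link.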

The final 30 days are dedicated to scaling and optimization. By monitoring KPIs such as “Voice Containment Rate” (the percentage of queries resolved without human intervention) and “Conversion Uplift,” enterprises can fine-tune their strategies. The goal is to create a seamless loop where a user can discover, inquire, and purchase using only their voice, supported by hyper-personalized visual content that reinforces the brand's commitment to the local culture.
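Both KPIs named above reduce to simple ratios, sketched here so the definitions are unambiguous. The function names and the sample figures are illustrative only; conversion uplift is computed as the relative change of the pilot conversion rate over the baseline.

```python
def containment_rate(resolved_without_human: int, total_queries: int) -> float:
    """Share of voice queries resolved without human intervention."""
    if total_queries == 0:
        return 0.0
    return resolved_without_human / total_queries


def conversion_uplift(baseline_rate: float, pilot_rate: float) -> float:
    """Relative change in conversion rate versus the baseline."""
    if baseline_rate == 0:
        raise ValueError("baseline conversion rate must be non-zero")
    return (pilot_rate - baseline_rate) / baseline_rate


# Illustrative numbers, not measured results.
print(f"containment: {containment_rate(800, 1000):.0%}")
print(f"uplift: {conversion_uplift(0.020, 0.023):.0%}")
```

Tracking both per language and per pilot city makes it easier to see whether a weak result comes from the NLU model or from the offer itself.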

90-Day Milestone Summary:

- Week 4: Completion of language-specific utterance libraries and intent mapping.

- Week 8: Integration of voice-to-payment flows and initial video template creation.

- Week 12: Full rollout across target Tier-2 cities with active performance monitoring.

Frequently Asked Questions

How does voice commerce improve accessibility for Tier-2 and Tier-3 users?

Voice commerce removes the barrier of digital literacy by allowing users to interact with technology using their natural speech. In regions where typing in English or even regional scripts can be cumbersome, voice provides a high-speed, intuitive alternative that mirrors real-life shopping experiences.

What is the difference between standard translation and vernacular voice commerce?

Standard translation often misses the cultural nuances and code-switching (like Hinglish) prevalent in India. Vernacular voice commerce uses NLU models specifically trained on regional dialects and colloquialisms, ensuring the AI understands the user's intent, not just their literal words.

Can AI voice cloning sound natural in regional Indian accents?

Yes, modern AI voice cloning technology can capture the specific prosody, rhythm, and accent of various Indian regions. This allows brands to create a localized experience that feels familiar and trustworthy to the user, provided it is done with proper consent and disclosure.

How does personalized video enhance the voice shopping journey?

While voice is excellent for intent and navigation, video is superior for building trust and explaining complex details. A personalized video delivered after a voice query can visually confirm the order details, explain EMI options, or provide a product demo in the user's language, leading to higher conversion rates.

How does TrueFan AI support enterprise voice and video strategies?

TrueFan AI provides the infrastructure to generate hyper-personalized, multilingual video content at scale. By integrating with an enterprise's voice commerce stack, it allows for the automated creation of regional dialect shopping videos that respond to specific user actions, driving deeper engagement and ROI in the Bharat market.

What are the key security considerations for voice commerce in India?

Enterprises must comply with the DPDP Act, ensuring explicit consent for voice data collection. Data must be encrypted, and PII (Personally Identifiable Information) should be minimized. Additionally, all synthetic media must be clearly labeled to maintain transparency with the consumer.

Is smart speaker integration enough for a complete voice strategy?

While smart speakers are a great entry point, a complete strategy must include in-app voice capabilities. This ensures the brand owns the user data and can provide a seamless transition from voice search to checkout and payment within their own ecosystem.