Voice Commerce Vernacular India 2026: A Blueprint for Hindi, Tamil, and Bengali Shopping in Tier-2/3 Bharat

Estimated reading time: ~13 minutes

Key Takeaways

- By 2026, vernacular, voice-first journeys will dominate Tier-2/3 commerce, boosted by short-form video.

- Multilingual AI video engines localize at scale (175+ languages) to deliver near real-time, personalized responses.

- Code-mixing and accent localization (Hinglish/Tamglish/Benglish) are critical for accurate NLU and trusted UX.

- A full-stack approach—ASR, NLU, dialog, CDP, and UPI 123PAY—closes the loop from voice to payment.

- 90-day pilots with privacy, compliance, and granular measurement de-risk scale and prove ROI.

Vernacular voice commerce in India is moving from pilot to default by 2026, fundamentally reshaping how the “Next Billion Users” interact with digital marketplaces. As enterprises pivot toward Tier-2 and Tier-3 markets, the shift from text-based search to natural-language commerce is no longer a luxury but a strategic imperative. By 2026, vernacular, voice-led journeys are expected to dominate regional user interfaces, with enterprises building voice-to-cart flows and multimodal journeys that combine speech with short-form video to lift engagement. Platforms like TrueFan AI enable this transition by providing the infrastructure for hyper-personalized, multilingual video content that bridges the gap between voice intent and visual confirmation.

Current market signals indicate that regional user cohorts show significantly higher comfort levels with speaking rather than typing, particularly in markets where literacy or digital fluency varies. Tier-2 and Tier-3 users are driving this growth, aided by the rise of Hindi, Tamil, and Bengali code-mixing and the proliferation of vernacular assistants. Furthermore, the integration of public infrastructure like UPI 123PAY allows for voice-mediated transactions even in low-bandwidth environments, ensuring that the commerce journey remains uninterrupted.

Section 1: Why Vernacular Voice is India’s 2026 Growth Lever (Bharat Focus)

Vernacular voice commerce in India has evolved into a comprehensive ecosystem in which discovery, selection, and checkout are executed via speech in regional Indian languages and code-mixed utterances. This includes “Hinglish,” “Tamglish,” and “Benglish,” spanning mobile apps, smart speakers, IVR systems, and even in-store kiosks. By 2026, the expectation is that e-commerce will become smarter, safer, and more human, moving away from sterile grids of products toward conversational interfaces that mirror the experience of a local shopkeeper.

Market data suggests that voice-to-cart and conversational commerce are going mainstream in Indian retail. Regional cohorts are demonstrating a clear preference for voice interfaces because they lower the cognitive load of navigation. In the Bharat market, where users often skip the “desktop” phase of the internet entirely, voice is the primary bridge to digital literacy. This shift is critical for Tier-2 voice adoption strategies, as it directly addresses the friction points of traditional UI/UX that often alienate non-English-speaking users.

The business case for this transition is grounded in three core metrics: reduced Customer Acquisition Cost (CAC) in vernacular cohorts, higher conversion rates for assisted voice-plus-video flows, and incremental revenue generated via voice-triggered personalized offers. By deploying AI videos for the Bharat market that explain products in a user’s native dialect, brands can build trust faster than through generic English-language banners. This localized approach helps the brand resonate with the cultural nuances of the specific region, leading to higher brand recall and loyalty.

Sources:

- Voice-to-Cart: The Game-Changer for E-commerce in India

- Why 2026 Will Make Indian E-commerce Smarter and More Human

- Vernacular Voice Revolution: Why India’s Next Billion Users Will Speak, Not Type

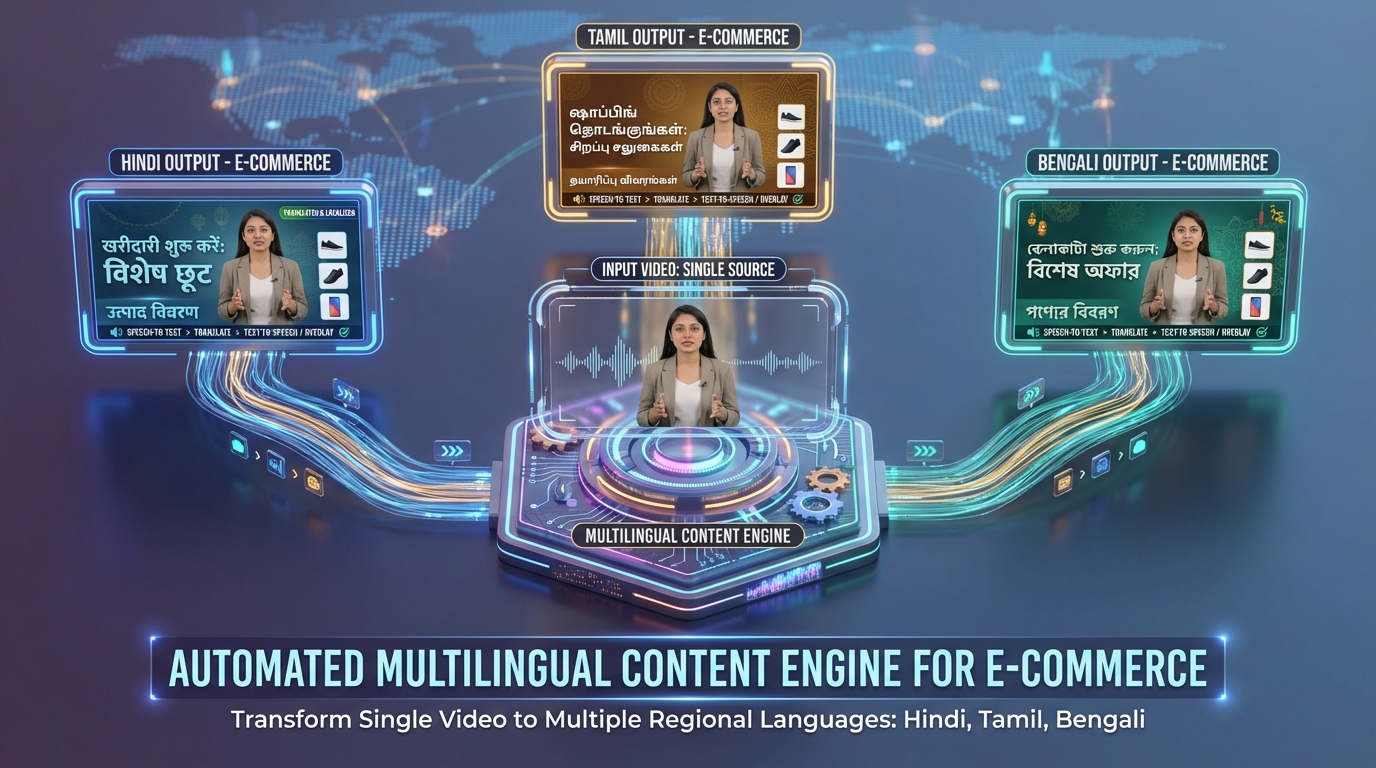

Section 2: The Multilingual Content Engine for Commerce (Video + Voice)

To support vernacular voice commerce in India at scale, enterprises require a robust multilingual AI video generator. This engine serves as an automated pipeline that transforms a single base shoot into millions of regionally localized, hyper-personalized short videos. These videos feature synchronized voice and facial movements, delivered on demand across 175+ languages via high-performance APIs. TrueFan AI's 175+ language support and Personalised Celebrity Videos provide the necessary scale to ensure that every user, regardless of their dialect, receives a tailored shopping experience.

The core components of this engine include vernacular video automation, which utilizes template-based scripts and dynamic data fills. For instance, a single product demo can be automatically updated with the user’s name, their specific city, the current price in their region, and a call-to-action in their native script. This level of automation allows for near real-time renders (often under 30 seconds), which is essential for powering triggered commerce journeys where a user’s voice query must be met with an immediate visual response.
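
The template-plus-data-fill idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `SCRIPT_TEMPLATES` table, the field names (`name`, `city`, `product`, `price`), and the `render_script` helper are hypothetical and are not part of any TrueFan AI API. It covers only the text-filling step that would precede a video render.

```python
from string import Template

# Hypothetical per-language script templates; field names are illustrative.
SCRIPT_TEMPLATES = {
    "hi": Template("नमस्ते ${name}! ${city} में ${product} अब सिर्फ ₹${price} में। अभी ऑर्डर करें।"),
    "en": Template("Hi ${name}! ${product} is now just Rs ${price} in ${city}. Order now."),
}


def render_script(lang: str, **fields: str) -> str:
    """Fill the script template for `lang`, falling back to English."""
    template = SCRIPT_TEMPLATES.get(lang, SCRIPT_TEMPLATES["en"])
    # substitute() raises KeyError when a field is missing, so a
    # half-filled script never reaches the render step.
    return template.substitute(**fields)


if __name__ == "__main__":
    print(render_script("hi", name="Asha", city="Indore",
                        product="Atta 5kg", price="249"))
```

Using strict substitution rather than `safe_substitute` is a deliberate choice here: a missing price or city fails loudly instead of producing a video script with a visible placeholder.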

Security and compliance are paramount in this architecture. Enterprise-grade platforms must adhere to ISO 27001 and SOC 2 standards to ensure that data is handled securely and that the use of likeness or voice is fully consented. This is particularly important when using AI voice cloning to match regional prosody and diction. By integrating these capabilities into existing CRMs and WhatsApp flows, brands can execute large-scale campaigns that feel deeply personal to the individual consumer in Durgapur, Madurai, or Indore.

Sources:

- TrueFan AI Platform Overview

- AI Product Showcase: Jewelry and Personalization

- Top 8 E-commerce Trends for 2026

Section 3: Hinglish and Regional Nuance in Creative and UX

A critical oversight in many early voice implementations was the failure to account for code-mixing. In India, users rarely speak in “pure” versions of their native tongues. Instead, they blend English tokens with native grammar—a phenomenon known as code-mixing. Hinglish AI video creation must account for phrases like “Aata 5kg order kar do” (Order 5kg of flour) or “Shampoo under 200 dikhao” (Show me shampoo under 200). Understanding these nuances is the difference between a successful transaction and a frustrated user.
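
To make the code-mixing problem concrete, here is a deliberately small, rule-based sketch that pulls an intent, a quantity, and a price ceiling out of romanized Hinglish utterances like the two above. The keyword lexicon, the `ParsedUtterance` type, and the intent labels are assumptions made for illustration; a production NLU layer would rely on trained models rather than a lookup table.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Tiny illustrative lexicon: romanized Hindi/English cue words mapped to intents.
INTENT_KEYWORDS = {
    "order": "add_to_cart",
    "mangwa": "add_to_cart",
    "dikhao": "browse",
    "dikha": "browse",
}

QUANTITY_RE = re.compile(r"(\d+(?:\.\d+)?)\s*(kg|g|l|ml)\b", re.IGNORECASE)
MAX_PRICE_RE = re.compile(r"\bunder\s+(?:₹|rs\.?\s*)?(\d+)", re.IGNORECASE)


@dataclass
class ParsedUtterance:
    intent: Optional[str]
    quantity: Optional[str]
    max_price: Optional[int]


def parse_utterance(text: str) -> ParsedUtterance:
    """Extract a coarse intent plus quantity and price-ceiling entities."""
    tokens = re.findall(r"\w+", text.lower())
    # The first cue word found decides the intent; None means "unknown".
    intent = next((INTENT_KEYWORDS[t] for t in tokens if t in INTENT_KEYWORDS), None)
    qty = QUANTITY_RE.search(text)
    price = MAX_PRICE_RE.search(text)
    return ParsedUtterance(
        intent=intent,
        quantity=f"{qty.group(1)} {qty.group(2).lower()}" if qty else None,
        max_price=int(price.group(1)) if price else None,
    )


if __name__ == "__main__":
    print(parse_utterance("Aata 5kg order kar do"))
    print(parse_utterance("Shampoo under 200 dikhao"))
```

Even this toy version shows why code-mix matters: the English tokens ("order", "under") and the Hindi verb ("dikhao") both carry meaning, so a monolingual pipeline would miss one or the other.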

Script guidelines for 2026 emphasize the use of phonetic spellings and colloquial synonyms. For a campaign in Tamil Nadu, the NLU (Natural Language Understanding) layer must recognize “Tamglish” utterances, while in West Bengal, “Benglish” becomes the standard. This requires AI voice cloning for Indian accents that can handle the specific prosody and diction of different states. For example, a user in Kolkata will pronounce a brand name differently from a user in Delhi, and the AI must be trained to recognize and mirror these variations to maintain brand safety and authenticity.

Creative artifacts should include regional dialect shopping videos that demonstrate the most frequent queries per state. These should be designed as low-bandwidth storyboard variants (10–15 seconds) to ensure they load quickly on older devices or in areas with spotty connectivity. Including on-screen pricing in native scripts further enhances accessibility. By testing accent localization and name pronunciation, enterprises can avoid the “uncanny valley” effect, making the AI interaction feel as natural as a conversation with a human assistant.

Section 4: Conversational Shopping and Personalization Architecture

The technical stack for AI-personalized conversational shopping in 2026 is a multi-layered architecture designed for speed and accuracy. At the base is Automatic Speech Recognition (ASR) specialized for Indian languages and code-mixed speech. This is followed by an NLU layer that extracts commerce-specific intents (such as “browse,” “add-to-cart,” or “reorder”) and entities like brand names, quantities, and price points. A dialog manager then handles the logic of the conversation, managing confirmations and fallbacks when the AI is uncertain of the user's intent.
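
The confirmation-and-fallback logic of the dialog manager can be sketched as a simple policy over NLU confidence. The thresholds, the `NluResult` type, and the set of "committing" intents below are assumptions chosen for illustration, not values from any specific platform.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    EXECUTE = "execute"    # act on the intent directly
    CONFIRM = "confirm"    # read the request back and ask yes/no
    FALLBACK = "fallback"  # switch to text or app flow


@dataclass
class NluResult:
    intent: str
    confidence: float
    entities: dict = field(default_factory=dict)


# Assumed thresholds; real values would be tuned per language and dialect.
EXECUTE_THRESHOLD = 0.85
FALLBACK_THRESHOLD = 0.50

# Intents that change the cart or spend money are always confirmed.
COMMITTING_INTENTS = {"add_to_cart", "reorder"}


def decide(result: NluResult) -> Action:
    """Pick the next dialog action from intent type and confidence."""
    if result.confidence < FALLBACK_THRESHOLD:
        return Action.FALLBACK
    if result.intent in COMMITTING_INTENTS or result.confidence < EXECUTE_THRESHOLD:
        return Action.CONFIRM
    return Action.EXECUTE


if __name__ == "__main__":
    print(decide(NluResult("browse", 0.93)))
    print(decide(NluResult("reorder", 0.95)))
    print(decide(NluResult("reorder", 0.30)))
```

Always confirming committing intents, even at high confidence, trades a little speed for trust, which matters more when the user cannot see a cart on screen.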

Integration with a Customer Data Platform (CDP) is vital for governing voice-triggered personalized offers. When a user expresses purchase interest via voice, the system performs a real-time CDP lookup to determine the user’s eligibility for specific discounts or loyalty rewards. Solutions like TrueFan AI demonstrate ROI through this layer by generating a localized video on the fly that explains the offer, significantly increasing the likelihood of conversion. This multimodal approach—moving from voice intent to a personalized video explanation—creates a high-trust environment for the user.
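
As a rough sketch of the eligibility check, the snippet below uses an in-memory dictionary as a stand-in for the CDP. The `CustomerProfile` and `Offer` shapes, the tier names, and the `select_offer` helper are all hypothetical; a real deployment would call the CDP's own lookup API and apply its consent model.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class CustomerProfile:
    customer_id: str
    language: str
    loyalty_tier: str
    marketing_consent: bool


@dataclass(frozen=True)
class Offer:
    code: str
    discount_pct: int
    min_tier: str


# Assumed loyalty tiers, lowest to highest.
TIER_RANK = {"none": 0, "silver": 1, "gold": 2}

# In-memory stand-in for a real CDP lookup.
CDP: Dict[str, CustomerProfile] = {
    "u1": CustomerProfile("u1", "hi", "gold", True),
    "u2": CustomerProfile("u2", "ta", "silver", True),
    "u3": CustomerProfile("u3", "bn", "gold", False),
}


def select_offer(customer_id: str, offers: List[Offer]) -> Optional[Offer]:
    """Return the best offer the customer qualifies for, or None."""
    profile = CDP.get(customer_id)
    # Unknown users and users without consent get no personalized offer.
    if profile is None or not profile.marketing_consent:
        return None
    rank = TIER_RANK[profile.loyalty_tier]
    eligible = [o for o in offers if rank >= TIER_RANK[o.min_tier]]
    return max(eligible, key=lambda o: o.discount_pct, default=None)


if __name__ == "__main__":
    offers = [Offer("WELCOME5", 5, "none"), Offer("GOLD15", 15, "gold")]
    print(select_offer("u1", offers))
    print(select_offer("u3", offers))
```

The consent check sits before any eligibility logic so that a user who has opted out never triggers a personalized video, regardless of tier.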

Finally, the payments layer must be seamless. In the Indian context, this means integrating UPI and specifically UPI 123PAY. This technology enables IVR and voice-based payments without the need for an active internet connection, which is a game-changer for feature-phone users in rural areas. By 2026, the ability to confirm a payment verbally (“Haan, pay kar do”) will be a standard feature of the conversational shopping journey, closing the loop from discovery to fulfillment within a single voice-led session.

Section 5: Smart Speaker Commerce Integration and App Patterns

Smart speaker commerce integration is a primary pillar of the 2026 blueprint. There are two dominant use cases: the “Reorder Flow” and “Discovery-to-Mobile Handoff.” In a reorder flow, a user might tell their Alexa or Android assistant, “Dawat atta reorder karo.” The system recognizes the SKU and brand, confirms the quantity and delivery address, and either pushes a payment link to the user’s phone or initiates a UPI 123PAY callback. This removes nearly all friction from the replenishment of household staples.

The second pattern involves discovery via voice followed by a visual handoff. If a user asks a smart speaker to “Find a red saree under 3000,” the speaker can provide a brief audio summary and then automatically send a personalized regional video to the user’s WhatsApp. This video, generated in the user’s native language, provides the visual confirmation necessary for high-consideration purchases. This “voice-to-video-to-cart” logic ensures that even if the initial intent is captured on a screenless device, the final conversion is supported by rich, localized media.

To implement this, developers must define specific intents (e.g., FindDealUnderPrice, TrackOrder) and log these voice intents for future retargeting. If a user’s voice intent indicates a high level of interest but the transaction isn’t completed, the system can trigger an automated follow-up video in the correct dialect, offering a small incentive to complete the purchase. This level of integration between smart speakers and mobile apps creates a unified commerce experience that follows the user across devices.
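
A minimal sketch of intent logging and retargeting selection might look like the following. The `FindDealUnderPrice` and `TrackOrder` names come from the text above; the `Reorder` intent, the `VoiceEvent` record, and the `retargeting_candidates` helper are assumptions added for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Intent(Enum):
    FIND_DEAL_UNDER_PRICE = "FindDealUnderPrice"
    TRACK_ORDER = "TrackOrder"
    REORDER = "Reorder"  # assumed extra intent for the reorder flow


# Intents treated as a signal of purchase interest.
SHOPPING_INTENTS = {Intent.FIND_DEAL_UNDER_PRICE, Intent.REORDER}


@dataclass(frozen=True)
class VoiceEvent:
    user_id: str
    intent: Intent
    language: str    # e.g. "hi", "ta", "bn"
    converted: bool  # True if this session ended in a purchase


def retargeting_candidates(events: List[VoiceEvent]) -> Dict[str, str]:
    """Map user_id -> language for users who showed shopping intent
    but never completed a purchase in the logged events."""
    converted = {e.user_id for e in events if e.converted}
    candidates: Dict[str, str] = {}
    for e in events:
        if e.intent in SHOPPING_INTENTS and e.user_id not in converted:
            candidates[e.user_id] = e.language
    return candidates


if __name__ == "__main__":
    log = [
        VoiceEvent("u1", Intent.FIND_DEAL_UNDER_PRICE, "hi", False),
        VoiceEvent("u2", Intent.REORDER, "ta", True),
        VoiceEvent("u3", Intent.TRACK_ORDER, "bn", False),
    ]
    print(retargeting_candidates(log))
```

Keeping the language on each logged event is what lets the follow-up video be rendered in the right dialect without a second profile lookup.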

Sources:

- Voice-to-Cart: The Game-Changer for E-commerce in India

- Why 2026 Will Make Indian E-commerce Smarter

Section 6: Regional Voice SEO Optimization for Discovery

As voice search becomes the primary discovery method for Bharat, regional voice SEO optimization becomes a mandatory discipline. This involves optimizing for long-form, conversational queries rather than short, keyword-dense phrases. A user in a Tier-2 city is more likely to ask, “Sabse accha waterproof mobile kaunsa hai?” (Which is the best waterproof mobile?) than to type “waterproof mobile.” Content must be structured to answer these specific, spoken questions directly.

Implementing Answer Engine Optimization (AEO) is the next step. This requires building FAQ clusters that mirror spoken questions and using structured data, such as FAQPage, Product, and LocalBusiness schema, to help search engines parse the content. Crucially, scripts and captions must be maintained in native scripts (Devanagari, Tamil, Bengali) to improve discoverability. Search engines are increasingly prioritizing content that matches the linguistic and script preferences of the local user base.
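
For the structured-data step, one option is to generate schema.org `FAQPage` JSON-LD directly from the FAQ cluster. The sketch below uses only the Python standard library; the `build_faq_jsonld` helper and the sample question and answer are illustrative assumptions, and the answer text is a placeholder rather than real product advice.

```python
import json
from typing import List, Tuple


def build_faq_jsonld(faqs: List[Tuple[str, str]], language: str) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": language,
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    # ensure_ascii=False keeps Devanagari, Tamil, or Bengali text readable
    # in the page source instead of escaping it to \uXXXX sequences.
    return json.dumps(doc, ensure_ascii=False, indent=2)


if __name__ == "__main__":
    faqs = [(
        "सबसे अच्छा वॉटरप्रूफ मोबाइल कौन सा है?",
        "Placeholder answer text in the same language.",
    )]
    print(build_faq_jsonld(faqs, "hi"))
```

The output would be embedded in the page inside a `<script type="application/ld+json">` element, with one such block per language variant of the page.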

Content operations should focus on generating localized snippets and short-form videos that answer common “how-to” and “where-to-buy” questions. For example, a brand could create a series of 15-second videos answering queries like “EMI kaise lein?” (How to get EMI?) or “Under ₹500 gifts dikhao” (Show gifts under ₹500). By dominating these regional voice search results, brands can capture intent at the very top of the funnel, long before the user reaches a competitor's app.

Section 7: Tier-2/3 Voice Adoption Strategies: The 90-Day Pilot

Launching a successful vernacular voice commerce initiative in India requires a structured, phased approach. A 90-day pilot is the ideal timeframe to test, learn, and scale. The first two weeks should be dedicated to discovery and design, mapping languages and dialects by state and identifying the top 100 spoken intents for your specific category. This phase also includes a data audit to ensure your CDP can handle language preferences and regional tags while maintaining strict consent protocols.

Weeks 3 through 6 focus on building and integration. This is where you configure your ASR and NLU models for code-mix and design the dialog flows. Integrating TrueFan Enterprise APIs during this stage allows you to set up the on-demand video generation layer, ensuring that your system can respond to voice queries with localized video in under 30 seconds. You should also finalize your UPI 123PAY IVR flows to ensure that the payment process is as voice-friendly as the discovery process.

The final phase, from Week 7 to 12, involves the actual pilot in three representative cities—for example, Indore (Hindi), Madurai (Tamil), and Durgapur (Bengali). During this period, you will track KPIs such as voice-to-cart rate, video view-through, and AOV lift. By Week 10, you should be using multivariate testing to refine your scripts, accents, and offers. This data-driven approach allows you to optimize the experience before rolling it out to a larger set of 8–10 cities, ensuring that your vernacular video automation is delivering maximum ROI.

Section 8: Measurement, Privacy, and Compliance

Measuring the success of a vernacular voice commerce strategy in India requires a new set of metrics. Beyond traditional conversion rates, enterprises must track voice-to-cart rates, video view-through rates for personalized content, and the specific lift in Average Order Value (AOV) from voice-triggered personalized offers. Attribution demands the same rigor: every voice intent must be tagged with the language, dialect, and video template ID to understand which combinations are driving the most value.
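
The tagging scheme can be turned into a per-segment report with very little code. In this sketch the `VoiceSession` record and its field names are assumptions; the point is only that grouping by (language, dialect, template ID) makes the two rates comparable across combinations.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple

SegmentKey = Tuple[str, str, str]  # (language, dialect, template_id)


@dataclass(frozen=True)
class VoiceSession:
    language: str
    dialect: str
    template_id: str
    added_to_cart: bool
    video_completed: bool


def kpis_by_segment(sessions: List[VoiceSession]) -> Dict[SegmentKey, Dict[str, float]]:
    """Compute voice-to-cart and video view-through rates per segment."""
    buckets: Dict[SegmentKey, List[VoiceSession]] = defaultdict(list)
    for s in sessions:
        buckets[(s.language, s.dialect, s.template_id)].append(s)

    report: Dict[SegmentKey, Dict[str, float]] = {}
    for key, group in buckets.items():
        n = len(group)
        report[key] = {
            "sessions": n,
            "voice_to_cart_rate": sum(s.added_to_cart for s in group) / n,
            "video_view_through_rate": sum(s.video_completed for s in group) / n,
        }
    return report


if __name__ == "__main__":
    sessions = [
        VoiceSession("hi", "malwi", "tpl-01", True, True),
        VoiceSession("hi", "malwi", "tpl-01", False, True),
        VoiceSession("ta", "madurai", "tpl-02", True, False),
    ]
    for segment, stats in kpis_by_segment(sessions).items():
        print(segment, stats)
```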

Privacy and governance are the bedrock of trust in 2026. Every experience must be consent-first, with explicit opt-ins for personalized voice and video interactions. Users must have clear, easy-to-navigate pathways to revoke consent at any time. Furthermore, bias testing for ASR and NLU models is essential to ensure that the system performs equally well across different dialects and socio-economic backgrounds. Safety rails must be in place to handle noisy environments, automatically falling back to text or app-based flows when voice recognition confidence is low.

From an enterprise perspective, security posture is non-negotiable. Using platforms that are ISO 27001 and SOC 2 compliant ensures that user data and brand assets are protected. Moderation and policy enforcement must be automated to prevent the generation of inappropriate or off-brand content. By prioritizing accessibility—including captions in native scripts and low-bandwidth delivery options—brands can ensure that their voice commerce initiatives are inclusive and reach the widest possible audience in Bharat.

Conclusion

The imperative for vernacular voice commerce in India in 2026 is clear: regional, voice-first, and multimodal journeys are the most effective way to capture and convert the Bharat market. As the digital landscape becomes increasingly conversational, enterprises must move beyond simple translation and embrace true localization through vernacular video automation and AI-driven personalization. By operationalizing these technologies today, brands can secure their position in the next phase of India's e-commerce evolution.

To stay ahead, enterprises should focus on building a video platform capability that spans 175+ languages and responds to user needs in real time. The goal is to create a shopping experience that is as intuitive as a spoken conversation, supported by the visual richness of personalized video. Whether it's through smart speaker integrations or WhatsApp-based video commerce, the future of retail in India is vocal, visual, and vernacular.

Final Call to Action: Launch your 90-day Tier-2/3 voice commerce pilot today. By integrating Hindi, Tamil, and Bengali voice flows with automated video responses and UPI 123PAY, you can unlock the full potential of the Bharat market. The window for early-mover advantage is closing—now is the time to build the future of conversational shopping.

Frequently Asked Questions

How do we start a vernacular voice commerce pilot in India in 90 days?

Starting a pilot requires a clear roadmap: Weeks 1-2 for language mapping and intent identification, Weeks 3-6 for ASR/NLU configuration and API integration, and Weeks 7-12 for live testing in cities like Indore or Madurai. Focus on a single high-value use case, such as reordering or cart recovery, to prove the ROI before scaling.

What’s the best way to do Hinglish AI video creation for conversions in Tier-2 cities?

The most effective approach is to use a multilingual AI video generator that can handle code-mixing naturally. This involves using scripts that blend English product terms with Hindi grammar and ensuring the AI lip-sync is perfectly aligned with the regional accent. TrueFan AI provides the infrastructure to automate this process at scale.

How does AI voice cloning of Indian accents work ethically and with consent?

Ethical AI voice cloning requires explicit consent from the individual whose voice is being modeled. For enterprise use, this means using professional voice actors who have agreed to the specific use of their digital likeness. The system should also include watermarking and moderation to prevent misuse.

How do we integrate smart speaker commerce with UPI 123PAY in India?

Integration involves mapping voice intents on the smart speaker to a backend payment trigger. Once the user confirms the purchase verbally, the system initiates a UPI 123PAY IVR callback to the user's registered mobile number, allowing them to complete the payment securely via voice.

How do we measure the ROI of voice-triggered personalized offers?

ROI is measured by comparing the conversion rate and AOV of users who receive a voice-triggered personalized video offer against a control group. Additionally, you should track the reduction in CAC for vernacular segments and the long-term retention lift from these hyper-personalized interactions.
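
The treatment-versus-control comparison reduces to a relative-lift calculation. The sketch below assumes a simple `GroupStats` record with user, order, and revenue totals; the numbers in the usage example are made up purely to show the arithmetic and are not results from any pilot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupStats:
    users: int
    orders: int
    revenue: float

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.users

    @property
    def aov(self) -> float:
        # Average order value: revenue per completed order.
        return self.revenue / self.orders


def relative_lift(treatment: float, control: float) -> float:
    """Relative change of the treatment value over the control value."""
    if control == 0:
        raise ValueError("control value must be non-zero")
    return (treatment - control) / control


if __name__ == "__main__":
    # Illustrative, made-up numbers.
    control = GroupStats(users=1000, orders=20, revenue=10_000.0)
    treated = GroupStats(users=1000, orders=30, revenue=18_000.0)
    print(f"conversion lift: {relative_lift(treated.conversion_rate, control.conversion_rate):.1%}")
    print(f"AOV lift: {relative_lift(treated.aov, control.aov):.1%}")
```

A lift figure alone does not establish significance; with small pilot cohorts it should be paired with a standard statistical test before it drives a scaling decision.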