Voice commerce vernacular India: An enterprise blueprint for tier-2/3 conversational shopping and multilingual video at scale

Key Takeaways

- Tier-2/3 growth in India will be led by voice-first, multilingual commerce experiences aligned to Hinglish and regional dialects.

- Enterprises need a code-mix aware ASR/NLU stack with sub-2s roundtrip latency and transliteration robustness.

- Multilingual video at scale (175+ languages) drives higher comprehension, trust, and conversion on PDPs and chat flows.

- Voice SEO and smart speaker skills unlock discoverability for long-tail, conversational queries in Hinglish.

- A disciplined 180-day roadmap with governance and DPDP-compliant consent ensures scalable, ROI-positive deployment.

Vernacular voice commerce in India is a key frontier for enterprise growth in 2026, marking a fundamental shift in how the “Next 500 Million” users interact with digital marketplaces. With India’s e-commerce market projected to grow by 12.4% in 2026, the transition from text-heavy interfaces to voice-first, multilingual ecosystems is no longer optional for brands seeking tier-2 and tier-3 dominance. This evolution is driven by a demographic that prioritizes convenience, linguistic comfort, and the intuitive nature of natural-language shopping.

For the modern enterprise, capturing this market requires more than simple translation; it demands a sophisticated orchestration of conversational shopping AI, regional dialect shopping videos, and a robust technical architecture capable of handling the nuances of “Bharat.” This blueprint provides the strategic framework for deploying Bharat market AI videos and voice-led commerce stacks that convert digitally hesitant users into loyal advocates.

The Bharat Shift: Why Voice Commerce Vernacular India is the Near-Term Growth Unlock

The momentum behind vernacular voice commerce in India is anchored in a critical behavioral pivot: the rise of the “voice-first” consumer in non-metropolitan hubs. These users often bypass traditional search bars, preferring to discover, evaluate, and purchase products through spoken queries that blend Hindi, English, and regional dialects. This natural-language approach to commerce mirrors traditional offline shopping, where conversation is the primary vehicle for trust.

Market signals in 2026 confirm this trajectory. Major players like Meesho have already moved to onboard the next 500 million users via their generative AI voice assistant, “Vaani,” specifically targeting users who find text-based navigation intimidating. This push is supported by broader economic trends, including the continued expansion of high-speed mobile internet into rural corridors and the increasing affordability of smart devices.

For enterprises, the mandate is clear: prioritize tier-2 voice adoption by building systems that understand the “Hinglish” reality. This involves moving beyond standard English UIs to incorporate code-mixed interfaces and regional dialect shopping videos that explain product value in the user’s native tongue. In 2026, the ability to process a query like “Mera last wala detergent dobara order kar do” (“Reorder my last detergent”) with 99% accuracy is becoming the baseline for competitive differentiation.

What to implement this sprint:

- Audit current search logs for transliterated or code-mixed queries.

- Identify top 3 regional languages by customer density for a voice pilot.

- Benchmark current mobile app latency for voice-to-text triggers.
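
The search-log audit in the first bullet could start from something like the sketch below. It is a rough first pass, not a language-identification model: the `ROMAN_HINDI` word list is a tiny hypothetical seed, and the function names are illustrative. It labels each query as native script, fully romanized, code-mixed, or other, so the share of Hinglish traffic can be estimated.

```python
import re
from collections import Counter

# Hypothetical seed lexicon of romanized Hindi tokens. A real audit would use
# a much larger list or a trained language-identification model.
ROMAN_HINDI = {"mera", "wala", "dobara", "kar", "do", "sabse", "sasta",
               "jaldi", "ko", "kaise", "chahiye", "hai"}
DEVANAGARI = re.compile(r"[\u0900-\u097F]")


def classify_query(query: str) -> str:
    """Label a query as 'native_script', 'romanized', 'code_mixed', or 'other'."""
    if DEVANAGARI.search(query):
        return "native_script"
    tokens = re.findall(r"[a-z]+", query.lower())
    hits = sum(1 for token in tokens if token in ROMAN_HINDI)
    if hits == 0:
        return "other"
    # Every token is in the lexicon: fully romanized. Otherwise it is a mix.
    return "romanized" if hits == len(tokens) else "code_mixed"


def audit(queries):
    """Count how many queries fall into each label."""
    return Counter(classify_query(q) for q in queries)
```

Running `audit` over a day of search logs gives a quick estimate of how much traffic a code-mix aware stack would need to serve.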

Sources:

- Fashion Network: India’s e-commerce market to grow 12.4% in 2026

- BusinessLine: Meesho launches GenAI voice assistant Vaani

- BusinessLine: Meesho bets on AI voice assistant to onboard next 500M users

Conversational Shopping AI: Engineering Natural Language Commerce India for Scale

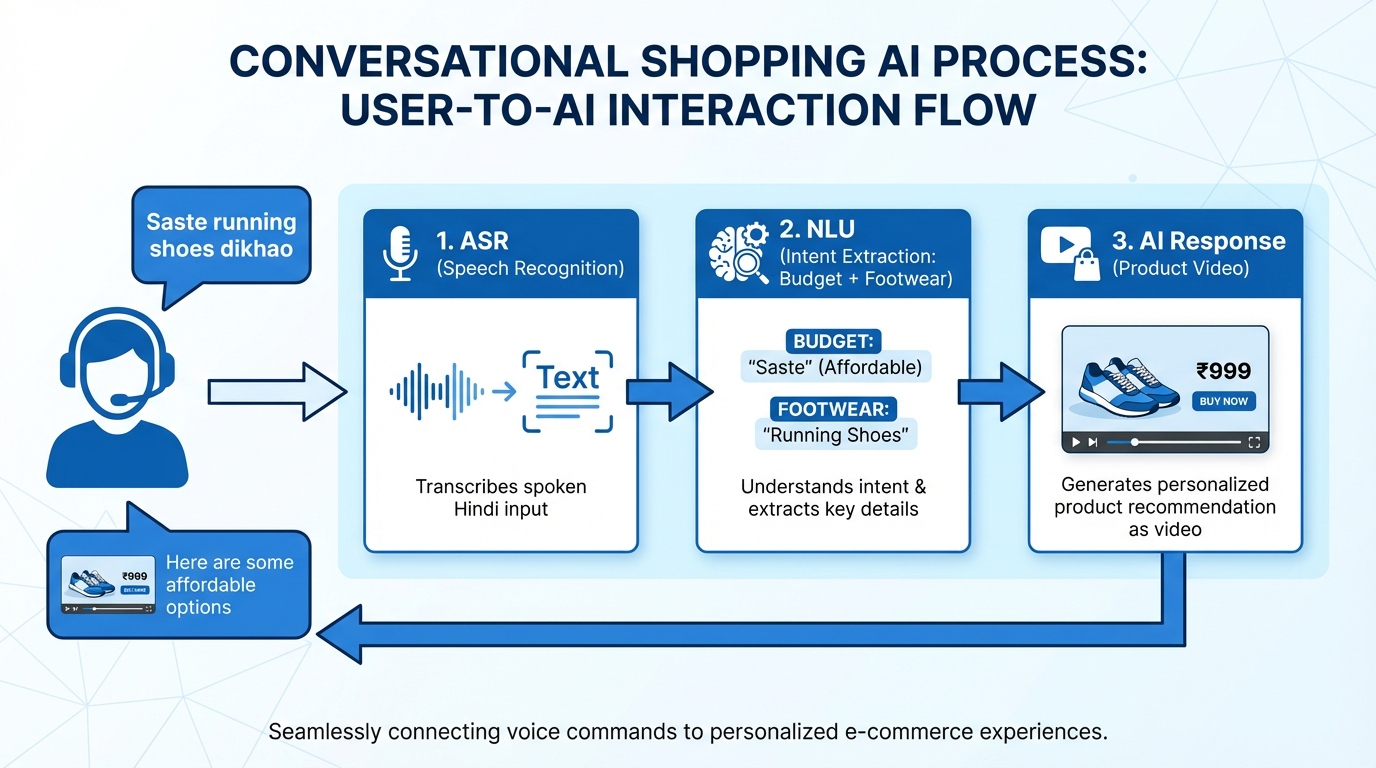

Building a conversational shopping AI that resonates with the Bharat market requires a departure from Western-centric NLU (Natural Language Understanding) models. The technical architecture must account for the “code-mix reality,” where users fluidly switch between languages within a single sentence. Platforms like TrueFan AI enable enterprises to bridge the gap between static product catalogs and dynamic, voice-driven engagement by integrating high-fidelity video responses into the conversational flow.

The core pipeline for an enterprise-grade system should target a perceived latency of about 1.5 seconds and stay under 2 seconds. This involves a high-speed ASR (Automatic Speech Recognition) layer adapted for Indian accents, followed by an NLU engine capable of extracting intent from colloquialisms like “sabse sasta” (cheapest) or “jaldi delivery” (fast delivery). The system must also handle transliteration, recognizing that “fridge” might be searched as “friz” or “priz,” so that no user is left behind due to spelling or pronunciation variances.
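
One way to picture the transliteration step is a phonetic key that folds commonly confused spellings onto the same string. The sketch below is illustrative only: the folding rules in `FOLDS` are hand-picked assumptions chosen to cover the “fridge” example, not rules from any production system, and a real deployment would more likely learn such mappings from query logs.

```python
import re
from collections import defaultdict

# Illustrative folding rules (assumptions): trailing "dge" -> "j", "ph" -> "f",
# "p" -> "f", "z" -> "j", then collapse repeated letters.
FOLDS = [(r"dge\b", "j"), (r"ph", "f"), (r"p", "f"), (r"z", "j"),
         (r"(.)\1+", r"\1")]


def phonetic_key(word: str) -> str:
    """Reduce a word to a crude key so spelling variants collide."""
    key = word.lower()
    for pattern, replacement in FOLDS:
        key = re.sub(pattern, replacement, key)
    return key


def build_index(catalog_terms):
    """Map each phonetic key to the catalog terms that share it."""
    index = defaultdict(list)
    for term in catalog_terms:
        index[phonetic_key(term)].append(term)
    return index


def resolve(query_word, index):
    """Return catalog terms whose key matches the query word, if any."""
    return index.get(phonetic_key(query_word), [])
```

Because folding is lossy (for example, “pan” and “fan” share a key under these rules), `resolve` returns a list of candidates and leaves the final choice to the NLU layer.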

Personalization is the final piece of the engineering puzzle. By pulling context from a Customer Data Platform (CDP), the AI can tailor its responses based on the user’s loyalty tier, previous purchase history, and geographic location. For instance, a user in Patna asking for “oil” should receive recommendations for mustard oil brands popular in Bihar, delivered via a voice that carries a familiar regional lilt. This level of localization transforms a transactional interface into a trusted shopping companion.

Technical Latency Targets for 2026:

- ASR Processing: <300 ms (chunked streaming).

- NLU Intent Extraction: <150 ms (code-switch aware).

- TTS (Text-to-Speech) Response: <300 ms for initial audio playback.

- Total Roundtrip: 1.5 seconds as the target and 2.0 seconds as the ceiling, to maintain conversational flow.
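
The stage targets above add up to 750 ms, which leaves 750 ms to 1,250 ms of the roundtrip for everything else (network hops, catalog lookup, orchestration). A small sketch of that arithmetic, with hypothetical names, can also double as a budget check in load tests:

```python
# Stage budgets from the targets above, in milliseconds.
STAGE_BUDGET_MS = {"asr": 300, "nlu": 150, "tts_first_audio": 300}
ROUNDTRIP_TARGET_MS = 1500   # lower end of the roundtrip range
ROUNDTRIP_CEILING_MS = 2000  # upper end of the roundtrip range


def remaining_budget(roundtrip_ms: int = ROUNDTRIP_TARGET_MS) -> int:
    """Milliseconds left for network, lookups, and orchestration."""
    return roundtrip_ms - sum(STAGE_BUDGET_MS.values())


def over_budget(measured_ms: dict) -> list:
    """Return the stages whose measured latency meets or exceeds its budget."""
    return [stage for stage, budget in STAGE_BUDGET_MS.items()
            if measured_ms.get(stage, 0) >= budget]
```

Here `remaining_budget()` evaluates to 750 and `remaining_budget(ROUNDTRIP_CEILING_MS)` to 1250.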

Sources:

- BrandEquity: Why customer service shouldn’t be left to AI agents

- Grand View Research: India voice commerce market outlook

Multilingual Creative at Scale: Leveraging a 175 Language Video Platform for Tier-2 Voice Adoption

In the Bharat market, visual comprehension is as vital as auditory understanding. Users in tier-2 and tier-3 cities often exhibit higher engagement rates with short-form video content than with text-based product descriptions. To meet this demand, enterprises are adopting Hinglish AI video creation tools to produce localized explainers at a scale previously thought impossible. TrueFan AI’s 175+ language support and Personalised Celebrity Videos allow brands to generate millions of unique video variants that address specific user queries in real time.

A multilingual AI video generator enables a “create once, distribute everywhere” strategy. A single master creative for a new skincare product can be automatically localized into Marathi, Bengali, Tamil, and Telugu, complete with accurate lip-sync and voice retention. This helps preserve the emotional resonance of the original campaign across linguistic boundaries. When these videos are triggered by voice queries, such as a user asking, “Is cream ko kaise use karte hain?” (“How do I use this cream?”), the result is a seamless, high-conversion experience.

Beyond simple translation, Hinglish AI video creation accounts for cultural nuances. It ensures that transliterations are contextually correct and that the visual elements (such as currency symbols or local store pins) align with the viewer’s reality. For an enterprise, this means deploying a 175 language video platform that integrates directly with the Product Information Management (PIM) system, allowing for the instant generation of Bharat market AI videos whenever a new SKU is added or a price changes.
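
The PIM-triggered generation described above can be sketched as a small planner that turns catalog events into per-language render jobs. Everything here is hypothetical: the event shape, the `RenderJob` fields, and the event names are assumptions for illustration and do not describe TrueFan’s or any PIM vendor’s actual API.

```python
from dataclasses import dataclass
from typing import List

# Assumed event types that should trigger a re-render.
TRIGGER_EVENTS = {"sku_created", "price_changed"}


@dataclass(frozen=True)
class RenderJob:
    sku: str
    language: str
    reason: str


def plan_render_jobs(event: dict, languages: List[str]) -> List[RenderJob]:
    """Create one render job per target language for qualifying PIM events."""
    if event.get("type") not in TRIGGER_EVENTS:
        return []  # ignore events such as stock updates
    return [RenderJob(event["sku"], language, event["type"])
            for language in languages]
```

In practice the returned jobs would be pushed to a queue so that rendering stays off the request path.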

Strategic Delivery Surfaces:

- WhatsApp Business API: Sending personalized video reorder prompts.

- PDP (Product Detail Page) Embeds: Replacing static images with regional dialect shopping videos.

- IVR Callbacks: Sending a video link via SMS after a voice-based support call.

Sources:

- TrueFan Enterprise Documentation (Internal Product Intelligence).

- Technavio: Voice Commerce Market industry analysis

Smart Speaker Commerce Integration and Voice SEO Optimization for the Hinglish Market

The integration of smart speakers into the Indian household has created a new channel for passive and active commerce. Smart speaker commerce integration allows users to manage their households through simple voice commands on Alexa or Google Assistant. For enterprises, this means developing account-linked “skills” that enable frictionless reordering. A user saying, Alexa, mera pichhli baar wala detergent order kar do (Alexa, order my detergent from last time), triggers a background check of the order history, a price confirmation in Hinglish, and a UPI payment request sent to the user’s phone.
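
The reorder flow above (order-history check, Hinglish price confirmation, UPI request) can be sketched as a single dialogue-turn function. This is a simplified illustration under assumptions: the `Order` fields, action names, and Hinglish prompts are made up for the example, and no real Alexa, Google Assistant, or UPI API is called.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Order:
    sku: str
    name: str
    category: str
    price_inr: int


def find_last_order(history: List[Order], category: str) -> Optional[Order]:
    """History is oldest-first; return the most recent order in the category."""
    for order in reversed(history):
        if order.category == category:
            return order
    return None


def reorder_turn(history: List[Order], category: str, confirmed: bool) -> dict:
    """Decide the next action for a voice reorder request."""
    order = find_last_order(history, category)
    if order is None:
        return {"action": "ask_clarification",
                "prompt": "Kaun sa product order karna hai?"}
    if not confirmed:
        # Read the price back before any payment step.
        return {"action": "confirm_price",
                "prompt": f"{order.name}, {order.price_inr} rupaye. Order kar doon?"}
    return {"action": "send_upi_request",
            "sku": order.sku, "amount_inr": order.price_inr}
```

Keeping the price confirmation as its own turn means the payment request is only issued after the user has heard and accepted the amount.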

To be discoverable in this voice-first world, voice SEO optimization is mandatory. Unlike traditional SEO, which focuses on short-tail keywords, voice SEO targets long-tail, conversational queries. Enterprises must optimize their content for how people speak, not just how they type. This includes creating FAQ sections in regional languages and using structured data (Schema.org) to help voice assistants parse product details, prices, and availability in specific localities.

Solutions like TrueFan AI demonstrate ROI through the creation of video-based “Voice Snippets.” When a user asks a “How-to” question via a voice assistant, the system can serve a short, localized video explainer that not only answers the question but also provides a direct link to purchase. This synergy between voice search and video content is the key to winning “Position Zero” in the Bharat market.

Voice SEO Cheatsheet for Hinglish:

- Target Intent: “Best [Product] under [Price] near me.”

- Schema Markup: Use FAQPage and Product schema, and declare the content language (for example, with inLanguage on the FAQPage).

- Transliteration: Include common misspellings and local aliases in meta tags.

- Locality Tags: Ensure city and district names are present in regional scripts.
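
As an illustration of the schema markup item, the sketch below builds Schema.org `FAQPage` JSON-LD for a regional-language FAQ. The `FAQPage`, `Question`, `Answer`, `mainEntity`, `acceptedAnswer`, and `inLanguage` terms are standard Schema.org vocabulary; the helper name and the sample question are assumptions for the example.

```python
import json


def faq_jsonld(language: str, qa_pairs: list) -> str:
    """Serialize (question, answer) pairs as FAQPage JSON-LD."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": language,  # BCP 47 tag such as "hi" or "ta"
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    # ensure_ascii=False keeps regional scripts readable in the output.
    return json.dumps(doc, ensure_ascii=False, indent=2)


if __name__ == "__main__":
    print(faq_jsonld("hi", [("डिलीवरी कितने दिन में होगी?", "दो से तीन दिन में।")]))
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element.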

Sources:

- BusinessLine: Meesho’s voice strategy to onboard next 500M users

- BrandEquity: Service quality considerations in AI deployments

Enterprise Implementation Roadmap: From Pilot to 180-Day Scale

Transitioning to a voice-and-video-first commerce model requires a phased approach to manage technical complexity and ensure brand safety. The following 180-day roadmap outlines the journey from initial discovery to full-scale deployment across the Bharat market.

Phase 1: Discovery and Data (Days 0–30)

The first month focuses on identifying high-impact categories—typically FMCG, beauty, or small appliances—where voice reordering and video explainers provide the most value. Enterprises must collect dialect-specific corpora to train their ASR and NLU models. This stage also involves instrumenting baseline metrics, such as current voice query volume and conversion rates on existing PDPs.

Phase 2: MVP in One Region (Days 30–60)

Launch a pilot in a single linguistic region (e.g., Hindi-speaking North India). This involves building the basic conversational shopping AI flow for search and reordering. Simultaneously, produce a set of regional dialect shopping videos for the top 50 SKUs using TrueFan’s enterprise templates. Integrate UPI and COD (Cash on Delivery) as the primary payment methods to align with local preferences.

Phase 3: Expansion and Optimization (Days 60–180)

Once the pilot proves successful, the enterprise can scale to additional languages like Tamil, Bengali, and Marathi. This phase includes the full rollout of a multilingual AI video generator for lifecycle marketing—sending personalized “win-back” videos to dormant users in their native language. Continuous tuning of Word Error Rate (WER) thresholds per dialect ensures that the system remains accurate as it encounters more diverse speech patterns.
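
Word Error Rate is the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. The sketch below computes it and averages it per dialect; the function names and the sample tuple format are assumptions for illustration.

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word Error Rate: (substitutions + deletions + insertions) / reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Rolling-row Levenshtein distance over words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)


def mean_wer_by_dialect(samples):
    """samples: iterable of (dialect, reference, hypothesis) tuples."""
    totals = {}
    for dialect, reference, hypothesis in samples:
        running_sum, count = totals.get(dialect, (0.0, 0))
        totals[dialect] = (running_sum + wer(reference, hypothesis), count + 1)
    return {dialect: s / n for dialect, (s, n) in totals.items()}
```

Comparing each dialect’s mean against its own threshold shows where additional training data is most needed.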

Enterprise Technical Requirements:

- SSO & RBAC: Secure access for marketing and engineering teams.

- API-First Architecture: Trigger-based video generation for cart abandonment.

- ISO 27001 / SOC 2: Ensuring data security and compliance.

Sources:

- Fashion Network: India e-commerce forecast for 2026

- TrueFan Enterprise Offering (Internal Product Intelligence).

Governance, Compliance, and ROI: Measuring Bharat Market AI Videos

As enterprises deploy AI voice cloning for Indian accents and video generation at scale, governance becomes a critical pillar. The Digital Personal Data Protection (DPDP) Act in India mandates explicit consent for voice capture and processing. Systems must be designed with “privacy-by-design,” ensuring that users are informed in their regional language about how their voice data will be used. Consent logs must be immutable and easily auditable.

Furthermore, the use of AI voice cloning for Indian accents requires strict ethical guardrails. Enterprises must ensure that any voice models used, whether they are celebrity clones or brand-specific voices, are created with explicit permission and used only for the intended purpose. Content moderation tools are essential to prevent the generation of offensive or off-brand content, especially when using generative AI to script Hinglish utterances.
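
One common way to make a consent log auditable is to hash-chain its entries, so that any later edit breaks verification. The sketch below is a minimal in-memory illustration under assumptions: the record fields are hypothetical, and it is tamper-evident rather than truly immutable, so a production system would still need write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record."""
    payload = json.dumps(record, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_consent(log: list, record: dict) -> dict:
    """Append a consent record, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor can run `verify` over an exported log to confirm that no consent record was altered after it was written.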

Measuring the ROI of these initiatives requires a shift from traditional web metrics to “conversational KPIs.” Success is measured by intent recognition accuracy, the reduction in WER across dialects, and the lift in Average Order Value (AOV) driven by personalized video recommendations. Early benchmarks suggest that integrating Bharat market AI videos into the customer journey can lead to a 15–25% increase in conversion rates on product pages, as visual and linguistic barriers are removed.

Core KPIs for Voice & Video Commerce:

- Intent Accuracy: Percentage of voice queries correctly mapped to actions.

- WER by Dialect: Accuracy of speech-to-text across different regional accents.

- CVR Lift: Conversion rate increase on PDPs with vernacular videos.

- Reorder Rate: Frequency of voice-led repeat purchases.

- NPS/CSAT: Customer satisfaction with the conversational experience.
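
Two of the KPIs above reduce to simple ratios. The sketch below shows intent accuracy and relative CVR lift; the function names are illustrative, and a real measurement would also need a proper A/B test design and significance testing, which this sketch does not cover.

```python
def intent_accuracy(correct: int, total: int) -> float:
    """Share of voice queries mapped to the right action."""
    if total <= 0:
        raise ValueError("total must be positive")
    return correct / total


def conversion_rate(orders: int, sessions: int) -> float:
    """Orders divided by sessions for one PDP variant."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    return orders / sessions


def relative_lift(treatment_rate: float, control_rate: float) -> float:
    """Relative CVR lift of vernacular-video pages over the control."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (treatment_rate - control_rate) / control_rate
```

For example, a control PDP converting 20 of 1,000 sessions and a vernacular-video PDP converting 24 of 1,000 gives a relative lift of about 0.20, or 20%.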

Sources:

- BrandEquity on AI agents and service quality

- Grand View Research: Voice commerce market outlook (India)

Conclusion: The Future is Spoken and Visual

The convergence of vernacular voice commerce and AI-driven video content marks a turning point for the Indian enterprise. By moving beyond the linguistic limitations of the past, brands can finally speak the language of the “Next 500 Million,” both literally and figuratively. The roadmap to success involves a disciplined integration of conversational shopping AI, Hinglish AI video creation, and a commitment to regional personalization.

As we look toward the end of 2026, the leaders in the Indian market will be those who have successfully bridged the gap between complex technology and simple, human-centric conversation. The tools to build this future—from 175 language video platforms to sophisticated voice cloning—are available today. The only question is how quickly your enterprise will move to claim its stake in the Bharat market.

Ready to lead the Bharat shift?

Book an enterprise demo of Studio by TrueFan AI to launch a vernacular voice commerce pilot in 90 days, combining conversational shopping AI, Hinglish AI video creation, a multilingual AI video generator, and AI voice cloning for Indian accents, all integrated with your commerce stack. Deliver Bharat market AI videos that resonate, convert, and scale.

Frequently Asked Questions

How do we implement conversational shopping AI for tier-2 voice adoption?

Implementation begins with a robust ASR and NLU pipeline specifically trained on Indian code-mixed data (Hinglish). Follow the phased roadmap: start with high-volume categories, map common intents like price check and reorder, and integrate these flows into your mobile app and WhatsApp channels. TrueFan AI can be integrated at this stage to provide real-time video responses to voice queries, enhancing user understanding and trust.

Which Indian accents and languages should we start with?

Begin with Hinglish (Hindi–English mix) to cover the largest tier-2 demographic. Then prioritize regional languages by SKU distribution—typically Tamil, Telugu, Bengali, and Marathi. Test for dialectal variance within each language to ensure robustness against regional slang and colloquialisms.

How do we measure the success of Bharat market AI videos?

Track engagement and conversion metrics: View-Through Rate (VTR), Click-Through Rate (CTR) on in-video “Buy Now” CTAs, and CVR lift on pages with vernacular videos versus text-only pages. Also monitor reorder rate and AOV improvements attributable to video explainers.

Is AI voice cloning for Indian accents compliant with Indian law?

It can be, provided it adheres to India’s DPDP Act. Obtain explicit, informed consent from the individual whose voice is cloned and from end users whose voice data is processed. Maintain auditable consent logs and provide opt-out and deletion controls.

What is the typical ROI for a 175 language video platform?

Early benchmarks suggest a 15–25% CVR uplift on product pages, along with reduced support costs due to video explainers and higher LTV through frictionless voice-led reordering. Automation across 175+ languages also lowers cost per creative, improving overall ROI.