Krutrim AI video generator India 2026: Build vernacular text-to-video with Bhashini APIs (and when to choose TrueFan AI vs HeyGen)

Estimated reading time: ~11 minutes

Key Takeaways

- India’s sovereign AI stack pairs Bhashini language APIs with Krutrim compute to deliver on-shore, vernacular video.

- Bodhi‑1 chips (2026) aim to cut latency and cost for multimodal generation, boosting Indic text‑to‑video pipelines.

- For production scale, Studio by TrueFan AI bridges Bhashini audio with high‑fidelity, compliant avatars.

- HeyGen vs Krutrim isn’t app vs app; match infrastructure to needs: data residency, compliance, realism, and ROI.

- Government & enterprise should prioritize watermarking, consent logs, captions, and sovereign compute.

Digital communication in India is shifting as the Krutrim AI video generator India 2026 vision moves from a conceptual roadmap toward a functional, sovereign AI stack. For Indian creators, developers, and government agencies, generating high-quality, lip-synced video in the 22 scheduled languages is becoming practical through the convergence of Bhashini’s language APIs and domestic compute infrastructure.

By 2026, the “Made in India” AI ecosystem will be defined by its ability to handle the nuances of Indic dialects—from the tonal shifts in Marathi to the rhythmic cadence of Tamil—without relying on Western-centric models that often struggle with regional accents. This guide explores how you can leverage this sovereign stack today, the role of the upcoming Krutrim silicon, and how to navigate the choice between domestic innovators and global giants.

TL;DR: The State of Indian AI Video in 2026

What exists today is a powerful “Lego-style” pipeline: you can use Bhashini APIs for language processing and pair them with a separate rendering engine. While a single-click “Krutrim Video” consumer app is still evolving, the underlying infrastructure, including Bhashini’s free access tier for prototyping, allows for enterprise-grade localization. For those requiring immediate, production-ready scale with 175+ languages and strict Indian data residency, platforms like Studio by TrueFan AI bridge these government-backed APIs and high-fidelity AI avatars.

Who this is for:

- Regional Language Creators: Looking to scale content across the 22 scheduled languages.

- EdTech Platforms: Needing to localize thousands of hours of lectures with perfect accent fidelity.

- Government Communicators: Seeking a government AI video generator India solution that complies with the IndiaAI Mission.

- Enterprise Builders: Comparing Krutrim vs HeyGen for Hindi content to optimize for TCO (Total Cost of Ownership) and data residency.

1. What “Krutrim AI video generator India 2026” really means: Today vs 2026 Outlook

When we discuss the Krutrim AI video generator India 2026, we are looking at the emergence of India’s first full-stack sovereign AI cloud. Founded by Bhavish Aggarwal, Krutrim is not just a chatbot; it is a multi-layered infrastructure designed to decouple Indian AI from global dependencies.

The 2026 Roadmap: Silicon and Sovereignty

The most significant milestone on the horizon is the launch of Bodhi-1, Krutrim’s first indigenous AI chip, slated for production in 2026. According to The Economic Times report on Krutrim launching its first AI chip in 2026, the chip is designed for frontier LLMs and AI inference, which is expected to reduce the latency and cost of generating video content domestically.

By 2026, the Krutrim stack will likely include:

- Bodhi-1 Silicon: Powering high-speed video rendering and LLM inference on Indian soil.

- Krutrim Cloud Expansion: A planned 1GW cloud capacity to host massive multimodal models, as reported by Data Center Dynamics: 1GW cloud expansion from 2026.

- Kruti Assistant Integration: An agentic layer that can orchestrate video production workflows using simple vernacular prompts.

Currently, if you are searching for a Krutrim AI avatar generator Hindi Tamil, you are essentially looking at a pipeline where Krutrim provides the LLM (Large Language Model) capabilities and cloud hosting, while specialized engines handle the visual synthesis. This “sovereign compute” model ensures that sensitive data—especially for government and enterprise use—never leaves Indian borders.

Source: Krutrim Official Site, YourStory: India’s first AI chips by 2026

2. Architecture: How Bhashini powers text-to-video AI in Indian languages

To build a vernacular AI video generator India domestic 2026 solution, one must understand Bhashini, the National Language Translation Mission (NLTM). Bhashini acts as the “neural center” for Indian language processing, providing the APIs necessary to convert text into natural-sounding speech across 22 languages.

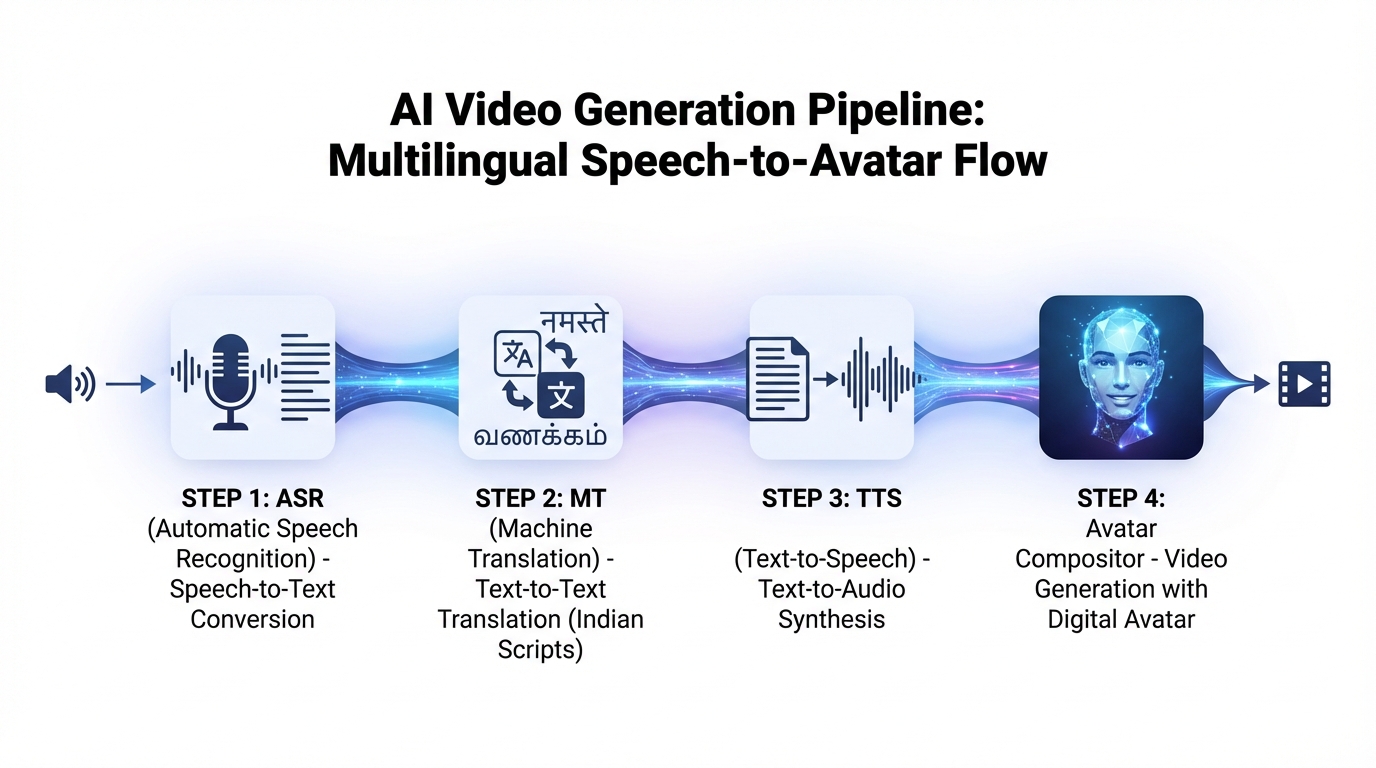

The 4-Layer Pipeline

A robust text-to-video AI Indian languages Bhashini architecture consists of four distinct stages:

- ASR (Automatic Speech Recognition): Converts existing audio (like a Hindi lecture) into text. This is critical for repurposing legacy content.

- MT (Machine Translation): Translates the source text into any of the 22 scheduled languages while maintaining context and cultural nuance.

- TTS (Text-to-Speech): This is where Bhashini shines. It generates high-fidelity audio with regional accents. By 2026, these models are expected to support advanced SSML (Speech Synthesis Markup Language) for better prosody and emotional depth.

- Avatar/Compositor: The final stage, where the audio is synced to a virtual human (an AI avatar). This is where AI video creator tools for India’s scheduled languages come into play, mapping phonemes to visemes (lip movements).
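The four stages can be pictured as pluggable functions chained in order. The following is a minimal sketch, not an official SDK: `PipelineStages` and `localize` are hypothetical names, and each callable stands in for whichever ASR, MT, TTS, or rendering service you actually wire in.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PipelineStages:
    """Four pluggable stages; each callable is a hypothetical stand-in for a real service."""
    asr: Callable[[bytes], str]           # source audio -> source text
    translate: Callable[[str, str], str]  # (text, target language) -> translated text
    tts: Callable[[str, str], bytes]      # (text, target language) -> speech audio
    compose: Callable[[bytes], bytes]     # speech audio -> rendered video


def localize(stages: PipelineStages, source_audio: bytes, target_lang: str) -> bytes:
    """Run ASR, MT, TTS, and composition in sequence for one target language."""
    text = stages.asr(source_audio)
    translated = stages.translate(text, target_lang)
    speech = stages.tts(translated, target_lang)
    return stages.compose(speech)
```

Keeping each stage behind a plain callable makes it straightforward to swap one provider for another, or to skip ASR entirely when you already have a written script.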

The ULCA Advantage

Bhashini operates on the ULCA (Universal Language Contribution API) platform. This is a national data pipeline that aggregates datasets from across India to benchmark and improve model accuracy. For developers, this means that an AI video generator 22 languages India strategy is backed by nationally aggregated, benchmarked Indic datasets.

Source: Bhashini Field Guide (1st Edition), Bhashini Official Site

3. Hands-on: Bhashini API text-to-video integration (Step-by-Step Tutorial)

For those looking for an Indian language AI video generator free path for prototyping, Bhashini offers a robust API ecosystem. Follow this guide to integrate Bhashini’s TTS into your video workflow.

Pre-requisites

- Register at the Bhashini API Portal.

- Obtain your userID and ulcaApiKey.

- Access the Postman Collection for Bhashini for rapid testing.

Step 1: Discover Models with Pipeline Search

You must first identify the best model for your specific language pair (e.g., English to Telugu).

- Endpoint: https://meity-auth.ulcacontrib.org/ulca/auth/v1/subsystem/register/

- Action: Use the Pipeline Search API to find TTS engines that support your target dialect.
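A Pipeline Search call for this step could be assembled roughly as follows. This is a sketch under stated assumptions: the header names reuse the userID and ulcaApiKey credentials from the pre-requisites, while the body fields (`pipelineTasks`, `taskType`, `pipelineRequestConfig`, `pipelineId`) and the helper name `build_pipeline_search_request` are assumptions to verify against the Bhashini API documentation or the Postman collection. The endpoint is passed in rather than hard-coded so you can use the exact URL from the docs.

```python
import json


def build_pipeline_search_request(
    endpoint: str,
    user_id: str,
    ulca_api_key: str,
    source_lang: str,
    target_lang: str,
    pipeline_id: str,
) -> dict:
    """Describe a Pipeline Search call for translation followed by TTS.

    Header and body field names are assumptions; check them against the
    Bhashini API documentation before sending anything.
    """
    body = {
        "pipelineTasks": [
            {
                "taskType": "translation",
                "config": {"language": {"sourceLanguage": source_lang,
                                        "targetLanguage": target_lang}},
            },
            {
                "taskType": "tts",
                "config": {"language": {"sourceLanguage": target_lang}},
            },
        ],
        "pipelineRequestConfig": {"pipelineId": pipeline_id},
    }
    return {
        "method": "POST",
        "url": endpoint,
        "headers": {
            "userID": user_id,
            "ulcaApiKey": ulca_api_key,
            "Content-Type": "application/json",
        },
        "body": json.dumps(body),
    }
```

The returned dictionary only describes the request; it can be handed to whichever HTTP client you prefer, which keeps credentials handling and retries out of the payload-building logic.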

Step 2: Generate High-Fidelity TTS

Once you have the modelID, send your script to the TTS endpoint. To ensure naturalness in Hindi or Tamil, use SSML tags to control the “prosody rate” and “pitch.”

- Pro Tip: For Indian languages, keep sentences under 15 words to avoid robotic intonation. Use the <break time="500ms"/> tag after commas to mimic natural Indian speech patterns.
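The script preparation in this step can be automated before the text reaches the TTS endpoint. The sketch below flags sentences longer than 15 words and wraps a script in standard SSML `speak`, `prosody`, and `break` elements. The function names and default rate and pitch values are illustrative, and whether a given Bhashini TTS model honours SSML is an assumption to confirm for the model you select.

```python
import re
from xml.sax.saxutils import escape

MAX_WORDS = 15  # sentence-length guideline from this step


def long_sentences(script: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return the sentences in the script that exceed the word limit."""
    # Split after Latin sentence punctuation or the Devanagari danda.
    parts = re.split(r"(?<=[.!?।])\s+", script)
    sentences = [s.strip() for s in parts if s.strip()]
    return [s for s in sentences if len(s.split()) > max_words]


def to_ssml(script: str, rate: str = "95%", pitch: str = "+0%", pause_ms: int = 500) -> str:
    """Wrap a script in SSML with a prosody element and a pause after each comma."""
    text = escape(script)  # escape &, <, > so the markup stays well-formed
    text = text.replace(",", f',<break time="{pause_ms}ms"/>')
    return f'<speak><prosody rate="{rate}" pitch="{pitch}">{text}</prosody></speak>'
```

Running `long_sentences` first gives writers a list of lines to shorten, so the SSML step only has to deal with pacing rather than compensating for overlong sentences.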

Step 3: Compose the Video

With your Bhashini-generated audio file, you have two paths:

- Option A (Automated Avatar): Use a rendering engine that accepts external audio. You can upload the Bhashini .wav file and sync it to a pre-licensed avatar.

- Option B (Dynamic Slides): For EdTech, sync the audio timeline with on-screen text and animations using a Python-based compositor like MoviePy.
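For Option B, the compositor first needs to know when each slide should appear. As a rough sketch using only the Python standard library, the code below reads the duration of the generated .wav file and splits it across slides in proportion to their word counts. The proportional split is a simplifying assumption (it is not word-level alignment), and `wav_duration_seconds` and `slide_timeline` are hypothetical helper names.

```python
import wave


def wav_duration_seconds(path: str) -> float:
    """Return the playback length of a PCM .wav file in seconds."""
    with wave.open(path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def slide_timeline(slides: list[str], total_seconds: float) -> list[tuple[float, float, str]]:
    """Assign each slide a (start, end, text) window proportional to its word count."""
    weights = [max(len(text.split()), 1) for text in slides]
    total_weight = sum(weights)
    timeline = []
    start = 0.0
    for text, weight in zip(slides, weights):
        end = start + total_seconds * weight / total_weight
        timeline.append((round(start, 3), round(end, 3), text))
        start = end
    return timeline
```

The resulting start and end times can then be passed to a compositor such as MoviePy to place each text clip on the audio timeline.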

This Bhashini AI video API tutorial demonstrates that while the “video” part is the visual shell, the “soul” of the content—the language—is now a solved problem thanks to the IndiaAI Mission.

Source: Bhashini API Documentation, UselessAI Community Guide: Implementing Bhashini 0.2

4. Krutrim vs HeyGen for Hindi/Tamil: The 2026 Comparison

Choosing between a made in India AI video tools stack and a global leader like HeyGen requires a nuanced look at ROI, compliance, and cultural accuracy.

| Feature | Krutrim (Sovereign Stack) | HeyGen (Global Leader) | Studio by TrueFan AI (Enterprise India) |
|---|---|---|---|
| Language Realism | High (Bhashini-backed) | Moderate (Generalist) | High (175+ Languages) |
| Data Residency | 100% India (On-shore) | Global/US-based | India-Resident Options |
| Avatar Diversity | Indian-centric | Global/Diverse | Licensed Indian Influencers |
| Compliance | IndiaAI Mission Aligned | GDPR/SOC 2 | ISO 27001 & SOC 2 |
| Rendering Speed | Improving (2026 Chips) | Fast | 30-second render time |

Why “Krutrim vs HeyGen for Hindi content” is the wrong question

In 2026, the debate isn’t about which app is better, but which infrastructure fits your use case. HeyGen offers incredible photorealism, but for a government agency or a bank, the risk of data leaving the country is a dealbreaker.

Studio by TrueFan AI’s 175+ language support and AI avatars provide a middle ground: the ease of a global SaaS with the compliance and cultural “soul” of an Indian product. By using licensed digital twins of real Indian influencers, it avoids the “uncanny valley” often found in Western models trying to mimic Indian facial expressions.

Source: TrueFan AI 2026 Benchmark, NASSCOM AI Market Report (Express Computer)

5. Government Communicator Playbook: 22 Languages & IndiaAI Mission

The government AI video generator India initiative is a core pillar of the $1.25 billion IndiaAI Mission. For public sector units (PSUs) and ministries, the goal is “Jan Bhashini”: bringing the benefits of AI to every citizen in their mother tongue.

The Compliance Checklist

If you are building for the public sector, your AI video creator for scheduled languages India must meet these criteria:

- Watermarking: Every video must have a non-removable digital watermark to prevent deepfake misuse.

- Consent Logs: If using a human avatar, a clear chain of digital consent must be documented.

- Accessibility: Videos must include burned-in captions in the target language (e.g., Telugu captions for a Telugu audio track).

- Sovereign Compute: Prioritize tools that run on Indian clouds like Krutrim to ensure data sovereignty.
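The checklist above lends itself to an automated pre-publish gate. The sketch below is illustrative only: `VideoRelease` and its fields are hypothetical metadata you would record per rendered video, and the `"IN"` region code is an assumed convention for India-hosted compute, not a mandated format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VideoRelease:
    """Hypothetical metadata record for one rendered video."""
    watermarked: bool
    uses_human_avatar: bool
    consent_log_id: Optional[str]    # None when no consent record is attached
    audio_language: str
    caption_language: Optional[str]  # None when no captions are burned in
    compute_region: str              # assumed convention: "IN" for India-hosted compute


def compliance_gaps(release: VideoRelease) -> list[str]:
    """Return the checklist items this release fails; an empty list means it passes."""
    gaps = []
    if not release.watermarked:
        gaps.append("watermark missing")
    if release.uses_human_avatar and not release.consent_log_id:
        gaps.append("consent log missing")
    if release.caption_language != release.audio_language:
        gaps.append("captions missing or not in the audio language")
    if release.compute_region != "IN":
        gaps.append("rendered outside India")
    return gaps
```

Returning a list of gaps rather than a single pass/fail flag makes it easier to show reviewers exactly which requirement blocked a release.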

Creators building on IndiaAI Mission video tools are now focusing on “district-level localization.” Imagine a central government PSA (Public Service Announcement) that is automatically rendered into 500 different versions, each featuring a local dialect and a relatable avatar, delivered via WhatsApp.
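When fanning one PSA out to hundreds of districts, many districts will share the same dialect and avatar, so it can pay to render each unique combination once and reuse it. A minimal sketch of that planning step follows; the `plan_renders` helper and the district record keys (`name`, `dialect`, `avatar`) are hypothetical.

```python
from collections import defaultdict


def plan_renders(districts: list[dict]) -> dict[tuple[str, str], list[str]]:
    """Group districts by (dialect, avatar) so each unique variant is rendered once."""
    plan = defaultdict(list)
    for district in districts:
        key = (district["dialect"], district["avatar"])
        plan[key].append(district["name"])
    return dict(plan)


# Example input: three districts that need only two distinct renders.
districts = [
    {"name": "Pune", "dialect": "mr", "avatar": "avatar-a"},
    {"name": "Nashik", "dialect": "mr", "avatar": "avatar-a"},
    {"name": "Madurai", "dialect": "ta", "avatar": "avatar-b"},
]
render_plan = plan_renders(districts)
```

Each key in `render_plan` becomes one render job, and the attached district list tells the delivery step where that finished video should be sent.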

Source: MeitY IndiaAI Mission Report (2024)

6. Roadmap to 2026: Vernacular Avatars and ROI

As we look toward the end of 2026, the vernacular AI video generator India domestic 2026 market is projected to be a major contributor to India’s $17 billion AI economy. According to NASSCOM (Express Computer), AI revenue in India is estimated at $10–12 billion for FY26, reflecting a shift from experimental pilots to ROI-driven deployments.

Key Trends to Watch:

- Latency Collapse: With the Bodhi-1 chip, the time to generate a 1-minute 4K video is expected to drop from minutes to seconds.

- Hyper-Local Dialects: Bhashini is moving beyond the 22 scheduled languages to support 100+ dialects, enabling “hyper-local” marketing.

- Enterprise Automation: Solutions like Studio by TrueFan AI demonstrate ROI through 100% content compliance records and a 90% reduction in production costs compared to traditional shoots.

Businesses that adopt an “API-first” vernacular strategy now will be the ones dominating the Indian “Bharat” market in 2026. The shift from “English-first” to “Vernacular-always” is no longer optional; it is the primary driver of digital growth.

7. FAQs and Implementation Checklist

Frequently Asked Questions

Q1: Is there a single “government AI video generator India” app?

No. There is no single app. Instead, the government provides the Bhashini infrastructure (ASR/MT/TTS). You can combine these APIs with a rendering engine to create a compliant video pipeline.

Q2: How can I get Bhashini text-to-video free access India?

Bhashini offers a free tier for developers and startups to prototype. You can sign up on the Bhashini ULCA portal to get your API keys. While the language processing is free/subsidized, the video rendering (GPU cost) usually depends on the tool you use.

Q3: What is the best path for a Krutrim AI avatar generator Hindi Tamil?

The most effective path is to use Krutrim Cloud for hosting your logic and Bhashini for the language, then use a specialized tool like Studio by TrueFan AI for the final avatar rendering. This ensures your avatars look like real Indians and your data stays secure.

Q4: Can I use these tools for EdTech at scale?

Yes. By using webhooks and APIs, you can automate the creation of thousands of localized lesson intros. This is the primary use case for the AI video generator 22 languages India initiative.

Q5: How do I ensure my AI videos are ethical?

Ensure your provider uses a “consent-first” model. This means avatars are based on real people who have been paid and have licensed their likeness, rather than unauthorized deepfakes.

Implementation Checklist for 2026

- Language Audit: Identify which of the 22 scheduled languages your audience speaks.

- API Setup: Secure your Bhashini ulcaApiKey and test the Pipeline Search.

- Avatar Selection: Choose avatars that reflect the ethnicity of your target region.

- Compliance Check: Ensure your tool is ISO 27001 and SOC 2 certified.

- Pilot Run: Generate a 30-second clip in a regional dialect (e.g., Kannada) and test for accent accuracy with a native speaker.

Final Thought: The Krutrim AI video generator India 2026 era is about more than just technology; it’s about giving a voice to 1.4 billion people in the language they dream in. Whether you are a developer building on Bhashini or an enterprise scaling with Studio by TrueFan AI, the tools to win the vernacular internet are finally here.

Sources & External Links:

- Krutrim AI Chips 2026 - Economic Times

- Bhashini National Language Mission - Official Site

- IndiaAI Mission Approval - MeitY Official Report

- Krutrim 1GW Cloud Expansion - Data Center Dynamics

- India’s AI Market ROI - NASSCOM/Express Computer

- Bhashini API Postman Documentation

Recommended Internal Links

- Cinematic AI Video Generator India: 2026 Tools Compared

- IndiaAI Mission Creator Tools: Empowering Small Creators

- Real-time Interactive AI Avatars India: Live Video Chat

- Democratizing AI Video India: Tools for Small Creators

- Crisis Communication AI Video India: Rapid Response Guide

Frequently Asked Questions

What is Krutrim and how does it relate to Bhashini for text-to-video?

Krutrim is India’s sovereign AI stack providing compute and LLM infrastructure, while Bhashini supplies ASR/MT/TTS language capabilities. Together, they enable end-to-end vernacular text-to-video pipelines where on-shore compute meets high-fidelity Indic speech.

How does Studio by TrueFan AI fit into this stack?

Studio by TrueFan AI connects Bhashini-generated audio to enterprise-grade avatars and video rendering. It adds workflow automation, consent-first licensed likenesses, data residency options in India, and rapid rendering for production use.

Is data residency guaranteed for government and enterprises?

Yes—when you deploy on India-based clouds (e.g., Krutrim) and use vendors that offer on-shore processing. Ensure contracts stipulate storage/processing in India, watermarking, consent logs, and audit trails to meet regulatory expectations.

When should I choose HeyGen over a sovereign stack?

Choose HeyGen when you need top-tier photorealism and global deployment without strict on-shore constraints. If compliance, Indic authenticity, or India-only data processing are priorities, a sovereign stack with Bhashini and Krutrim plus Studio by TrueFan AI is preferable.

How will latency and costs evolve by 2026?

With Bodhi‑1 chips and expanded on-shore capacity, inference is expected to become faster and cheaper. Generating a 1‑minute 4K clip should move from minutes to seconds, improving throughput and unit economics for large-scale localization.