Answer Engine Optimization for Video: Win AI Overviews and Zero-Click SEO in 2026

Estimated reading time: ~18 minutes

Key Takeaways

- Prioritize answer-first micro-clips (15–40s) to win AI Overviews and Suggested Clips.

- Align VideoObject and Clip schema to exact user questions and PAA queries.

- Support videos with clean transcripts, TL;DR summaries, and entity-rich on-page text.

- Build E-E-A-T video authority with verified experts, disclosures, and credible citations.

- Scale globally using AI video creator SEO for multilingual, localized variants.

Answer engine optimization is the definitive frontier for enterprise brands seeking to maintain visibility in a landscape dominated by generative AI and zero-click results. As we navigate 2026, the traditional search engine results page (SERP) has evolved into a sophisticated synthesis of information where AI Overviews (AIO) provide immediate solutions. For organizations to thrive, they must pivot toward an answer engine optimization video strategy that prioritizes direct information extraction and semantic relevance.

The shift toward zero-click optimization strategies is no longer optional, as recent data suggests that over 52% of search queries in 2026 are resolved without a single click to an external website. This phenomenon is driven by the efficiency of AI systems that parse video content to deliver “Suggested Clips” and “Key Moments” directly within the search interface. By structuring video assets to be “answer-ready,” enterprises can secure high-authority citations and brand mentions even when users do not visit their primary domain.

What is Answer Engine Optimization (AEO) for Video?

Answer engine optimization (AEO) for video is the strategic practice of engineering video content so that AI systems, such as Google’s AI Overviews, ChatGPT search (which grew out of OpenAI’s SearchGPT prototype), and voice assistants, can seamlessly extract, cite, and synthesize your content into a direct answer. Unlike traditional SEO, which focuses on driving traffic to a landing page, AEO focuses on providing the most authoritative and accessible answer to a specific user query. In 2026, this requires a meticulous combination of high-fidelity transcripts, chapterized segments, and semantic mapping of video content to the questions users actually search.

The technical foundation of AEO for video involves aligning VideoObject and Clip schema to the specific questions users are asking in real-time. By 2026, AI Overviews have become the primary surface for information discovery, frequently citing YouTube segments that provide 15-to-40-second direct answers. This transition is particularly evident in the Indian market, where SEO experts highlight the increasing reliance on video-first AI summaries to bridge the gap between complex queries and user comprehension.

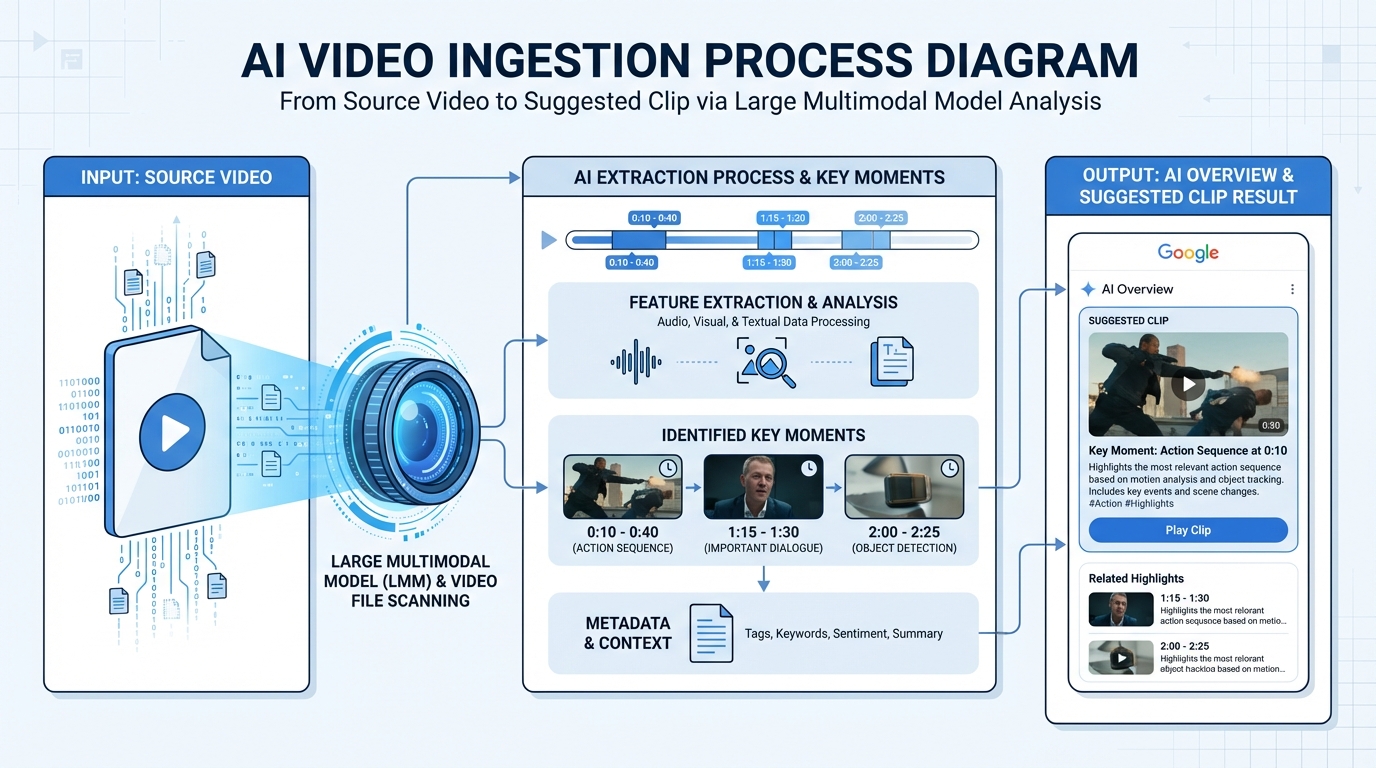

Enterprises must recognize that AI models do not “watch” video in the human sense; they ingest multi-modal data points including visual frames, audio transcripts, and metadata. To optimize for these systems, content must be “atomic”—broken down into discrete, high-value segments that address individual “People Also Ask” (PAA) queries. This granular approach ensures that your brand remains the cited authority within the AI-generated response, reinforcing your market position through E-E-A-T video authority.

Sources:

- Savit: AI SEO playbook for AI Overview inclusion

- MementoTech: Shopify SEO strategy 2026 (embedding + AIO)

- DigiMarkSol: 2026 video, schema, and FAQ guidance

How AI Overviews Ingest and Cite Video Answers

In 2026, the ingestion process for AI Overviews has become highly sophisticated, utilizing Large Multimodal Models (LMMs) to parse video data at scale. These systems analyze the video’s metadata—titles, descriptions, and tags—alongside the structured data provided via JSON-LD. However, the most critical component for AI Overviews video SEO is the alignment between the video’s internal “Key Moments” and the semantic intent of the user’s search query.

To increase the likelihood of inclusion in an AI Overview, brands must adopt an “answer-first” scripting methodology. This involves stating the direct answer to a primary question within the first 10 seconds of a video segment, followed by supporting details and nuances. AI systems prioritize these clear, concise segments because they are easily extractable for “Suggested Clips,” which Google now uses to fulfill over 35% of “How-To” and “What-Is” queries.

Furthermore, the surrounding on-page text plays a vital role in how AI systems contextualize video content. Embedding a video within a rich textual environment—complete with a 40-to-60-word “TL;DR” summary—provides the AI with a secondary confirmation of the video’s relevance. Indian SEO analyses from 2026 indicate that videos hosted on YouTube receive a significant sourcing bias in Google’s AI Overviews, making a YouTube-first hosting strategy essential for enterprise visibility.

Sources:

- MementoTech: AI Overviews + SERP modules surface YouTube

- Savit: Entity-aligned video metadata for AI visibility

Zero-Click Optimization Strategies for Video-Led SERPs

The primary objective of zero-click optimization strategies is to earn direct inclusion in AI answers, featured snippets, and PAA modules. This requires a departure from long-form, narrative-driven video toward “atomic” Q&A content. By producing 30-to-90-second micro-clips that lead with the answer and address specific query stems, such as “how to,” “why,” and “pros and cons,” enterprises can compete for the “Suggested Clips” feature that increasingly sits at the top of informational SERPs.

To maximize E-E-A-T video authority, these micro-clips must feature on-screen experts with visible credentials in the lower thirds. AI systems in 2026 are programmed to identify and prioritize content from verified experts, especially in “Your Money or Your Life” (YMYL) categories. Stating the brand name and the expert’s title within the first five seconds of the video improves the odds that, even if a user doesn't click, the brand receives a verbal and visual mention in the AI-generated summary.

Another critical tactic is the implementation of conversational SEO videos that mirror the way users interact with voice assistants and chat-based answer engines. This involves scripting content in a natural, dialogue-driven format that anticipates follow-up questions. For instance, a video explaining “Enterprise Cloud Security” should naturally lead into a micro-clip about “Common Implementation Mistakes,” creating a “conversational chain” that AI systems can use to power multi-turn interactions.

Featured Snippet Video Content and Suggested Clips Checklist:

- Question-Aligned Headings: Use exact-match questions for your video chapters.

- The 15-40 Second Rule: Ensure the core answer is delivered within this window for easy extraction.

- Verbatim Summaries: Place a short, 50-word paragraph directly under the video embed that mirrors the video's primary answer.
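The three checklist items can be checked automatically before publishing. Below is a minimal Python sketch, assuming each micro-clip is described by a hypothetical `MicroClip` record. Treating a trailing question mark as “question-aligned” and reusing the 40-to-60-word TL;DR band described earlier are simplifying heuristics, not rules any search engine publishes.

```python
from dataclasses import dataclass


@dataclass
class MicroClip:
    chapter_title: str  # should be the exact question a user would ask
    start_s: float      # where the core answer starts (seconds)
    end_s: float        # where the core answer ends (seconds)
    summary: str        # paragraph placed directly under the embed


def checklist_issues(clip: MicroClip) -> list[str]:
    """Return checklist failures for a micro-clip; an empty list means it passes."""
    issues = []
    # Heuristic (assumption): a question-aligned heading ends with a question mark.
    if not clip.chapter_title.strip().endswith("?"):
        issues.append("chapter title is not phrased as a question")
    # The 15-40 second rule for the core answer window.
    duration = clip.end_s - clip.start_s
    if not 15 <= duration <= 40:
        issues.append(f"answer runs {duration:.0f}s; target is 15-40s")
    # Verbatim summary of roughly 50 words (40-60 band, an assumption).
    words = len(clip.summary.split())
    if not 40 <= words <= 60:
        issues.append(f"summary has {words} words; target is about 50")
    return issues


if __name__ == "__main__":
    clip = MicroClip("What is answer engine optimization?", 4.0, 31.0,
                     "AEO structures content " * 16)
    print(checklist_issues(clip) or "passes")
```

A clip that passes returns an empty list; anything else doubles as a to-do list for the editor.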

People Also Ask (PAA) Targeting with Micro-Clips:

By building a PAA tree from high-volume queries, enterprises can produce a library of 12-to-20 micro-clips. Each clip should be titled exactly like a PAA question and include Clip markup to define its boundaries. The approach is consistent with India’s 2026 SEO guidance, where AEO strategies recommend FAQ and How-To structures to improve inclusion in AI-driven answers.
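One way to turn a PAA tree into a production list is a breadth-first walk, so the broad seed questions are scripted before their narrower follow-ups. This sketch assumes the tree is stored as nested dictionaries (question mapped to its follow-up questions), which is a hypothetical format; the cap of 20 mirrors the 12-to-20 clip library suggested above.

```python
from collections import deque


def flatten_paa_tree(tree: dict[str, dict], limit: int = 20) -> list[str]:
    """Breadth-first walk of a nested PAA tree: seed questions first, then follow-ups."""
    queue = deque(tree.items())
    ordered: list[str] = []
    seen: set[str] = set()
    while queue and len(ordered) < limit:
        question, follow_ups = queue.popleft()
        if question in seen:
            continue  # the same question can surface under several parents
        seen.add(question)
        ordered.append(question)
        queue.extend(follow_ups.items())
    return ordered


if __name__ == "__main__":
    # Hypothetical PAA tree gathered during SERP research.
    paa_tree = {
        "What is answer engine optimization?": {
            "How is AEO different from SEO?": {},
            "Does AEO work for video?": {"How long should a video answer be?": {}},
        },
        "What are AI Overviews?": {"Do AI Overviews cite YouTube videos?": {}},
    }
    for n, question in enumerate(flatten_paa_tree(paa_tree), start=1):
        print(f"Clip {n:02d}: {question}")
```

Each printed line becomes a clip title, which keeps clip names identical to the PAA wording.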

Sources:

- Hemant: India SEO outlook 2026—video prominence + schema-backed FAQs

- Digitiger: AEO strategy with FAQ and How-To structures

Schema Markup Video Implementation Checklist for 2026

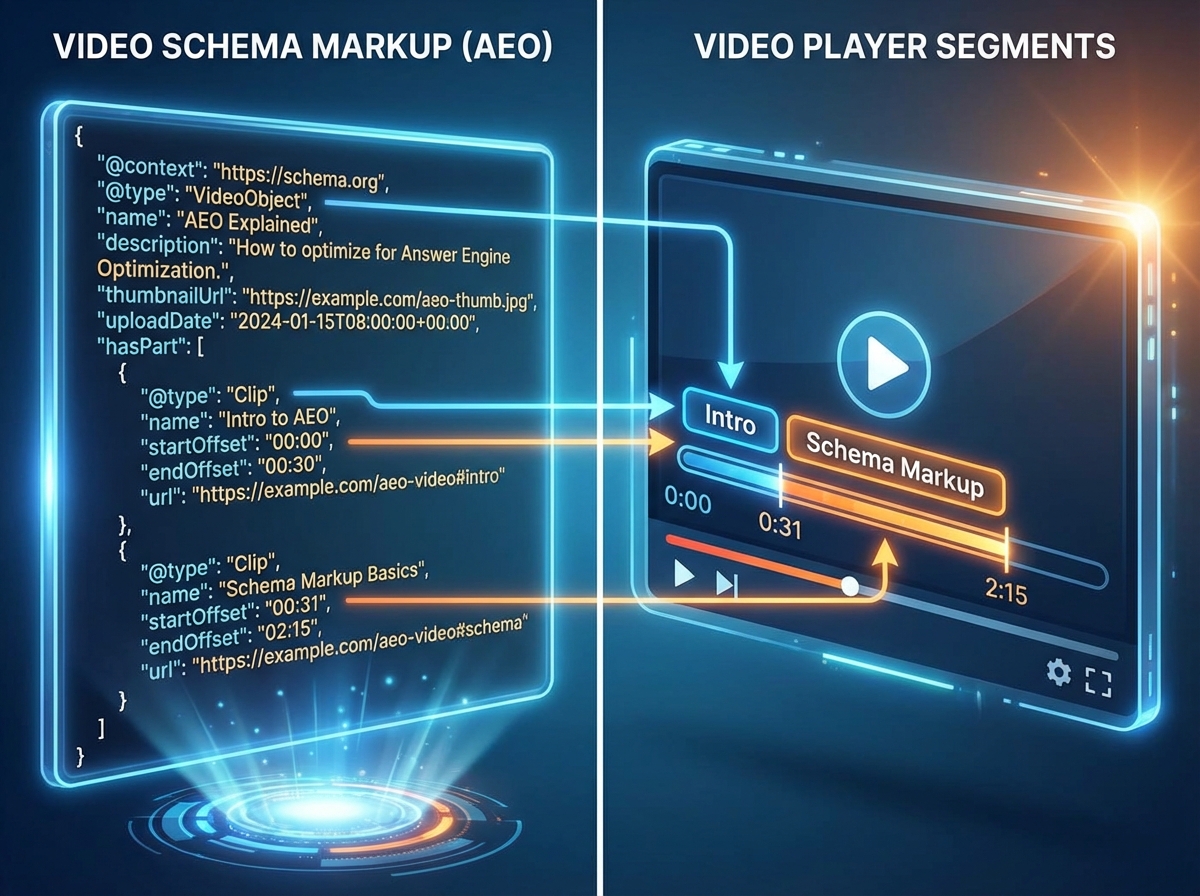

Technical precision is the bedrock of answer engine optimization video. Without robust schema markup video implementation, even the highest-quality content can remain invisible to AI ingestion engines. VideoObject schema includes granular fields that help AI systems understand the “aboutness” of a video, among them the transcript property, which should contain a clean, human-edited version of the audio to reduce the risk of AI “hallucinations” or misinterpretations.

The Clip markup is perhaps the most influential element for winning “Suggested Clips.” By defining the startOffset and endOffset for every key moment, you are essentially providing the AI with a roadmap of your content. Each clip should have a name that corresponds to a high-volume search query or a PAA question. This level of detail allows Google to jump users directly to the most relevant part of your video, increasing the “value-per-second” of your content.
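The Clip entries themselves are simple to generate from a list of timestamps. This Python sketch emits schema.org `Clip` objects for a VideoObject's `hasPart` array. The page URL is a placeholder, and the `url` deep link with a `t` parameter follows the pattern in Google's video structured-data documentation, which lists `name`, `startOffset`, and `url` as required for Clip (with `endOffset` recommended).

```python
import json


def build_clips(video_url: str, moments: list[tuple[str, int, int]]) -> list[dict]:
    """Convert (question, start_s, end_s) tuples into schema.org Clip objects."""
    # Append the timestamp as a query parameter, respecting any existing query string.
    sep = "&" if "?" in video_url else "?"
    clips = []
    for question, start, end in moments:
        if end <= start:
            raise ValueError(f"clip {question!r} must end after it starts")
        clips.append({
            "@type": "Clip",
            "name": question,                     # phrase the name as the PAA question
            "startOffset": start,                 # seconds from the start of the video
            "endOffset": end,
            "url": f"{video_url}{sep}t={start}",  # deep link that starts playback here
        })
    return clips


if __name__ == "__main__":
    # Hypothetical page URL and timestamps, for illustration only.
    clips = build_clips("https://www.example.com/aeo-video", [
        ("What is answer engine optimization?", 0, 32),
        ("How long should a video answer be?", 32, 61),
    ])
    print(json.dumps(clips, indent=2))
```

Raising on inverted offsets catches the most common hand-editing mistake before the markup ships.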

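In addition to VideoObject, enterprises can leverage FAQPage and HowTo schema to support their video assets. An FAQPage block that mirrors the questions answered in the video provides a secondary layer of semantic data. Similarly, HowTo schema optimization allows AI systems to extract step-by-step instructions, often displaying them as a numbered list in the AI Overview with your video as the primary visual reference. Since 2023, however, Google has shown FAQ rich results only for well-known government and health sites and has stopped showing HowTo rich results, so treat these types as supporting semantic context rather than a guaranteed SERP feature.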
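An FAQPage block that mirrors the video's questions can be generated from the same question list used for the clips. The sketch below builds standard schema.org `FAQPage` / `Question` / `Answer` JSON-LD. The question-and-answer text is a placeholder, and reusing the exact on-screen answer wording is an editorial assumption rather than a documented requirement.

```python
import json


def faq_page(pairs: list[tuple[str, str]]) -> dict:
    """Build FAQPage JSON-LD whose questions mirror those answered in the video."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    # Placeholder Q&A pair; in practice, reuse the verbatim answer from the clip.
    markup = faq_page([
        ("How long should an answer-ready video segment be?",
         "Between 15 and 40 seconds, with the direct answer stated first."),
    ])
    print(f'<script type="application/ld+json">{json.dumps(markup)}</script>')
```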

Essential JSON-LD Fields for 2026:

- VideoObject: name, description (answer-first), thumbnailUrl, uploadDate, contentUrl, transcript.

- Clip: @type "Clip", name (the question), startOffset, endOffset, url (a link that starts playback at the clip; Google lists it as required).

- HowTo: step, itemListElement, totalTime, supply, tool.

- Speakable: (for voice search) specifies which parts of the transcript are best suited to audio playback.
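A pre-publish lint can catch missing fields before a validator or crawler does. This sketch checks presence only (not formats or values) for the VideoObject fields listed above, plus `name`, `startOffset`, `endOffset`, and `url` on each Clip in `hasPart`. The field lists are this article's checklist, not an official requirement set, so a tool such as Google's Rich Results Test is still needed for real validation.

```python
VIDEO_FIELDS = ("name", "description", "thumbnailUrl", "uploadDate", "contentUrl", "transcript")
CLIP_FIELDS = ("name", "startOffset", "endOffset", "url")


def missing_fields(video: dict) -> list[str]:
    """Return the checklist fields that are absent or empty in a VideoObject dict."""
    problems = [f for f in VIDEO_FIELDS if not video.get(f)]
    for i, clip in enumerate(video.get("hasPart", [])):
        # Explicit None/"" test so a legitimate startOffset of 0 is not flagged.
        problems += [f"hasPart[{i}].{f}" for f in CLIP_FIELDS if clip.get(f) in (None, "")]
    return problems


if __name__ == "__main__":
    # Hypothetical asset; all URLs are placeholders.
    video = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": "What is answer engine optimization?",
        "description": "AEO structures video so AI systems can extract a direct answer.",
        "thumbnailUrl": "https://www.example.com/aeo.jpg",
        "uploadDate": "2026-01-15",
        "contentUrl": "https://www.example.com/aeo.mp4",
        "transcript": "Answer engine optimization means structuring content so AI systems can quote it.",
        "hasPart": [{"@type": "Clip", "name": "What is AEO?", "startOffset": 0, "endOffset": 32}],
    }
    print(missing_fields(video))  # the clip has no url, so it is reported
```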

Sources:

- CreativeNexus: How to add schema markup (Video/FAQ/HowTo)

- CreativeNexus: Top schema types that improve SEO

- Digitiger: FAQ/How-To usage for better indexing

- Smart Academy: 2026 digital marketing trends

Voice Search Video Marketing and E-E-A-T Authority

Voice search video marketing has become a dominant force in 2026, particularly in multilingual markets like India. Users increasingly query their devices in natural language, asking questions like “How do I set up my enterprise firewall?” rather than typing short keywords. To capture this intent, video scripts must be written for the ear, using “speakable” language that is concise and free of jargon.

Building E-E-A-T video authority in this context requires more than just good information; it requires verifiable expertise. AI systems now cross-reference the experts featured in videos with their professional profiles, such as LinkedIn or corporate bio pages. Including these links in the video description and using schema to link the author of the video to a Person entity with established credentials is a critical requirement for ranking in high-stakes AI Overviews.
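In JSON-LD, the link between a video and its expert can be expressed through the `author` property (inherited from CreativeWork) pointing to a `Person` with `jobTitle` and `sameAs` profile URLs. The sketch below is a minimal illustration. The expert's name, title, and profile URLs are hypothetical placeholders, and the markup alone cannot guarantee that a given AI system cross-references these profiles.

```python
import copy
import json


def with_expert_author(video: dict, name: str, job_title: str, profiles: list[str]) -> dict:
    """Return a copy of a VideoObject with an author Person linked to public profiles."""
    enriched = copy.deepcopy(video)  # leave the caller's dict untouched
    enriched["author"] = {
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "sameAs": profiles,  # e.g. a LinkedIn profile and the corporate bio page
    }
    return enriched


if __name__ == "__main__":
    base = {"@context": "https://schema.org", "@type": "VideoObject",
            "name": "Enterprise firewall setup"}
    # Hypothetical expert and placeholder URLs.
    video = with_expert_author(base, "Jane Doe", "Head of Network Security",
                               ["https://www.linkedin.com/in/example",
                                "https://www.example.com/team/jane-doe"])
    print(json.dumps(video, indent=2))
```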

Furthermore, transparency is a key component of trust in 2026. This includes clear disclosures regarding the use of AI in the production process. Whether it is AI-assisted scripting or virtual editing, being transparent about the production methodology enhances the “Trustworthiness” pillar of E-E-A-T. Enterprises should also include citations to reputable sources within the video description, mirroring the way academic papers cite references, to bolster their authoritativeness.

Voice Search Optimization Checklist:

- Natural Language Questions: Script the video to start with the exact question a user would speak.

- Concise Answers: Keep the initial answer under 30 seconds for voice assistant compatibility.

- Clean Audio: Ensure high-fidelity audio, as AI transcription accuracy is directly tied to audio quality.

- Conversational Chains: Record “follow-up” micro-clips to answer the next logical question in a user's journey.
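The “under 30 seconds” target can be checked at the script stage by estimating spoken duration from word count. This sketch assumes a speaking rate of about 150 words per minute, a common rule of thumb for conversational narration rather than a measured figure; at that rate, 30 seconds corresponds to roughly 75 words.

```python
def spoken_seconds(script: str, words_per_minute: float = 150.0) -> float:
    """Estimate narration time from word count at an assumed speaking rate."""
    return len(script.split()) * 60.0 / words_per_minute


def fits_voice_answer(script: str, limit_s: float = 30.0,
                      words_per_minute: float = 150.0) -> bool:
    """True if the opening answer should fit inside the voice-assistant time budget."""
    return spoken_seconds(script, words_per_minute) <= limit_s


if __name__ == "__main__":
    answer = ("To set up an enterprise firewall, start by mapping which services must be "
              "reachable, then write default-deny rules and open only those ports.")
    print(f"{spoken_seconds(answer):.1f}s, fits: {fits_voice_answer(answer)}")
```

Timing a real read-through is still more accurate; the estimate is only a fast filter for drafts.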

Sources:

- Hemant: Voice and GEO in India’s 2026 SEO—add genuine FAQs

- DigiMarkSol: Video importance + FAQ schema and organization markup

AI Video Creator SEO: Scaling Variants with TrueFan AI

Scaling an AEO strategy across a global enterprise requires more than manual production; it requires AI video creator SEO. This discipline involves using advanced AI tools to generate, localize, and optimize thousands of video assets that target long-tail queries and regional dialects. Platforms like TrueFan AI enable enterprises to transform a single “pillar” video into dozens of PAA-aligned micro-clips, each optimized with the necessary metadata and schema for AI ingestion.

One of the most significant challenges in 2026 is the need for hyper-localization. TrueFan AI's 175+ language support and Personalised Celebrity Videos allow brands to reach diverse audiences with content that feels native and authentic. By programmatically generating localized variants of Q&A videos, enterprises can win voice search queries in languages like Hindi, Tamil, and Bengali, which are often underserved by global brands. This localized approach is essential for capturing the “next billion users” who interact with the web primarily through voice and video.

Beyond localization, the ability to iterate on content is crucial for AEO success. Virtual reshoots and AI editing allow SEO teams to test different “answer phrasings” to see which one the AI Overview prefers. If a particular 20-second segment isn't being cited, it can be rapidly updated with a new script or a different expert without the need for a full production crew. This agility ensures that the enterprise's video library remains optimized for the ever-evolving algorithms of answer engines.

TrueFan AI Enterprise Capabilities for AEO:

- API-Driven Personalization: Generate thousands of personalized micro-clips for long-tail entity coverage.

- Multilingual Lip-Sync: Maintain trust and E-E-A-T by ensuring localized videos have perfect audio-visual alignment.

- Standardized Metadata Exports: Automatically generate SRTs, chapters, and VideoObject schema for every asset.

- Compliance & Governance: Produce AI-generated content within an ISO 27001 and SOC 2-aligned security program and include the necessary AI disclosures.
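The kind of metadata export described above can be approximated with a few lines of code. The sketch below is generic and does not use any TrueFan AI API. It renders SRT cues and a YouTube description chapter list from segment timestamps, and enforces YouTube's published chapter rules (the first chapter starts at 0:00, there are at least three chapters, and each lasts at least 10 seconds). The segment data in the usage example is hypothetical.

```python
def srt_time(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    h, rem = divmod(ms, 3_600_000)
    m, rem = divmod(rem, 60_000)
    s, ms = divmod(rem, 1000)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"


def to_srt(cues: list[tuple[float, float, str]]) -> str:
    """Render (start_s, end_s, text) cues as numbered SRT blocks."""
    return "\n".join(
        f"{i}\n{srt_time(start)} --> {srt_time(end)}\n{text}\n"
        for i, (start, end, text) in enumerate(cues, start=1)
    )


def chapter_stamp(seconds: int) -> str:
    """Format seconds as M:SS, or H:MM:SS for videos over an hour."""
    m, s = divmod(seconds, 60)
    h, m = divmod(m, 60)
    return f"{h}:{m:02d}:{s:02d}" if h else f"{m}:{s:02d}"


def youtube_chapters(chapters: list[tuple[int, str]], duration_s: int) -> str:
    """Build description timestamps, raising if YouTube's chapter rules are not met."""
    if len(chapters) < 3 or chapters[0][0] != 0:
        raise ValueError("need at least three chapters, the first starting at 0:00")
    ends = [start for start, _ in chapters[1:]] + [duration_s]
    for (start, title), end in zip(chapters, ends):
        if end - start < 10:
            raise ValueError(f"chapter {title!r} is shorter than 10 seconds")
    return "\n".join(f"{chapter_stamp(start)} {title}" for start, title in chapters)


if __name__ == "__main__":
    print(to_srt([(0.0, 2.5, "What is answer engine optimization?")]))
    print(youtube_chapters([(0, "Intro"), (15, "What is AEO?"),
                            (45, "How long should answers be?")], 90))
```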

Measuring AI Overviews Video SEO and Implementation Roadmap

Success in the era of answer engine optimization is measured differently than in the traditional “blue link” era. KPIs must shift from click-through rates (CTR) to “Inclusion Rate” and “Brand Mention Share” within AI Overviews. Solutions like TrueFan AI demonstrate ROI through their ability to increase the volume of cited segments across a broader range of queries, effectively turning video assets into a scalable source of brand authority.
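Neither KPI has a single standard definition, so the sketch below uses one plausible reading, stated as an assumption: Inclusion Rate is the share of tracked queries showing an AI Overview that cite at least one of your sources, and Brand Mention Share is your fraction of all citations across those Overviews. The `Observation` record and its data are hypothetical, and how citations are collected (manual review or a rank-tracking tool) is left open.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    query: str
    has_ai_overview: bool
    cited_sources: list[str] = field(default_factory=list)  # one entry per citation


def inclusion_rate(observations: list[Observation], ours: set[str]) -> float:
    """Share of observed AI Overviews that cite at least one of our sources."""
    with_aio = [o for o in observations if o.has_ai_overview]
    if not with_aio:
        return 0.0
    return sum(1 for o in with_aio if ours & set(o.cited_sources)) / len(with_aio)


def brand_mention_share(observations: list[Observation], ours: set[str]) -> float:
    """Our citations as a fraction of all citations across observed AI Overviews."""
    cites = [s for o in observations if o.has_ai_overview for s in o.cited_sources]
    if not cites:
        return 0.0
    return sum(s in ours for s in cites) / len(cites)


if __name__ == "__main__":
    # Hypothetical tracking data; "@ourbrand" stands in for your channel or domain.
    log = [
        Observation("what is aeo", True, ["@ourbrand", "competitor.com"]),
        Observation("aeo vs seo", True, ["competitor.com"]),
        Observation("aeo tools", False),
    ]
    print(inclusion_rate(log, {"@ourbrand"}), brand_mention_share(log, {"@ourbrand"}))
```

Queries without an AI Overview are excluded from both ratios, so the metrics track citation performance rather than how often Overviews appear.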

To implement a successful AEO video strategy, enterprises should follow a structured 90-day roadmap. The first 30 days cover “SERP Reconnaissance” (identifying the PAA questions and AI Overview patterns for your core keywords) and the production of pillar videos and micro-clips using AI-accelerated tools. The next two weeks are for publishing and schema validation, and the remaining period focuses on measurement, iteration, and expansion, using A/B testing to refine the “answer-first” segments that drive the most citations.

90-Day Enterprise AEO Roadmap:

- Days 1–15: Build a PAA map and select one “pillar” topic. Script answer-first intros for 12 micro-clips.

- Days 16–30: Produce content using TrueFan AI templates. Localize into top 5 regional languages and export human-edited transcripts.

- Days 31–45: Publish to YouTube and your site. Validate VideoObject and Clip schema. Update your video XML sitemap.

- Days 46–75: Monitor AI Overviews for citations. Use virtual reshoots to test alternative phrasing for segments that aren't ranking.

- Days 76–90: Expand the library with more PAA micro-clips and additional locales. Refine E-E-A-T artifacts like expert bio pages.
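For the “Update your video XML sitemap” step, entries can be generated rather than hand-edited. The sketch below uses Python's standard `xml.etree.ElementTree` and the Google video sitemap namespace, emitting the `thumbnail_loc`, `title`, `description`, and `content_loc` tags described in Google's video sitemap documentation. The page and media URLs are placeholders, and optional tags (such as duration or player_loc) are omitted.

```python
import xml.etree.ElementTree as ET

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
VID = "http://www.google.com/schemas/sitemap-video/1.1"
ET.register_namespace("", SM)        # default namespace for <urlset>, <url>, <loc>
ET.register_namespace("video", VID)  # video: prefix for the video extension tags


def video_sitemap(entries: list[dict]) -> str:
    """Serialize page/video entries as a video sitemap <urlset>."""
    urlset = ET.Element(f"{{{SM}}}urlset")
    for e in entries:
        url = ET.SubElement(urlset, f"{{{SM}}}url")
        ET.SubElement(url, f"{{{SM}}}loc").text = e["page_url"]
        video = ET.SubElement(url, f"{{{VID}}}video")
        for tag in ("thumbnail_loc", "title", "description", "content_loc"):
            ET.SubElement(video, f"{{{VID}}}{tag}").text = e[tag]
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical entry; URLs are placeholders.
    print(video_sitemap([{
        "page_url": "https://www.example.com/aeo",
        "thumbnail_loc": "https://www.example.com/aeo.jpg",
        "title": "What is answer engine optimization?",
        "description": "A 30-second, answer-first explanation of AEO.",
        "content_loc": "https://www.example.com/aeo.mp4",
    }]))
```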

Sources:

- Savit: AI Overview results frequently cite YouTube videos

- MementoTech: 2026 guides emphasize embedding videos

- DigiMarkSol: Add schema and FAQs to support discovery

- Digitiger: FAQ and How-To structures for AI answers

Frequently Asked Questions

What is the difference between SEO and AEO for video?

Traditional SEO focuses on ranking a page to get a click, while AEO focuses on providing a direct answer that an AI engine can extract and present to the user. AEO requires more granular technical data, such as Clip schema and high-fidelity transcripts.

How long should an “answer-ready” video segment be?

For AI Overviews and Suggested Clips, the ideal length for a direct answer is between 15 and 40 seconds. This allows the AI to easily cite the segment without overwhelming the user with unnecessary information.

Does YouTube hosting matter for AI Overviews?

Yes. In 2026, Google’s AI Overviews show a significant bias toward sourcing video content from YouTube. However, these videos should also be embedded on your own site with supporting text and schema to maximize authority.

How can I scale video production for thousands of PAA questions?

Platforms like TrueFan AI enable enterprises to scale production by using AI to generate localized and personalized micro-clips from a single source of truth, ensuring consistency and compliance at scale.

What is the most important schema for video AEO?

While VideoObject is the foundation, Clip markup (Key Moments) is the most critical for AEO. It tells the search engine exactly where an answer starts and ends, making the segment eligible for Key Moments and easier to cite in AI answers.