AI Video Marketing Compliance India 2026: The Enterprise Guide to DPDP Consent, ASCI AI Ad Disclosures, and Deepfake-Safe Creation


Key Takeaways

- SGI rules tighten in 2026: mandatory on-screen AI labels, C2PA provenance, pre-upload declarations, and 2–3 hour deepfake takedowns.

- DPDP consent must be free, specific, informed, unambiguous, and withdrawable—operationalized via registered Consent Managers.

- ASCI transparency requires prominent, persistent “AI-Generated” disclosures and explicit “AI Spokesperson” labeling for avatars.

- Legal safeguards demand licenses for likeness/voice; avoid “soundalikes,” and maintain rapid deepfake response playbooks.

- Studio by TrueFan AI enables a compliance-by-design stack: pre-licensed avatars, automated disclosures, and provenance.

Compliant AI video marketing in India in 2026 requires a fundamental shift from “move fast and break things” to a “compliance-by-design” framework that satisfies the Digital Personal Data Protection (DPDP) Act, the latest IT Rules amendments, and ASCI’s transparency mandates. As of February 2026, the Indian regulatory landscape has matured, and any enterprise that wants to use synthetic media without incurring major legal liability or brand erosion needs a clear compliance playbook.

The urgency is driven by the 2026 IT Rules amendments, which now mandate prominent AI labeling, persistent metadata provenance, and a strict 2-to-3-hour takedown SLA for unlawful synthetic content. Furthermore, the DPDP Act’s Section 6 now enforces consent that is free, specific, informed, and—crucially—unambiguous. Platforms like Studio by TrueFan AI enable brands to navigate these complexities by providing a “walled garden” of pre-licensed avatars and automated disclosure tools that align with these rigorous standards.

1. The 2026 Regulatory Landscape: Defining SGI and Platform Duties

In 2026, the term “Synthetically Generated Information” (SGI) has become the cornerstone of Indian digital law. SGI refers to any content (video, audio, or image) that has been created or significantly modified by AI in a way that alters its original meaning. While trivial edits like color correction are exempt, any content that misleads or simulates human presence falls within the scope of the 2026 IT Rules amendments.

The New Rules of Engagement

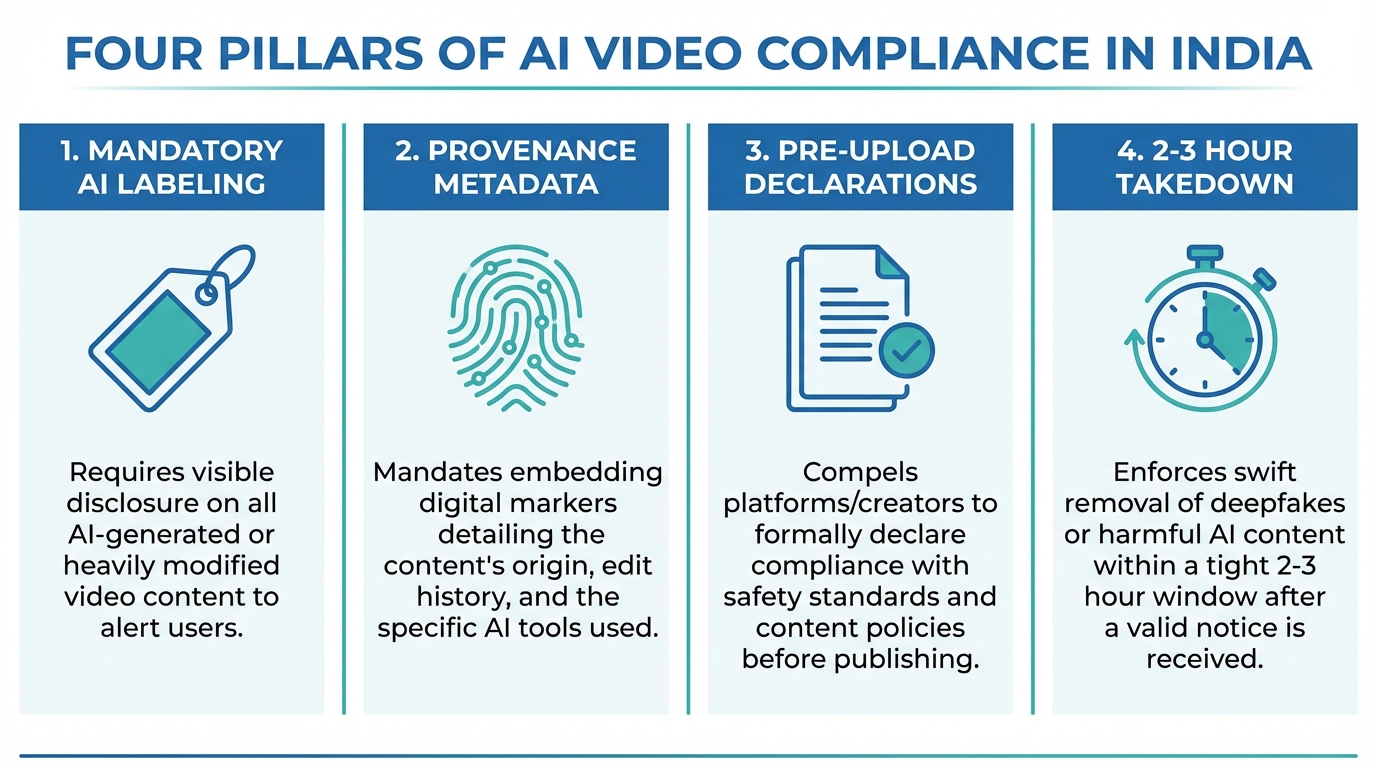

The Ministry of Electronics and Information Technology (MeitY) has established four pillars for AI video compliance:

- Mandatory AI Labeling: Every piece of SGI must carry a clear, non-removable on-screen label (e.g., “AI-Generated”).

- Provenance Metadata: Content must embed tamper-evident invisible watermarks and C2PA-compliant metadata so that the origin of the synthetic media can be traced.

- Pre-upload Declarations: Creators and brands must declare AI usage to platforms before publishing, allowing for automated classification.

- Accelerated Takedowns: For content flagged as harmful or non-consensual (deepfakes), platforms and brands must act within a 2-to-3-hour window to maintain safe harbor protections.
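As a rough illustration, the three content-level duties in this list (label, provenance, declaration) could be expressed as a pre-publish gate. This is a minimal sketch, not a format specified by MeitY: the `VideoAsset` record and its field names are hypothetical, and the takedown duty is left out because it applies after publication.

```python
from dataclasses import dataclass


@dataclass
class VideoAsset:
    """Hypothetical record describing one AI-generated video before publishing."""
    title: str
    has_onscreen_label: bool       # visible "AI-Generated" label in the frame
    has_provenance_metadata: bool  # watermark plus C2PA-style metadata embedded
    declared_to_platform: bool     # AI usage declared in the upload flow


def missing_requirements(asset: VideoAsset) -> list[str]:
    """Return the names of the pre-publish requirements the asset does not meet."""
    checks = {
        "on-screen AI label": asset.has_onscreen_label,
        "provenance metadata": asset.has_provenance_metadata,
        "pre-upload declaration": asset.declared_to_platform,
    }
    return [name for name, passed in checks.items() if not passed]


if __name__ == "__main__":
    draft = VideoAsset("Diwali offer", True, False, False)
    print(missing_requirements(draft))
```

A publishing pipeline could refuse to upload any asset for which this list is non-empty.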

According to recent 2026 industry data, 73% of Indian enterprises have now integrated AI into their marketing workflows, yet only 22% have fully automated their compliance audit trails. With the digital advertising market projected to grow at a 15% CAGR through 2029, the cost of non-compliance—which can include fines up to ₹250 crore under the DPDP Act—is simply too high to ignore.

Source: MeitY IT Rules 2026 Overview; India’s 2026 Rules Redefine AI Regulatory Compliance

2. DPDP Act Compliance: Consent Architecture for AI Video

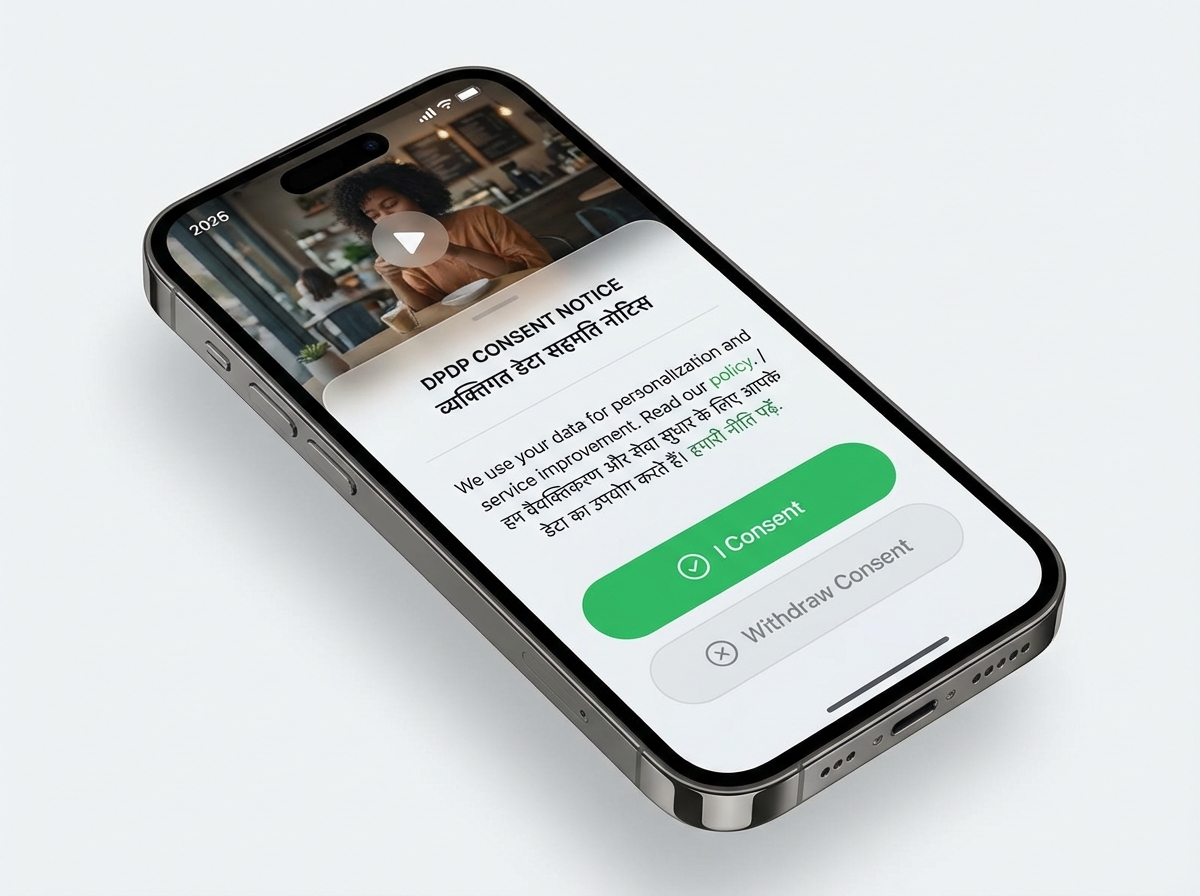

The Digital Personal Data Protection (DPDP) Act has fundamentally changed how brands handle data for personalized AI video campaigns. Under Section 6, valid consent is no longer a checkbox; it is a dynamic, withdrawable agreement. For a DPDP-compliant AI video strategy to work, the consent must be “free, specific, informed, and unambiguous.”

Implementing the “Notice and Consent” Flow

When a brand uses a customer's name, purchase history, or preferences to generate a personalized AI video, it must provide a notice in plain language (and in regional Indian languages) that specifies:

- What data is being used (e.g., “Your name and last purchase”).

- The purpose of processing (e.g., “To create a personalized anniversary greeting”).

- How to withdraw consent.
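The three notice elements above can be modeled as a small data structure that refuses to render when any element is missing. This is only a sketch under assumptions: `ConsentNotice`, `notice_gaps`, and `render_notice` are hypothetical names, and the output wording is illustrative rather than legally vetted text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentNotice:
    """Hypothetical notice shown before a personalized video is generated."""
    data_items: tuple[str, ...]  # e.g. ("name", "last purchase")
    purpose: str                 # one specific purpose, in plain language
    withdrawal_steps: str        # how the user can withdraw consent
    language: str = "en"         # language code the notice is written in


def notice_gaps(notice: ConsentNotice) -> list[str]:
    """List which of the three required elements are missing from the notice."""
    gaps = []
    if not notice.data_items:
        gaps.append("data used")
    if not notice.purpose.strip():
        gaps.append("purpose")
    if not notice.withdrawal_steps.strip():
        gaps.append("withdrawal method")
    return gaps


def render_notice(notice: ConsentNotice) -> str:
    """Render the notice as plain text; refuse to render an incomplete notice."""
    gaps = notice_gaps(notice)
    if gaps:
        raise ValueError("notice is missing: " + ", ".join(gaps))
    return (
        f"We will use: {', '.join(notice.data_items)}.\n"
        f"Purpose: {notice.purpose}\n"
        f"To withdraw consent: {notice.withdrawal_steps}"
    )
```

Keeping one `ConsentNotice` per purpose mirrors the requirement that consent be specific rather than bundled.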

The Role of Consent Managers

Many compliance discussions overlook the integration of Consent Managers. These are entities registered with the Data Protection Board that act as a single point for users to manage their permissions. In 2026, enterprise AI video funnels must integrate with these managers via API so that if a user revokes consent on a central portal, the brand's AI video engine immediately stops processing that user's data.
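The "stop processing on withdrawal" behavior can be sketched with an in-memory registry that the video engine consults immediately before each render. Everything here is an assumption for illustration: `ConsentRegistry` stands in for state synced from a Consent Manager, and no real Consent Manager API is shown because the source does not describe one.

```python
from datetime import datetime, timezone


class ConsentRegistry:
    """In-memory stand-in for consent state synced from a Consent Manager."""

    def __init__(self) -> None:
        # (user_id, purpose) -> time at which consent was granted
        self._granted: dict[tuple[str, str], datetime] = {}

    def grant(self, user_id: str, purpose: str) -> None:
        self._granted[(user_id, purpose)] = datetime.now(timezone.utc)

    def withdraw(self, user_id: str, purpose: str) -> None:
        """Handle a withdrawal event; safe to call even if no consent exists."""
        self._granted.pop((user_id, purpose), None)

    def can_process(self, user_id: str, purpose: str) -> bool:
        """Consent is purpose-specific: a grant for one purpose covers no other."""
        return (user_id, purpose) in self._granted


def render_personalized_video(registry: ConsentRegistry, user_id: str, purpose: str) -> str:
    """Check consent right before processing, not only at campaign setup."""
    if not registry.can_process(user_id, purpose):
        return "skipped: no valid consent"
    return f"rendered video for {user_id}"
```

Checking at render time rather than at list-building time means a withdrawal received mid-campaign takes effect on the next render.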

Studio by TrueFan AI’s 175+ language support and AI avatars allow brands to deliver these notices in the user's preferred language, which helps satisfy the “informed” part of the DPDP requirement. This matters because 2026 statistics show that localized AI content sees 3.5x higher ROI than generic English-only campaigns, but only if the underlying consent is legally sound.

Source: DPO India: Consent Management under DPDP; Leegality: Consent FAQ

3. ASCI Guidelines & Transparency: Disclosures for AI Ads

The Advertising Standards Council of India (ASCI) has been proactive in regulating generative AI. ASCI's guidelines on AI-generated ads mandate that any ad using synthetic elements in influencer marketing must be transparent to the consumer. This isn't just about avoiding “fake news”; it's about maintaining the trust that video marketing depends on, given that 91% of businesses now use video as a primary marketing tool.

Disclosure Placement and Duration

ASCI requires that disclosures like “AI-Generated” or “AI-Assisted” be:

- Prominent and Legible: Using a font size and color that contrasts clearly with the background.

- Persistent: In vertical videos (9:16), the label should ideally remain for the entire duration or at least the first 5 seconds of a short-form clip.

- Safe-Zone Aligned: Labels must not be obscured by platform UI elements like the “Like” button or “Share” icons.
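The persistence and safe-zone criteria above lend themselves to an automated pre-export check. The sketch below is illustrative only: the margin fractions in `ASSUMED_MARGINS` are placeholder values, not figures published by ASCI or any platform, and `Box`, `in_safe_zone`, and `duration_ok` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in pixels, origin at the top-left of the frame."""
    x: float
    y: float
    width: float
    height: float


# Assumed fractions of the frame reserved for platform UI (illustrative only).
ASSUMED_MARGINS = {"top": 0.10, "right": 0.15, "bottom": 0.20, "left": 0.05}


def in_safe_zone(label: Box, frame_w: int, frame_h: int,
                 margins: dict[str, float] = ASSUMED_MARGINS) -> bool:
    """True if the whole label sits inside the frame area not reserved for UI."""
    left = frame_w * margins["left"]
    top = frame_h * margins["top"]
    right = frame_w * (1 - margins["right"])
    bottom = frame_h * (1 - margins["bottom"])
    return (
        label.x >= left
        and label.y >= top
        and label.x + label.width <= right
        and label.y + label.height <= bottom
    )


def duration_ok(label_start: float, label_end: float, video_len: float,
                min_seconds: float = 5.0) -> bool:
    """True if the label is shown from the first frame for the required time."""
    required_end = min(min_seconds, video_len)
    return label_start <= 0.0 and label_end >= required_end
```

For a 1080x1920 vertical video, a label at `Box(60, 200, 400, 80)` passes under these assumed margins, while one placed near the bottom-right action buttons does not.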

AI Spokespersons and Influencers

If your campaign features an AI avatar—whether it's a digital twin of a real influencer or a completely virtual human—it must be explicitly labeled as an “AI Spokesperson.” This prevents consumers from being misled into thinking a human is providing a live endorsement. In 2026, ASCI has also tightened rules for LinkedIn influencers, requiring both the #ad tag and the “AI-generated” disclosure to be visible simultaneously.

Source: ASCI GenAI Whitepaper; Campaign India: ASCI Disclosure Guidelines

4. Legal Safeguards: Personality Rights and Deepfake Prevention

One of the most complex areas of AI video marketing compliance in India is the protection of “personality rights.” Following landmark cases involving actors like Anil Kapoor and Amitabh Bachchan, the Delhi High Court has made it clear: you cannot use a celebrity's voice, likeness, or even their “catchphrases” in an AI-generated video without explicit, written permission.

The “Soundalike” Trap

Many guides fail to address “soundalikes.” Even if you don't use a celebrity's name, using an AI voice that is “confusingly similar” to a famous personality can trigger a “passing off” lawsuit. In 2026, legal experts recommend:

- Explicit Licensing: Contracts must specify “AI cloning rights” and “synthetic media usage.”

- Indemnity Clauses: Ensure your AI platform provides indemnification against IP infringement.

- Morality Triggers: Licenses should include clauses that allow talent to revoke rights if the AI is used to generate content that harms their reputation.

Deepfake Compliance and Takedowns

Deepfake compliance for AI video ads requires brands to have a “takedown playbook.” If an unauthorized deepfake of your brand's CEO or spokesperson appears, the 2026 IT Rules mandate a response within 2 to 3 hours. This requires a 24/7 monitoring team and direct lines of communication with major social media platforms' grievance officers.
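A takedown playbook usually starts with a clock. The helper below computes the response deadline from the time a report is received; it is a minimal sketch, and the default of 2 hours is an assumption that picks the strict end of the 2-to-3-hour window described above.

```python
from datetime import datetime, timedelta, timezone


def takedown_deadline(reported_at: datetime, sla_hours: float = 2.0) -> datetime:
    """Latest time by which the content should be removed, given the SLA."""
    if reported_at.tzinfo is None:
        raise ValueError("reported_at must be timezone-aware")
    return reported_at + timedelta(hours=sla_hours)


def minutes_remaining(reported_at: datetime, now: datetime,
                      sla_hours: float = 2.0) -> float:
    """Minutes left before the deadline; negative once the SLA is breached."""
    return (takedown_deadline(reported_at, sla_hours) - now).total_seconds() / 60


if __name__ == "__main__":
    reported = datetime(2026, 2, 1, 10, 0, tzinfo=timezone.utc)
    print(takedown_deadline(reported).isoformat())
```

Requiring timezone-aware timestamps avoids ambiguity when the monitoring team and the platform's grievance officer work in different time zones.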

Source: Delhi High Court: Anil Kapoor vs. Simply Life India; Chambers: Personality Rights in India

5. Enterprise Governance: Brand Safety and “Compliance-by-Design”

For large-scale enterprises, manual compliance checks do not scale. The solution lies in choosing an AI video generation platform that automates brand-safety guardrails. Solutions like Studio by TrueFan AI demonstrate ROI through their “walled garden” approach, in which every avatar used is pre-licensed and every output is automatically watermarked for traceability.

The Provenance Pipeline (C2PA)

A critical technical requirement for 2026 is the adoption of C2PA (Coalition for Content Provenance and Authenticity) standards. This involves:

- Hashing: Creating a digital fingerprint of the video at the moment of generation.

- Metadata Injection: Embedding the “who, what, and how” of the AI generation into the file header.

- Validation: Allowing platforms like YouTube or Instagram to read this metadata and automatically apply the “AI-generated” tag.
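The hashing and validation steps can be illustrated with the standard library alone. This is a simplified sketch of the idea, not a C2PA implementation: real C2PA manifests are cryptographically signed and embedded with dedicated tooling, and the record layout and function names below are hypothetical.

```python
import hashlib
import json


def build_provenance_record(video_bytes: bytes, generator: str, method: str) -> dict:
    """Fingerprint the video and record who/what/how alongside the hash."""
    return {
        "sha256": hashlib.sha256(video_bytes).hexdigest(),
        "generator": generator,                 # "who": tool or team that made it
        "content_type": "AI-generated video",   # "what"
        "method": method,                       # "how": e.g. avatar synthesis
    }


def matches_record(video_bytes: bytes, record: dict) -> bool:
    """True if the bytes still hash to the recorded fingerprint."""
    return hashlib.sha256(video_bytes).hexdigest() == record["sha256"]


def to_sidecar_json(record: dict) -> str:
    """Serialize the record deterministically, e.g. for an audit log."""
    return json.dumps(record, sort_keys=True, indent=2)


if __name__ == "__main__":
    record = build_provenance_record(b"example video bytes", "studio-tool", "avatar synthesis")
    print(to_sidecar_json(record))
    print(matches_record(b"example video bytes", record))
```

Any change to the file after generation, even a single byte, produces a different hash, which is what makes the fingerprint useful as tamper evidence.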

Internal Governance Controls

Beyond external regulations, brands must implement internal “Blocklists” and “Manual Review Gates.” For high-risk sectors like financial services or healthcare, every AI-generated video should pass through a human-in-the-loop (HITL) review to ensure no “hallucinations” (AI-generated misinformation) have occurred regarding product claims or interest rates.
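A blocklist combined with a manual review gate can be sketched as a simple routing function. The sector tags, the example blocklist terms, and the three outcome labels are assumptions chosen for illustration; a production system would need far more than substring matching.

```python
HIGH_RISK_SECTORS = {"finserv", "healthcare"}  # assumed sector tags


def blocked_terms_found(script: str, blocklist: set[str]) -> list[str]:
    """Return blocklisted terms that appear in the script (case-insensitive)."""
    lowered = script.lower()
    return sorted(term for term in blocklist if term.lower() in lowered)


def review_decision(script: str, sector: str, blocklist: set[str]) -> str:
    """Reject on blocklist hits; otherwise send high-risk sectors to a human."""
    if blocked_terms_found(script, blocklist):
        return "rejected"
    if sector.lower() in HIGH_RISK_SECTORS:
        return "human_review"
    return "auto_approved"


if __name__ == "__main__":
    terms = {"guaranteed returns", "risk-free"}
    print(review_decision("Our new savings plan is here", "finserv", terms))
```

The ordering matters: blocklist hits are rejected outright, so human reviewers only spend time on scripts that have already cleared the automated filter.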

Source: Grant Thornton: India's IT Rules and AI Responsibility; C2PA Technical Specifications

6. Execution Checklist: Your Path to Compliance

To ensure your brand stays on the right side of the law, follow this checklist for every campaign:

Pre-Production: The Legal Foundation

- DPDP Audit: Have you mapped the data flow? Is the consent notice available in the user's regional language?

- Rights Clearance: Do you have written “AI usage” rights for every face and voice appearing in the video?

- Purpose Binding: Is the data being used only for the specific purpose the user agreed to?

Production: Technical Guardrails

- Labeling: Is the “AI-Generated” watermark visible and contrasting?

- Metadata: Does the file export include C2PA-compliant provenance data?

- Moderation: Has the script been run through a profanity and “hallucination” filter?

Post-Launch: Monitoring and Redressal

- Takedown SLA: Is there a team ready to respond to a legal takedown order within 120 minutes, the strict end of the 2-to-3-hour window?

- Audit Trail: Are you storing a “Compliance Pack” (consent logs, original prompts, and metadata hashes) for at least 5 years?
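The “Compliance Pack” in the audit-trail item can be represented as a single record with a computed retention date. This is a sketch under assumptions: the `CompliancePack` class and its fields are hypothetical, and the 5-year default simply mirrors the checklist item above.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CompliancePack:
    """Hypothetical per-campaign bundle of audit evidence."""
    campaign_id: str
    created_on: date
    consent_log_ids: list[str] = field(default_factory=list)
    prompts: list[str] = field(default_factory=list)
    metadata_hashes: list[str] = field(default_factory=list)

    def retain_until(self, years: int = 5) -> date:
        """Earliest date the pack may be deleted under an N-year policy."""
        target_year = self.created_on.year + years
        try:
            return self.created_on.replace(year=target_year)
        except ValueError:
            # Created on 29 February and the target year is not a leap year.
            return self.created_on.replace(year=target_year, day=28)

    def is_complete(self) -> bool:
        """True only if all three kinds of evidence are present."""
        return bool(self.consent_log_ids and self.prompts and self.metadata_hashes)
```

A scheduled job could compare `retain_until()` against today's date before purging anything, and flag packs where `is_complete()` is false while the campaign is still live.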

2026 Compliance Statistics at a Glance

| Metric | 2026 Figure |
|---|---|
| AI Adoption in Indian Marketing | 73% of enterprises |
| Projected Digital Ad CAGR (to 2029) | 15% |
| Max DPDP Penalty | ₹250 Crore |
| Mandatory Takedown Window | 2–3 Hours |
| ROI Multiplier for Localized AI Video | 3.5x |

Conclusion

As we move through 2026, the intersection of AI and marketing is no longer a legal “Wild West.” The combination of the DPDP Act, the IT Rules, and ASCI guidelines has created a structured environment where transparency is the currency of trust. By adopting a “compliance-first” mindset and leveraging enterprise-grade tools, brands can pursue the 3.5x ROI reported for localized AI video while keeping their regulatory risk under control.

Sources:

- MeitY: Digital Personal Data Protection Act 2023 Official Text

- ASCI: Generative AI in Advertising Whitepaper

- Delhi High Court: Anil Kapoor Personality Rights Order

- MMA Global: State of AI in Marketing India Report

- C2PA: Content Provenance and Authenticity Standards

Frequently Asked Questions

Can we use “public figure soundalikes” if we don’t mention the celebrity’s name?

No. Under the current interpretation of personality rights in India (e.g., the Anil Kapoor and Bachchan cases), using a voice or likeness that is “confusingly similar” for commercial purposes without a license is a violation. It can lead to immediate injunctions and damages for “passing off.”

Does the DPDP Act require consent for AI videos that don’t use “personal” data?

If the video is generic and does not use any user-specific data (like their name or location), Section 6 consent may not be required. However, if you are using tracking cookies to serve that video or personalizing any part of the content, you must obtain “free and specific” consent.

What happens if a social media platform incorrectly flags our human-shot video as AI?

This is why provenance metadata is vital. By maintaining a clear audit trail and using platforms that embed “human-shot” metadata, you can appeal these flags with evidence. Always keep your original raw footage as a backup for legal verification.

Are there specific rules for AI videos targeting children?

Yes. The DPDP Act requires verifiable parental consent for processing the data of minors. Furthermore, ASCI and the IT Rules have stricter duty-of-care requirements for content that could be viewed by children, including mandatory age-gating for certain types of synthetic media.

How do we handle “Consent Withdrawal” in a personalized video campaign?

You must provide a simple, one-click way for users to revoke consent. Once revoked, you must cease processing their data and, where feasible, delete the personalized video from your active servers. Studio by TrueFan AI integrates with consent managers to automate this “right to be forgotten” workflow, ensuring you remain compliant without manual intervention.

Is a simple “AI” watermark enough to satisfy ASCI?

Not necessarily. ASCI requires the disclosure to be “prominent and legible.” A tiny, transparent watermark in the corner that is covered by a platform logo will likely be flagged as non-compliant. The disclosure should be clear (e.g., “AI-Generated Content”) and placed in a safe zone.